Chris Pollett > Students > Pundi Muralidharan


[Bio]

[Blog]

[Deliverable 1 - WebGL Program: The Logic Behind]

[Deliverable 2 - The Study of OSM Data and Vector Tiles]

[Deliverable 3 - Importing OSM Data into Postgres Database]

[Deliverable 4 - Study of Current Tile Generators in Trend]

[CS 298 Report-Intermediate - PDF]

[CS 298 - Summary of Intermediate Results - PDF]

[CS 298 Project Source Code - ZIP]

Deliverable 1 - WebGL Program: The Logic Behind

Description: This deliverable was to create image texture maps using WebGL. Texture mapping refers to the process of applying (mapping) a texture image to the surface of an object. It is used to give surfaces the look and feel of real-world objects in cases where specifying and interpolating colors by hand would be tedious. The pixels that make up the texture image are called texels (texture elements), and each texel encodes its color information in RGB or RGBA format. Texture mapping affects both the vertex and fragment shaders (when a shader program is used): texture coordinates are assigned to each vertex, and each fragment is then given the color of the corresponding texel extracted from the texture image. Here, two images, smiley.jpg and reachup.jpg, are used to create the textures, and they are overlapped onto each other to create a new texture. This deliverable is an effort to convert the HelloWorldOpenGL program from the book "Foundations of 3D Computer Graphics" by Steven J. Gortler into a corresponding WebGL program.
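To make the texel idea concrete, here is a minimal sketch (not part of the deliverable) of nearest-texel lookup: it converts a texture coordinate (s, t) in [0, 1] into a row and column of an RGBA byte array and returns that texel's color. The helper name `sampleNearest`, the row-major layout with row 0 at the bottom (as after the Y-flip used later in loadTexture()), and the clamping behavior are assumptions for illustration. The program itself sets `gl.TEXTURE_MIN_FILTER` to `gl.LINEAR`, which blends neighboring texels instead of picking one.

```javascript
// Hypothetical helper: nearest-texel lookup in a tightly packed RGBA8 image.
// Assumes row 0 is the bottom row (t = 0), matching WebGL texture space
// once the image has been flipped with UNPACK_FLIP_Y_WEBGL.
function sampleNearest(pixels, width, height, s, t) {
  // Clamp coordinates to [0, 1], similar in spirit to CLAMP_TO_EDGE.
  const cs = Math.min(Math.max(s, 0), 1);
  const ct = Math.min(Math.max(t, 0), 1);
  // Map [0, 1] onto texel indices 0..width-1 and 0..height-1.
  const col = Math.min(Math.floor(cs * width), width - 1);
  const row = Math.min(Math.floor(ct * height), height - 1);
  const i = (row * width + col) * 4; // 4 bytes (R, G, B, A) per texel
  return [pixels[i], pixels[i + 1], pixels[i + 2], pixels[i + 3]];
}

// A 2 x 2 texture: bottom row red, green; top row blue, white.
const tex = new Uint8Array([
  255, 0, 0, 255,   0, 255, 0, 255,
  0, 0, 255, 255,   255, 255, 255, 255,
]);
console.log(sampleNearest(tex, 2, 2, 0.25, 0.25)); // bottom-left texel (red)
console.log(sampleNearest(tex, 2, 2, 0.75, 0.75)); // top-right texel (white)
```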

Explanation of code: The deliverable consists of three files: HelloWorldWebGL.html, HelloWorldWebGL.js, and shader-utils.js. The HTML file defines the canvas on which the texture-mapped images are drawn and specifies the URLs of the JavaScript files to load. HelloWorldWebGL.html contains the following code:

```html
<!DOCTYPE html>
<html lang="en">
  <head>
    <meta charset="utf-8" />
    <title>Hello World WebGL</title>
  </head>
  <body onload="main()">
    <canvas id="myWebGLContext" width="512" height="512">
    </canvas>
    <script src="webgl-debug.js"></script>
    <script src="webgl-utils.js"></script>
    <script src="shader-utils.js"></script>
    <script src="HelloWorldWebGL.js"></script>
  </body>
</html>
```

The HTML code above defines the canvas on which the WebGL drawing is done. The canvas has the id myWebGLContext and is 512 x 512 pixels in size. The HTML code also specifies the URLs of the external JavaScript files webgl-debug.js, webgl-utils.js, shader-utils.js, and HelloWorldWebGL.js, and the body's onload attribute calls main() once the page has loaded.

WebGL reports errors through gl.getError(), which has to be called and its result checked explicitly. Putting a getError() call after every WebGL function call is tedious and adds many redundant lines of code, so the program includes webgl-debug.js, a small library that makes this easier.
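The idea behind such a debugging wrapper can be sketched in a few lines. The version below is not the code of webgl-debug.js (which offers a ready-made wrapper through `WebGLDebugUtils.makeDebugContext()`); it is a hypothetical `withErrorChecks` helper that uses a JavaScript `Proxy` to call `getError()` after every method call and report anything other than `NO_ERROR`. The mock context is an assumption so the sketch runs without a browser.

```javascript
// Minimal sketch (not webgl-debug.js itself): wrap a WebGL-like context so
// that every method call is followed by a getError() check.
function withErrorChecks(gl, onError) {
  return new Proxy(gl, {
    get(target, prop) {
      const value = target[prop];
      // Constants and plain properties pass through unchanged.
      if (typeof value !== "function") return value;
      // getError itself must not be wrapped, or it would consume the error.
      if (prop === "getError") return value.bind(target);
      return (...args) => {
        const result = value.apply(target, args);
        const err = target.getError();
        if (err !== target.NO_ERROR) onError(String(prop), err, args);
        return result;
      };
    },
  });
}

// Hypothetical mock context: bindBuffer "fails" when given null.
const mockGL = {
  NO_ERROR: 0,
  INVALID_OPERATION: 0x0502,
  _error: 0,
  getError() { const e = this._error; this._error = this.NO_ERROR; return e; },
  bindBuffer(target, buffer) { if (buffer === null) this._error = this.INVALID_OPERATION; },
};

const errors = [];
const gl = withErrorChecks(mockGL, (name, err) => errors.push(`${name}: 0x${err.toString(16)}`));
gl.bindBuffer(1, {});   // no error reported
gl.bindBuffer(1, null); // the mock raises INVALID_OPERATION, so one entry is recorded
```

In a real page, the wrapped context would be created once right after obtaining `gl`, and only during development, since the extra `getError()` calls slow rendering down.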

The webgl-utils.js file contains helper functions that nearly every WebGL program needs in some form. For example, it contains functions for setting up a WebGL context and messages to display depending on whether or not the browser supports WebGL.
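A context-setup helper of this kind can be sketched as follows. This is a hypothetical `getContextWithFallback` function written in the spirit of webgl-utils.js, not its actual code: it asks the canvas for the standard `"webgl"` context, falls back to `"experimental-webgl"` (the name used by early browser implementations), and reports a failure message otherwise. The failure callback is an assumption so that the page can decide how to show the message.

```javascript
// Hypothetical helper in the spirit of webgl-utils.js (not its actual code).
function getContextWithFallback(canvas, onFailure) {
  for (const name of ["webgl", "experimental-webgl"]) {
    let ctx = null;
    try {
      ctx = canvas.getContext(name);
    } catch (e) {
      // Treat an exception the same as "context not available".
    }
    if (ctx) {
      return ctx;
    }
  }
  // Neither context name worked: report it so the page can show a message.
  onFailure("This browser does not appear to support WebGL.");
  return null;
}

// In the page, main() could start with:
//   const canvas = document.getElementById("myWebGLContext");
//   const gl = getContextWithFallback(canvas, (msg) => alert(msg));
```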

The following are the vertex and fragment shaders used in the program.

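```javascript
var VSHADER_SOURCE =
  'attribute vec4 a_Position;\n' +
  'attribute vec2 a_TexCoord;\n' +
  'varying vec2 v_TexCoord;\n' +
  'void main() {\n' +
  ' gl_Position = a_Position;\n' +
  ' v_TexCoord = a_TexCoord;\n' +
  '}\n';
```

This code defines the vertex shader. It passes each vertex position through to gl_Position and copies the vertex's texture coordinate from the attribute a_TexCoord into the varying variable v_TexCoord. The rasterizer interpolates v_TexCoord across the shape and passes the interpolated values on to the fragment shader. The fragment shader has the following code: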

```javascript
var FSHADER_SOURCE =
  '#ifdef GL_ES\n' +
  'precision mediump float;\n' +
  '#endif\n' +
  'uniform sampler2D u_Sampler0;\n' +
  'uniform sampler2D u_Sampler1;\n' +
  'varying vec2 v_TexCoord;\n' +
  'void main() {\n' +
  ' vec4 color0 = texture2D(u_Sampler0, v_TexCoord);\n' +
  ' vec4 color1 = texture2D(u_Sampler1, v_TexCoord);\n' +
  ' gl_FragColor = color0 * color1;\n' +
  '}\n';
```

For each fragment, this shader samples both textures (through the sampler uniforms u_Sampler0 and u_Sampler1) at the interpolated texture coordinate v_TexCoord and multiplies the two colors component by component, which pastes the combined texture images onto the specified geometric shape. The texture coordinates themselves are specified in the initVertexBuffers() method.

```javascript
function initVertexBuffers(gl) {
  var verticesTexCoords = new Float32Array([
    // Vertex coordinate, Texture coordinate
    -0.5,  0.5,   0.0, 1.0,
    -0.5, -0.5,   0.0, 0.0,
     0.5,  0.5,   1.0, 1.0,
     0.5, -0.5,   1.0, 0.0,
  ]);
  var n = 4; // Number of vertices
```

In these lines, each vertex position is listed next to its texture coordinate: the vertex at (-0.5, 0.5) is mapped to the texture coordinate (0.0, 1.0), the vertex at (-0.5, -0.5) to (0.0, 0.0), and so on. The following lines then create a buffer object and write the interleaved vertex data into it, so that the vertex shader can read it:

```javascript
  // Create the buffer object
  var vertexTexCoordBuffer = gl.createBuffer();
  if (!vertexTexCoordBuffer) {
    console.log('Failed to create the buffer object');
    return -1;
  }
  // Bind the buffer object and write the interleaved vertex data into it
  gl.bindBuffer(gl.ARRAY_BUFFER, vertexTexCoordBuffer);
  gl.bufferData(gl.ARRAY_BUFFER, verticesTexCoords, gl.STATIC_DRAW);
```

The next step is to get the storage locations of the attribute variables a_Position and a_TexCoord and assign the buffer object to each of them. The following lines of code achieve that:

```javascript
  var FSIZE = verticesTexCoords.BYTES_PER_ELEMENT; // Bytes per float (4)
  // Get the storage location of a_Position, assign and enable the buffer
  var a_Position = gl.getAttribLocation(gl.program, 'a_Position');
  if (a_Position < 0) {
    console.log('Failed to get the storage location of a_Position');
    return -1;
  }
  // 2 components per vertex, stride of 4 floats, starting at byte 0.
  // a_Position is a vec4, so the missing z and w default to 0.0 and 1.0.
  gl.vertexAttribPointer(a_Position, 2, gl.FLOAT, false, FSIZE * 4, 0);
  gl.enableVertexAttribArray(a_Position); // Enable the assignment of the buffer object
  // Get the storage location of a_TexCoord
  var a_TexCoord = gl.getAttribLocation(gl.program, 'a_TexCoord');
  if (a_TexCoord < 0) {
    console.log('Failed to get the storage location of a_TexCoord');
    return -1;
  }
  // 2 components per vertex, same stride, starting 2 floats into each vertex
  gl.vertexAttribPointer(a_TexCoord, 2, gl.FLOAT, false, FSIZE * 4, FSIZE * 2);
  gl.enableVertexAttribArray(a_TexCoord); // Enable the buffer assignment
```
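The two `vertexAttribPointer()` calls read the same interleaved array with different offsets. The sketch below mimics that fetch in plain JavaScript: each vertex occupies 4 floats (x, y, s, t), so the stride is `FSIZE * 4` bytes, `a_Position` starts at byte 0, and `a_TexCoord` starts at byte `FSIZE * 2`. The helper `readAttribute` is hypothetical and only illustrates how stride and offset (both in bytes) select values; the GPU performs this step itself.

```javascript
// Same interleaved data as in initVertexBuffers(): x, y, s, t per vertex.
const verticesTexCoords = new Float32Array([
  -0.5,  0.5,   0.0, 1.0,
  -0.5, -0.5,   0.0, 0.0,
   0.5,  0.5,   1.0, 1.0,
   0.5, -0.5,   1.0, 0.0,
]);
const FSIZE = verticesTexCoords.BYTES_PER_ELEMENT; // 4 bytes per float

// Hypothetical helper: collect `size` components per vertex, where
// strideBytes and offsetBytes have the same meaning as in vertexAttribPointer().
function readAttribute(array, size, strideBytes, offsetBytes, vertexCount) {
  const bytesPerElement = array.BYTES_PER_ELEMENT;
  const result = [];
  for (let v = 0; v < vertexCount; v++) {
    // Convert this vertex's byte position into an index into the typed array.
    const start = (v * strideBytes + offsetBytes) / bytesPerElement;
    result.push(Array.from(array.subarray(start, start + size)));
  }
  return result;
}

const positions = readAttribute(verticesTexCoords, 2, FSIZE * 4, 0, 4);
const texCoords = readAttribute(verticesTexCoords, 2, FSIZE * 4, FSIZE * 2, 4);
console.log(positions[0], texCoords[0]); // first vertex: [-0.5, 0.5] and [0, 1]
```

Interleaving keeps each vertex's position and texture coordinate next to each other in memory, so one buffer and one `bufferData()` call serve both attributes.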

The next step is to prepare the texture images for loading and to request that the browser read them. This is done in the initTextures() method:

```javascript
var texture0 = gl.createTexture();
var texture1 = gl.createTexture();
if (!texture0 || !texture1) {
  console.log('Failed to create the texture object');
  return false;
}
```

The above code creates two texture objects with gl.createTexture(), one for managing each texture image in the WebGL system. The lines below get the storage locations of the uniform variables u_Sampler0 and u_Sampler1 with gl.getUniformLocation(); these are used to pass the textures to the fragment shader:

```javascript
var u_Sampler0 = gl.getUniformLocation(gl.program, 'u_Sampler0');
var u_Sampler1 = gl.getUniformLocation(gl.program, 'u_Sampler1');
if (!u_Sampler0 || !u_Sampler1) {
  console.log('Failed to get the storage location of u_Sampler');
  return false;
}
```
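A sampler uniform such as u_Sampler0 is set to a texture unit index, not to a texture object: the texture object is bound to whichever unit is active, and the shader reads from the unit named by the uniform. The sketch below records the calls a two-texture setup makes against a mock context. The mock, the helper `bindToUnit`, and the recorded-call format are hypothetical; `activeTexture`, `bindTexture`, `uniform1i`, and the `TEXTURE0 + unit` enum arithmetic are standard WebGL (the unit enums are consecutive).

```javascript
// Mock context that records calls instead of talking to a GPU.
// TEXTURE0 (0x84C0) and TEXTURE_2D (0x0DE1) use the real WebGL enum values.
function makeRecordingContext() {
  const calls = [];
  return {
    TEXTURE0: 0x84c0,
    TEXTURE_2D: 0x0de1,
    calls,
    activeTexture(unit) { calls.push(["activeTexture", unit]); },
    bindTexture(target, tex) { calls.push(["bindTexture", target, tex]); },
    uniform1i(location, value) { calls.push(["uniform1i", location, value]); },
  };
}

// Hypothetical helper: attach `texture` to texture unit `unit` and point the
// sampler uniform at that unit.
function bindToUnit(gl, texture, samplerLocation, unit) {
  gl.activeTexture(gl.TEXTURE0 + unit);   // select texture unit `unit`
  gl.bindTexture(gl.TEXTURE_2D, texture); // attach the texture to that unit
  gl.uniform1i(samplerLocation, unit);    // the sampler stores the unit index
}

const gl = makeRecordingContext();
bindToUnit(gl, "texture0", "u_Sampler0", 0);
bindToUnit(gl, "texture1", "u_Sampler1", 1);
```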

Next, the browser has to be asked to load the images that will be mapped to the rectangle, which requires an Image object for each of them. The following lines of code illustrate this:

```javascript
var image0 = new Image();
var image1 = new Image();
if (!image0 || !image1) {
  console.log('Failed to create the image object');
  return false;
}
// Register the event handler to be called when image loading is completed
image0.onload = function(){ loadTexture(gl, n, texture0, u_Sampler0, image0, 0); };
image1.onload = function(){ loadTexture(gl, n, texture1, u_Sampler1, image1, 1); };
// Tell the browser to load an Image
image0.src = 'smiley.jpg';
image1.src = 'reachup.jpg';
```
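When a browser decodes an image, the top row of pixels comes first, whereas WebGL texture coordinates place t = 0 at the bottom of the texture. This is why the loadTexture() function calls gl.pixelStorei(gl.UNPACK_FLIP_Y_WEBGL, 1) before uploading each image. The sketch below shows the row reordering that this flag asks WebGL to perform during subsequent texImage2D() uploads; the helper `flipRowsRGBA` and the tiny 1 x 2 image are hypothetical.

```javascript
// Reverse the row order of a tightly packed RGBA8 image, as
// UNPACK_FLIP_Y_WEBGL does during upload.
function flipRowsRGBA(pixels, width, height) {
  const rowBytes = width * 4; // 4 bytes (RGBA) per pixel
  const flipped = new Uint8Array(pixels.length);
  for (let row = 0; row < height; row++) {
    const src = row * rowBytes;
    const dst = (height - 1 - row) * rowBytes;
    flipped.set(pixels.subarray(src, src + rowBytes), dst);
  }
  return flipped;
}

// 1 x 2 image as a browser stores it: top pixel red, then bottom pixel blue.
const img = new Uint8Array([255, 0, 0, 255, 0, 0, 255, 255]);
const tex = flipRowsRGBA(img, 1, 2);
// tex now starts with the blue pixel, so t = 0 samples the bottom of the image.
```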

Next, the loadTexture() function, which runs when an image has finished loading, is modified as follows to work with two texture units:

```javascript
function loadTexture(gl, n, texture, u_Sampler, image, texUnit) {
  gl.pixelStorei(gl.UNPACK_FLIP_Y_WEBGL, 1); // Flip the image's y-axis
  // Make the texture unit active
  // . . .
  // Bind the texture object to the target
  gl.bindTexture(gl.TEXTURE_2D, texture);
  // Set texture parameters
  gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MIN_FILTER, gl.LINEAR);
  // Upload the image into the texture object
  gl.texImage2D(gl.TEXTURE_2D, 0, gl.RGBA, gl.RGBA, gl.UNSIGNED_BYTE, image);
  // . . .
  if (g_texUnit0 && g_texUnit1) {
    gl.drawArrays(gl.TRIANGLE_STRIP, 0, n); // Draw the rectangle
  }
}
```
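The call gl.drawArrays(gl.TRIANGLE_STRIP, 0, n) draws the rectangle from only n = 4 vertices. The sketch below expands a triangle strip into separate triangles: each vertex after the first two forms a triangle with the two vertices before it, and every second triangle has its first two indices swapped so that all triangles keep the same winding (counter-clockwise for this vertex data). `stripToTriangles` is a hypothetical helper; the GPU does this assembly internally.

```javascript
// Expand a TRIANGLE_STRIP of `count` vertices starting at `first` into
// count - 2 triangles with consistent winding.
function stripToTriangles(first, count) {
  const triangles = [];
  for (let i = 0; i + 2 < count; i++) {
    const a = first + i;
    const b = first + i + 1;
    const c = first + i + 2;
    // Swap the first two indices on odd triangles to preserve winding.
    triangles.push(i % 2 === 0 ? [a, b, c] : [b, a, c]);
  }
  return triangles;
}

console.log(stripToTriangles(0, 4)); // [[0, 1, 2], [2, 1, 3]]: two triangles form the rectangle
```

With the vertex order used in initVertexBuffers() (top-left, bottom-left, top-right, bottom-right), these two triangles share the diagonal from vertex 1 to vertex 2 and together cover the whole rectangle.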

In loadTexture(), it is difficult to predict which texture image will be loaded first, because the browser loads the images asynchronously. The sample program handles this by drawing only after both textures have been loaded. To do this, it uses two global variables, g_texUnit0 and g_texUnit1, that indicate which textures have been loaded. These variables are initialized to false and set to true in an if statement that checks texUnit, the last parameter passed to loadTexture(). If texUnit is 0, texture unit 0 is made active and g_texUnit0 is set to true; if it is 1, texture unit 1 is made active and g_texUnit1 is set to true. After that, gl.uniform1i(u_Sampler, texUnit) sets the texture unit number in the sampler uniform variable.
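The "draw only when both images have arrived" logic can be sketched independently of WebGL. Instead of two named global flags, this hypothetical `makeLoadGate` helper (not part of the deliverable) tracks a set of loaded texture units and runs a callback exactly once, when the last expected unit reports in, regardless of the order in which the browser delivers the images.

```javascript
// Hypothetical generalization of the g_texUnit0 / g_texUnit1 flags: call
// markLoaded(unit) from each image's load handler; onAllLoaded runs once,
// after every expected unit has reported in, in whatever order they arrive.
function makeLoadGate(expectedUnits, onAllLoaded) {
  const loaded = new Set();
  let fired = false;
  return function markLoaded(unit) {
    if (!expectedUnits.includes(unit)) {
      throw new Error(`unexpected texture unit ${unit}`);
    }
    loaded.add(unit);
    if (!fired && loaded.size === expectedUnits.length) {
      fired = true;
      onAllLoaded();
    }
  };
}

let draws = 0;
// In the program, the callback would be where gl.drawArrays() is called.
const markLoaded = makeLoadGate([0, 1], () => { draws += 1; });
markLoaded(1); // reachup.jpg happens to finish first: nothing is drawn yet
markLoaded(0); // smiley.jpg finishes: the callback runs now
```

Using a set makes the gate work for any number of textures and ignores a unit reporting twice, which two boolean flags handle only for exactly two images.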

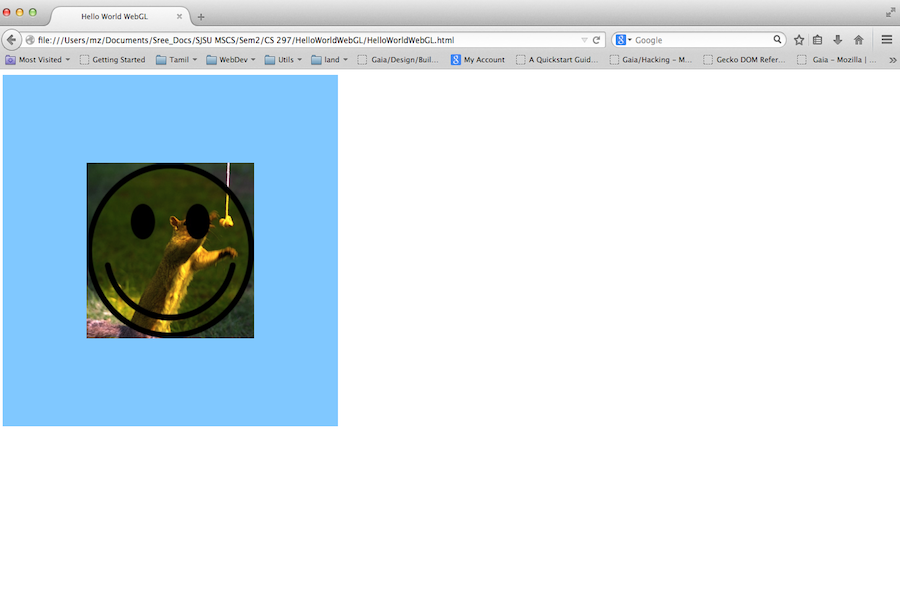

Finally, the program invokes the vertex shader after checking whether both texture images are available using g_texUnit0 and g_texUnit1. The images are then combined as they are mapped to the shape, resulting in the screenshot as follows:

Figure: Screenshot of the WebGL program that uses texture-mapping
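The combination itself happens in the fragment shader line gl_FragColor = color0 * color1;, where GLSL multiplies the two vec4 values component by component. The sketch below reproduces that arithmetic on 8-bit RGBA texels, which texture2D() returns normalized to [0, 1]; `toUnit` and `modulate` are hypothetical helper names. Because every factor is at most 1, the product is never brighter than either input: white areas of one image let the other show through unchanged, and black areas stay black.

```javascript
// Convert an 8-bit RGBA texel to the [0, 1] floats texture2D() returns.
function toUnit(texel) {
  return texel.map((v) => v / 255);
}

// Component-wise product, like `color0 * color1` on two vec4s in GLSL.
function modulate(color0, color1) {
  return color0.map((v, i) => v * color1[i]);
}

const white = toUnit([255, 255, 255, 255]);
const orange = toUnit([255, 128, 0, 255]);
const black = toUnit([0, 0, 0, 255]);

console.log(modulate(white, orange)); // identical to orange: white leaves the other color unchanged
console.log(modulate(black, orange)); // [0, 0, 0, 1]: black stays black
```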