Char-gramming, Language Processing, Static Inverted Indices

CS267

Chris Pollett

Sep 25, 2019

CS267

Chris Pollett

Sep 25, 2019

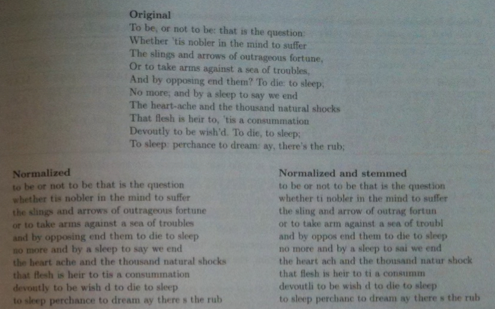

sses -> ss ies -> i ss -> ss s ->All the rules are applied in sequence, if there was a change they are reapplied until no change. The result is the stemmed term.