Outline

- Operational Business Intelligence Systems

- Data Integration Patterns

- In-Class Exercise

- Process Integration

Introduction

- Last day, we said data integration is the general set of techniques that allow us to create a unified view of data from different sources.

- These techniques have evolved over the history of using databases for businesses.

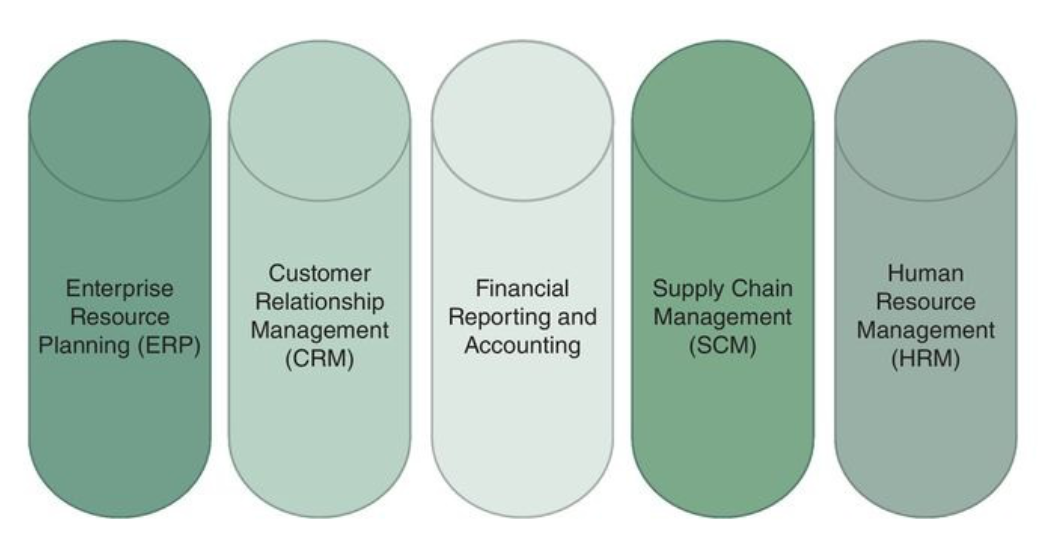

- Originally, it was common that a different database might be used by each different department or organizational unit of a company, creating so-called data silos that couldn't inter-operate with each other (see left figure above).

- These would handle operational processes such as point-of-sale (PoS) storage of product purchases.

- Later, companies tried to combine these silos to support decision aspects of their business (i.e., business intelligence).

- This led to the development of data warehouses in the 1990s, as we saw earlier.

- Data warehouses allow business managers to make decisions that might be useful on the order of days, weeks, or months, but don't allow businesses to react to real-time changes in demand.

- They also tend to support only high-level decisions; they are not suited to targeting individual consumers based on predicted demand.

- This led to the development of operational business intelligence systems - systems where operational and analytic processes are tightly integrated.

- We start today by describing and considering examples of such systems.

Operational Business Intelligence Systems

- Operational BI systems require low or zero latency so unexpected business-altering events and trends can be immediately detected and addressed.

- These systems move more and more of the batch processing involved in ETL to near real-time.

- Some examples of such systems are:

- Executive dashboards that monitor KPIs (key performance indicators, such as production, stock prices, oil prices)

- Business Process Monitoring or Business Activity Monitoring (BAM), which provides tools for the timely detection of anomalies or business opportunities.

- Big Data Analytics often tries to combine traditional operational BI data with internal and external data which can be more or less volatile such as clickstreams, server logs, sensor data, social media feeds, ... (i.e., streaming data.)

- Integrating data across these different kinds of resources at high speed often results in trade-offs between quality-of-service and quality-of-data.

- We next look at integration patterns that are used under different trade-off requirement scenarios.

Data Integration Patterns 1

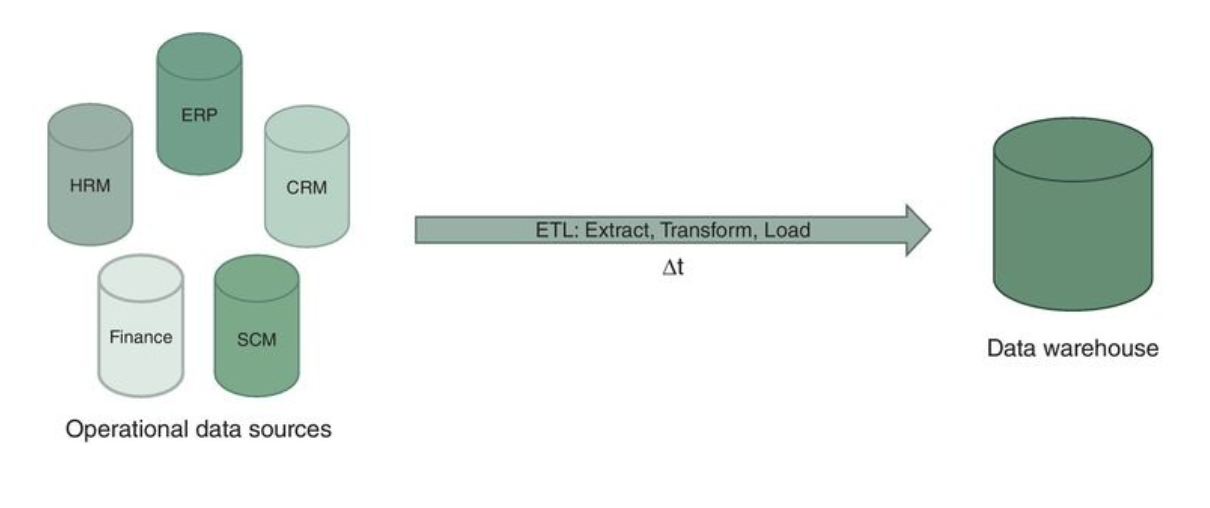

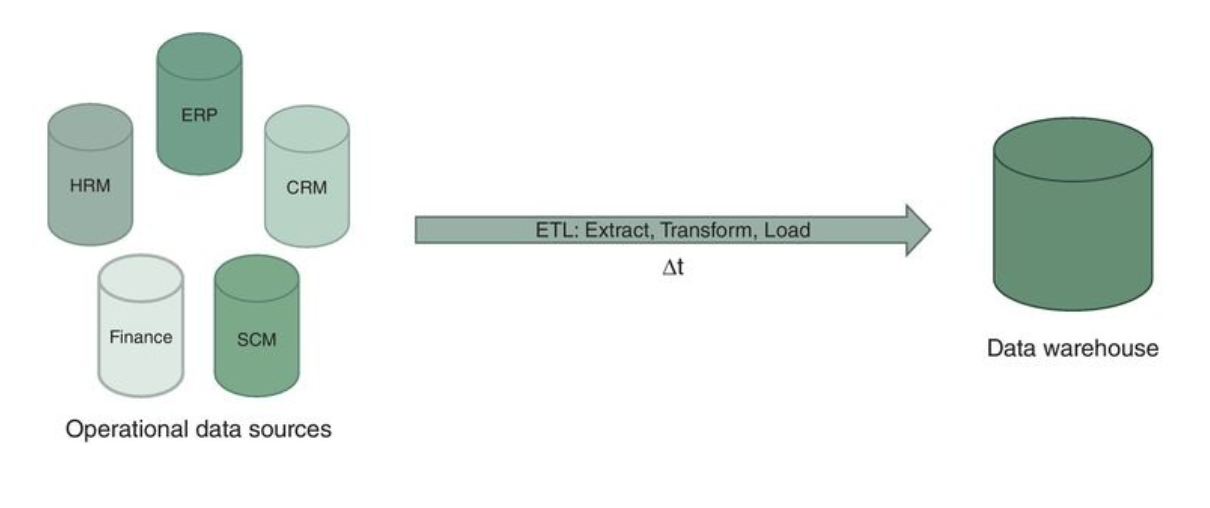

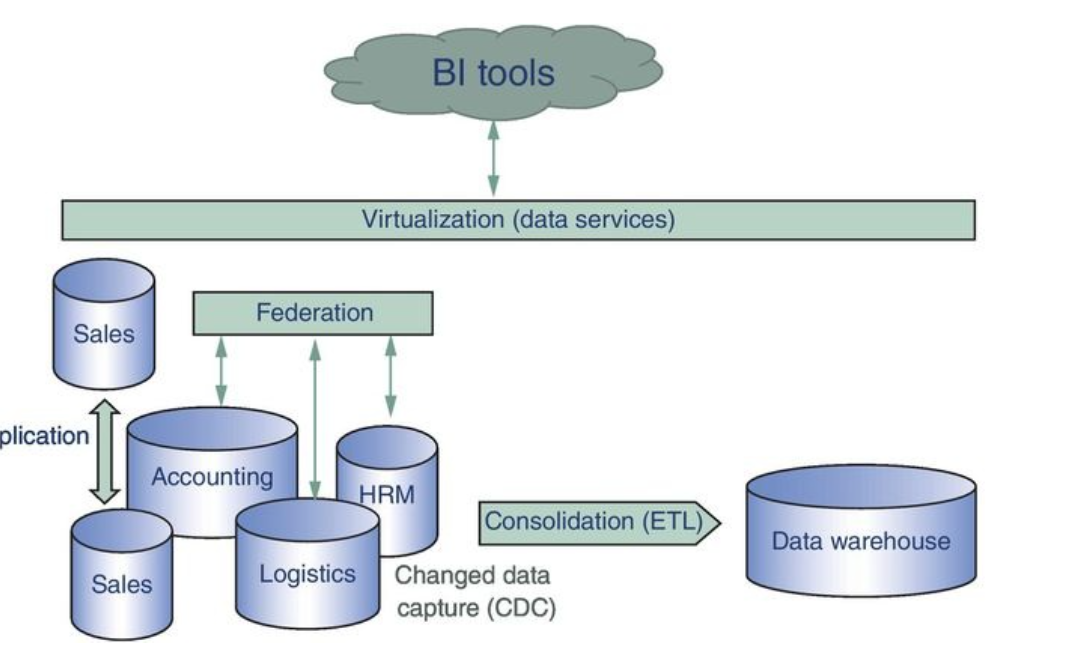

- Data consolidation - capture data from multiple, heterogeneous source systems and integrate it into a single persistent store. This is the data warehouse/data mart approach that we have already considered. It involves an extraction, transformation, and loading (ETL) step, so it tends to have higher latency but good data quality.
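The consolidation pattern can be sketched in a few lines of Python. This is only a minimal illustration, assuming two hypothetical source systems (`pos_sales` and `web_orders`, with made-up schemas and rows) and an in-memory SQLite database standing in for the warehouse.

```python
import sqlite3

# Hypothetical source "silos" with different schemas (illustrative data only).
pos_sales = [  # point-of-sale system: amounts in cents, upper-case SKUs
    {"sku": "A100", "cents": 1250, "day": "2024-01-15"},
    {"sku": "B200", "cents": 400, "day": "2024-01-15"},
]
web_orders = [  # web store: amounts in dollars, lower-case product codes
    {"product": "a100", "total": 12.50, "date": "2024-01-15"},
]

def extract():
    """Pull the raw rows from each source system."""
    return pos_sales, web_orders

def transform(pos_rows, web_rows):
    """Map both source schemas onto one target schema: (sku, amount, day, channel)."""
    unified = [(r["sku"], r["cents"] / 100, r["day"], "pos") for r in pos_rows]
    unified += [(r["product"].upper(), r["total"], r["date"], "web") for r in web_rows]
    return unified

def load(conn, rows):
    """Write the cleaned rows into the single persistent store."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (sku TEXT, amount REAL, day TEXT, channel TEXT)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?, ?)", rows)
    conn.commit()

warehouse = sqlite3.connect(":memory:")  # stands in for the data warehouse
load(warehouse, transform(*extract()))

# Analytic query over the unified view.
totals = dict(
    warehouse.execute("SELECT sku, SUM(amount) FROM sales GROUP BY sku ORDER BY sku")
)
```

The latency mentioned above comes from running `extract`, `transform`, and `load` as a batch: the warehouse only reflects the sources as of the last run.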

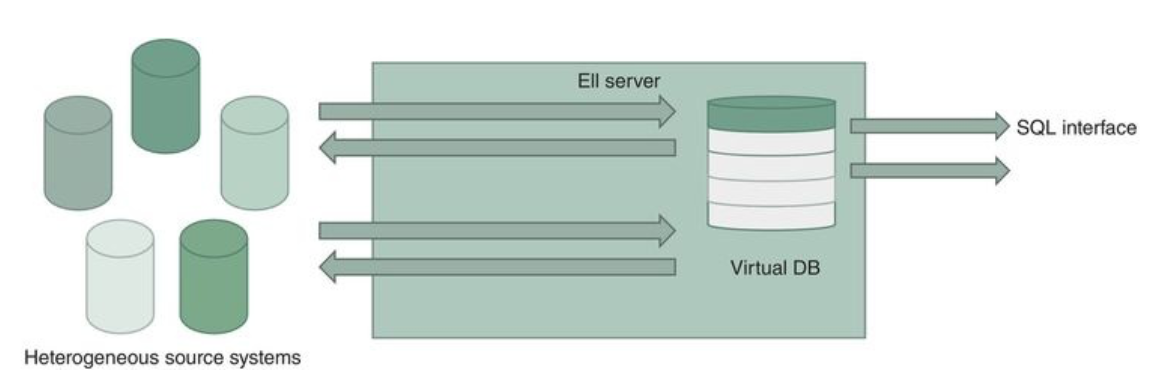

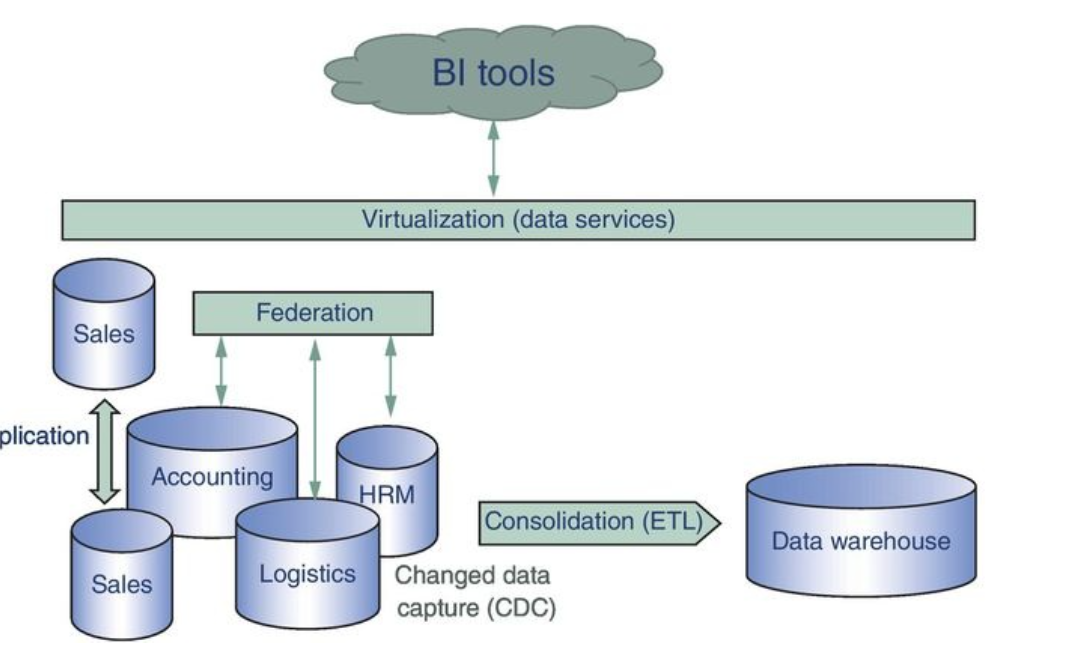

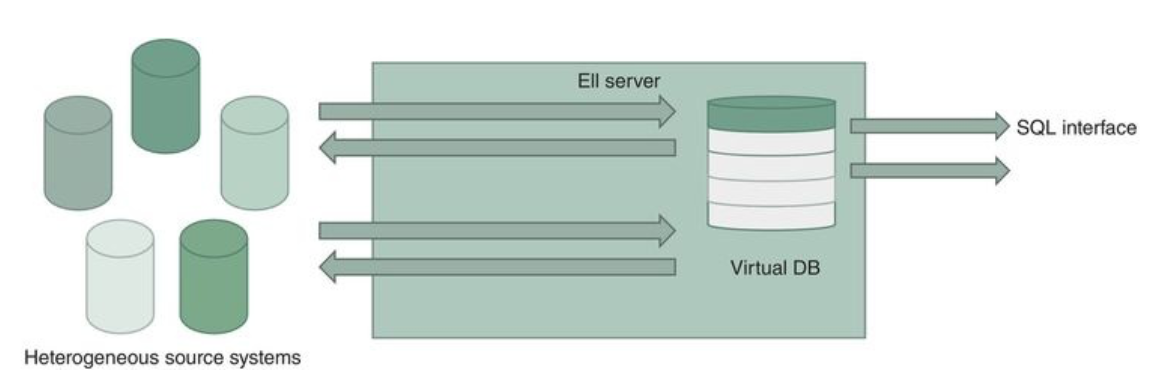

- Data federation - use a pull approach to integrate the underlying systems on an on-demand basis. One example of this is Enterprise information integration (EII), where a virtual business view (often with a SQL interface) is presented on top of the underlying data sources. The internals of these data sources are isolated from the outside world via wrappers. Queries on the business view are translated into queries on the wrapper interfaces and executed on the underlying systems; the results are then returned and combined in the business view. This tends to be slower and does not work well with complex queries.
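As a rough sketch of the federation idea, the Python below puts a wrapper in front of each of two hypothetical sources (`crm` and `billing`, both in-memory SQLite databases with invented tables) and answers a business-view query by pulling from both at query time. The class and function names are assumptions made for illustration, not part of any EII product.

```python
import sqlite3

class SqliteWrapper:
    """Hides one source's schema behind a common fetch_customers() interface."""

    def __init__(self, conn, query):
        self.conn = conn
        self.query = query  # source-specific SQL returning (id, name) rows

    def fetch_customers(self, name_prefix):
        return self.conn.execute(self.query, (name_prefix + "%",)).fetchall()

# Two hypothetical underlying systems with different table and column names.
crm = sqlite3.connect(":memory:")
crm.execute("CREATE TABLE clients (id INTEGER, full_name TEXT)")
crm.executemany("INSERT INTO clients VALUES (?, ?)", [(1, "Ada"), (2, "Bob")])

billing = sqlite3.connect(":memory:")
billing.execute("CREATE TABLE accounts (acct_no INTEGER, holder TEXT)")
billing.executemany("INSERT INTO accounts VALUES (?, ?)", [(7, "Alan"), (8, "Carol")])

wrappers = [
    SqliteWrapper(crm, "SELECT id, full_name FROM clients WHERE full_name LIKE ?"),
    SqliteWrapper(billing, "SELECT acct_no, holder FROM accounts WHERE holder LIKE ?"),
]

def federated_customers(name_prefix):
    """Business view: query every source on demand and combine the results."""
    results = []
    for wrapper in wrappers:
        results.extend(wrapper.fetch_customers(name_prefix))
    return sorted(results, key=lambda row: row[1])

matches = federated_customers("A")
```

Nothing is copied ahead of time, so the answer is always current, but every query pays the cost of visiting each source, which is one reason federation tends to be slower.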

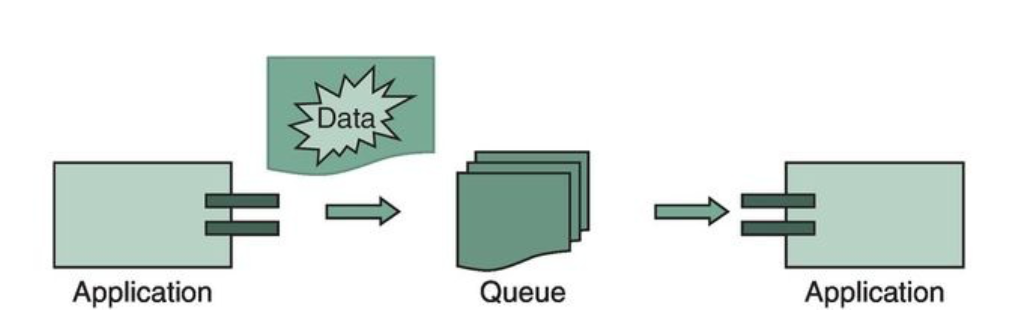

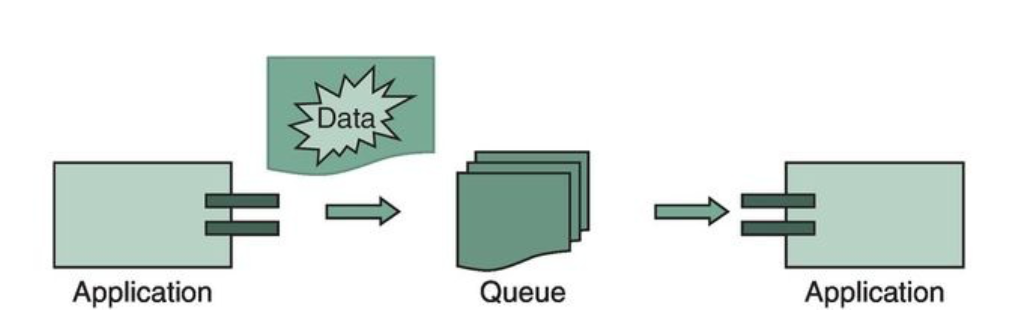

- Data propagation - propagate updates from a source system to a target system, either synchronously or asynchronously. This can be done between two or more enterprise applications (enterprise application integration (EAI)) or between two or more data stores (enterprise data replication (EDR)). An example of EAI might be an order-handling application that triggers an invoicing application. This might be implemented using web services, RPC, or middleware, with data propagated as part of the procedure call. With EDR, the events in the source system are explicitly update events in the data store. These updates need to be copied to a target store to allow for load balancing, availability, and recovery, or for real-time loading of an analytics system.
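The asynchronous flavour of data replication described above might look roughly like the following Python sketch. `SourceStore`, `ReplicaStore`, and `replicate` are hypothetical names invented here; a real system would ship the update log over a network rather than through an in-process queue.

```python
from collections import deque

class SourceStore:
    """Source data store that records every update as an event."""

    def __init__(self):
        self.data = {}
        self.log = deque()  # update events not yet shipped to the replica

    def put(self, key, value):
        self.data[key] = value
        self.log.append((key, value))

class ReplicaStore:
    """Target store that only changes when update events are applied to it."""

    def __init__(self):
        self.data = {}

    def apply(self, key, value):
        self.data[key] = value

def replicate(source, replica):
    """Drain pending update events in order; return how many were applied."""
    applied = 0
    while source.log:
        replica.apply(*source.log.popleft())
        applied += 1
    return applied

source, replica = SourceStore(), ReplicaStore()
source.put("order:1", "placed")
source.put("order:1", "shipped")

stale = dict(replica.data)          # the replica lags until replication runs
count = replicate(source, replica)  # asynchronous catch-up
```

A synchronous variant would call `replica.apply` directly inside `put`, trading write latency on the source for a replica that is never stale.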

Data Integration Patterns 2

- Changed Data Capture (CDC) makes ETL event driven by detecting update events in the source data store and triggering an ETL process based on these updates. One can have more than one listener for different kinds of update events. More advanced systems support complex event processing where the triggering is not caused by single events but by a set of events or non-events in a time window. For example, a flurry of credit card purchases on a card may trigger a fraud detection application.

- Data Virtualization aims to offer a unified data view that applications can use to retrieve and manipulate data without necessarily knowing where the data are stored or how they are structured. This might sit as an abstraction layer above the data integration schemes described so far, so that one can have timely access to combinations of federated and consolidated data. Often these systems offer caching, parallelization of queries, and query optimization to improve response times.

- Service Oriented Architectures (SOAs) support business processes by a set of loosely coupled software services. An integration suite built on this idea might provide data service composition tools that allow these services to be combined and aggregated into a new, composite service. Such suites can provide "in-the-cloud computing". These services often involve hardware, software, and infrastructure provided on demand over a network. Possibilities include:

- Software-as-a-service (SaaS): analytic applications, cleansing, reporting, etc. hosted in the cloud.

- Platform-as-a-service (PaaS): computing platform elements are hosted in the cloud and can be integrated with one's own applications (for example, key-value stores in the cloud such as Amazon S3).

- Infrastructure-as-a-service (IaaS): hardware infrastructure offered as virtual machines in the cloud.

- Data-as-a-service (DaaS): data services hosted in the cloud, such as data quality monitoring, cloud-based data integration, etc., allowing one to integrate one's own data with data from external providers.
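To make the Changed Data Capture item above more concrete, here is a small Python sketch of event-driven loading with two listeners, one of which does a simple form of complex event processing over a time window. `ChangeFeed`, `FraudDetector`, the threshold of three purchases, and the 60-second window are all assumptions chosen for illustration.

```python
from collections import defaultdict, deque

class ChangeFeed:
    """Dispatches update events from a source store to registered listeners."""

    def __init__(self):
        self.listeners = defaultdict(list)

    def subscribe(self, event_type, listener):
        self.listeners[event_type].append(listener)

    def publish(self, event_type, event):
        for listener in self.listeners[event_type]:
            listener(event)

class FraudDetector:
    """Flags a card once `threshold` purchases fall within `window` seconds."""

    def __init__(self, threshold=3, window=60):
        self.threshold = threshold
        self.window = window
        self.recent = defaultdict(deque)  # card -> timestamps still in the window
        self.alerts = []

    def on_purchase(self, event):
        times = self.recent[event["card"]]
        times.append(event["ts"])
        # Drop purchases that have fallen out of the time window.
        while times and event["ts"] - times[0] > self.window:
            times.popleft()
        if len(times) >= self.threshold:
            self.alerts.append((event["card"], event["ts"]))

warehouse_rows = []  # stands in for the target of the event-driven load step
feed = ChangeFeed()
detector = FraudDetector()
feed.subscribe("purchase", warehouse_rows.append)  # listener 1: load each change
feed.subscribe("purchase", detector.on_purchase)   # listener 2: windowed pattern

purchases = [(0, "card-A"), (10, "card-B"), (20, "card-A"), (30, "card-A"), (200, "card-B")]
for ts, card in purchases:
    feed.publish("purchase", {"card": card, "ts": ts})
```

Here `card-A` triggers an alert on its third purchase within a minute, while the two widely spaced `card-B` purchases do not; every purchase is still loaded into `warehouse_rows` as it happens.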

In-Class Exercise

- Consider the problems of keyword advertising on a search engine and product suggestion on a web store.

- Suggest which data integration techniques might be relevant to coding these applications and why.

- Please post your solution to the Nov 18 In-Class Exercise Thread.

Data Services, Data Flows, and Process Integration

- Process integration is the integration and harmonization of business processes in an organization.

- A business process is defined to be a set of activities with a certain order that must be executed to reach a certain organizational goal.

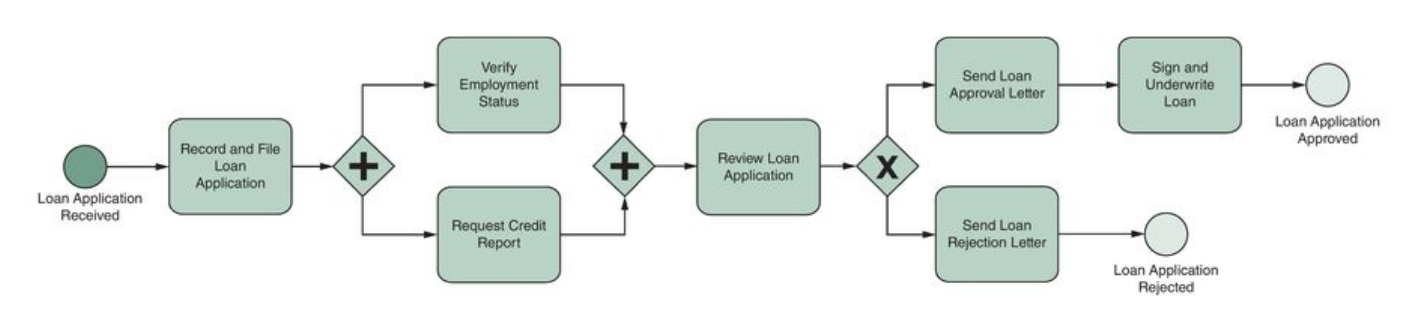

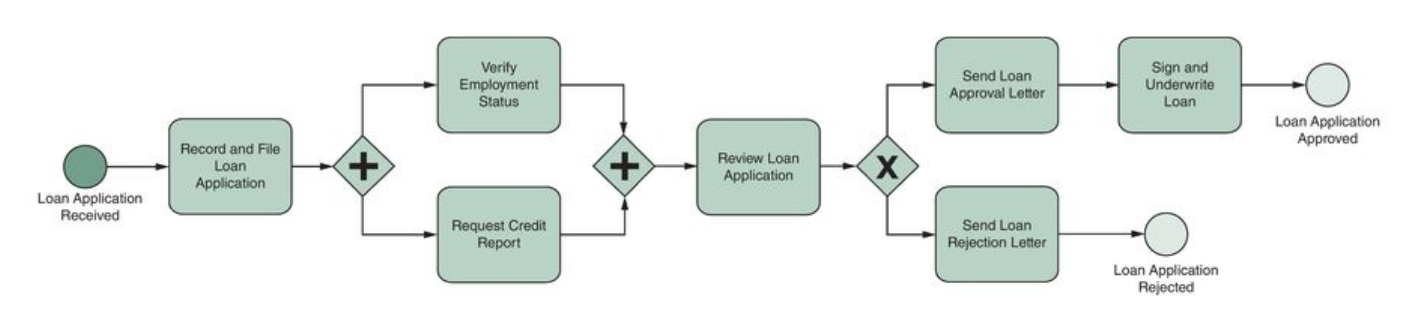

- For example, a loan approval process might consist of tasks such as filing a loan application, calculating a credit score, drafting a loan offer, signing the contract, etc.

- These steps can typically be arranged as a directed acyclic graph that shows where data flows from and to, and what data is involved.

- From an implementation perspective in an information system, one often views task coordination/triggering and task execution as intertwined in the same software code.

- From a service-oriented perspective, one tends to separate services whose job is task coordination from services that perform the actual task execution and from services that provide access to the necessary data.
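The loan approval example can be sketched as a directed acyclic graph in Python, with coordination kept separate from task execution. This assumes Python 3.9+ for the standard-library `graphlib` module; the task functions, the shared `ctx` dictionary, and the placeholder values are hypothetical stand-ins for real services.

```python
from graphlib import TopologicalSorter

# Task execution: each function performs one step and knows nothing about ordering.
def file_application(ctx):
    ctx["application"] = {"amount": 10_000}  # placeholder application data

def calculate_credit_score(ctx):
    ctx["score"] = 700  # placeholder score

def draft_offer(ctx):
    ctx["offer"] = {"amount": ctx["application"]["amount"], "score": ctx["score"]}

def sign_contract(ctx):
    ctx["signed"] = "offer" in ctx

tasks = {
    "file_application": file_application,
    "calculate_credit_score": calculate_credit_score,
    "draft_offer": draft_offer,
    "sign_contract": sign_contract,
}

# Process definition: each task maps to the set of tasks it depends on.
dependencies = {
    "file_application": set(),
    "calculate_credit_score": {"file_application"},
    "draft_offer": {"calculate_credit_score"},
    "sign_contract": {"draft_offer"},
}

def run_process(dependencies, tasks):
    """Task coordination: run each task only after all of its predecessors."""
    ctx, order = {}, []
    for name in TopologicalSorter(dependencies).static_order():
        tasks[name](ctx)
        order.append(name)
    return ctx, order

ctx, order = run_process(dependencies, tasks)
```

Because the ordering lives only in `dependencies`, the process can be rearranged without touching the task functions, which is the separation the service-oriented view aims for.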

Business Process Integration

- The directed acyclic nature of business processes is often modeled using flowchart-like tools such as Business Process Model and Notation (BPMN), Yet Another Workflow Language (YAWL), Unified Modeling Language (UML) Activity Diagrams, Event-driven Process Chain (EPC) diagrams, and so on.

- Above we can see one such diagram (BPMN) for the loan approval process.

- Such a process chart can be used as-is by workers in an ad hoc fashion or it can be used to drive steps of an information system, aiding in the automation of the process at hand.

- Such an automation application is called a process engine.

- These systems are coded using a sequence of declarative steps, often in an XML-based language such as WS-BPEL (Web Services Business Process Execution Language).

- There are a number of design patterns used to develop processes for such systems which we will describe next day.