Enterprise Storage, Business Continuity, Data Warehousing

CS257

Chris Pollett

Nov 4, 2020

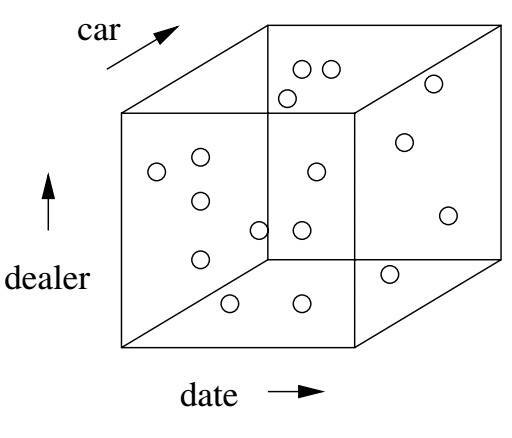

Sales(serialNo, date, dealer, price)

Autos(serialNo, model, color)

Dealers(name, city, state, phone)

SELECT state, AVG(price) FROM Sales, Dealers WHERE Sales.dealer = Dealers.name AND date >= '2006-01-04' GROUP BY state;
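To try the query on a small scale, the following is a minimal sketch that loads the three relations into an in-memory SQLite database and computes the average price per state. Several things here are assumptions and not part of the slides: the column types, the sample rows, the helper names `build_sample_db` and `average_price_by_state`, and the use of SQLite itself. The sketch also assumes a `GROUP BY state` clause, so that one average is produced per state, and zero-padded ISO dates stored as text, which SQLite compares lexicographically.

```python
import sqlite3


def build_sample_db():
    """Create an in-memory database with the three relations and toy rows."""
    conn = sqlite3.connect(":memory:")
    # Column types are assumed; the slides give only attribute names.
    conn.executescript("""
        CREATE TABLE Sales(serialNo TEXT, date TEXT, dealer TEXT, price REAL);
        CREATE TABLE Autos(serialNo TEXT, model TEXT, color TEXT);
        CREATE TABLE Dealers(name TEXT, city TEXT, state TEXT, phone TEXT);
    """)
    # Hypothetical dealers in two states.
    conn.executemany(
        "INSERT INTO Dealers VALUES (?, ?, ?, ?)",
        [
            ("Bay Motors", "San Jose", "CA", "555-0100"),
            ("Valley Autos", "Fresno", "CA", "555-0101"),
            ("Desert Cars", "Reno", "NV", "555-0102"),
        ],
    )
    # Hypothetical sales; the first row falls before the cutoff date.
    conn.executemany(
        "INSERT INTO Sales VALUES (?, ?, ?, ?)",
        [
            ("A1", "2006-01-03", "Bay Motors", 10000.0),
            ("A2", "2006-01-04", "Bay Motors", 20000.0),
            ("A3", "2006-02-10", "Valley Autos", 30000.0),
            ("A4", "2006-03-15", "Desert Cars", 18000.0),
        ],
    )
    # Autos is created for completeness; this query does not read it.
    return conn


def average_price_by_state(conn, since):
    """Return (state, average price) for sales on or after `since`."""
    query = """
        SELECT state, AVG(price)
        FROM Sales, Dealers
        WHERE Sales.dealer = Dealers.name
          AND date >= ?
        GROUP BY state
        ORDER BY state
    """
    return conn.execute(query, (since,)).fetchall()


if __name__ == "__main__":
    conn = build_sample_db()
    for state, avg_price in average_price_by_state(conn, "2006-01-04"):
        print(state, avg_price)
```

With the toy rows above, the sale dated 2006-01-03 is filtered out, so CA averages the two remaining California sales and NV has a single sale. The `ORDER BY state` is added only to make the output order deterministic.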