Outline

- Slidy

- Introduction to Deep Learning

- Quiz

- What is Learning?

Using Slidy

- The slides for this class are HTML files that both validate as HTML5 and pass the WAVE Accessibility Checker.

- They are made to look like slides using a JavaScript library called Slidy.

- The following keystrokes do useful things in Slidy:

- h - help (see all the commands)

- f - fullscreen (gets rid of the links at the bottom of the window)

- space - advance a slide

- left/right arrows - back or forward a slide

- up/down arrows - scroll within a slide

- a - show all slides at once for printing

Introduction to Deep Learning

- Deep learning is a branch of machine learning that focuses on learning algorithms that allow machines to understand the world through a hierarchy of intermediate learned concepts.

- Its ideas have gone by various trendy names in the past, such as cybernetics (1940s-1960s) and connectionism (1980s-1990s), with the term deep learning arising in the mid-2000s.

AI without Deep Learning

- Many successes in artificial intelligence (AI), such as Deep Blue's defeat of world chess champion Garry Kasparov in 1997, did not involve learning intermediate representations. Deep Blue used a brute-force search algorithm together with large lookup tables of endgames.

- Early attempts at giving machines an understanding of the world such as Cyc (Lenat and Guha 1989) involved hand-coding intermediate concepts in a knowledge base.

- Simple machine learning algorithms such as logistic regression (curve fitting where the dependent variable takes the values 0 or 1) or naive Bayes (estimating the conditional probability of a class using Bayes' formula from probability) let one automatically learn a value or make a classification based on hand-coded features.

- These techniques have been successful on problems such as deciding whether to perform a cesarean section (Mor-Yosef, 1990) and classifying email as spam or not spam.

- They do not involve learning what features are important.
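To make learning from hand-coded features concrete, here is a minimal Python sketch of logistic regression fitted by gradient descent. It assumes a tiny made-up spam data set with two hand-picked features per email; the names `train_logistic_regression` and `predict_proba` are illustrative, not from any library. Note that the program only learns the weights; which features to measure is decided by the programmer.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic function: maps any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(weights, bias, features):
    """P(y = 1 | features) under a logistic regression model."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)

def train_logistic_regression(data, labels, lr=0.5, epochs=2000):
    """Fit weights by batch gradient descent on the average log-loss.

    The features are fixed in advance; only the weights are learned.
    """
    n_features = len(data[0])
    weights = [0.0] * n_features
    bias = 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(data, labels):
            error = predict_proba(weights, bias, x) - y  # d(log-loss)/dz
            for j in range(n_features):
                grad_w[j] += error * x[j]
            grad_b += error
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias

# Toy, made-up data. Hand-coded features for each email:
# [times the word "free" appears, 1 if the subject contains "!!!" else 0]
emails = [[3, 1], [2, 1], [4, 0], [0, 0], [1, 0], [0, 1]]
is_spam = [1, 1, 1, 0, 0, 0]

w, b = train_logistic_regression(emails, is_spam)
print(round(predict_proba(w, b, [5, 1]), 3))  # high spam probability
print(round(predict_proba(w, b, [0, 0]), 3))  # low spam probability
```

A naive Bayes classifier would use the same two features but estimate P(spam | features) from counts via Bayes' formula instead of fitting weights by gradient descent.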

Features and Depth of Learning models

- Learning good features, that is, a good representation, is important for many problems. For example, if one doesn't have a counting board or abacus at hand, it is much easier to multiply numbers written as Arabic numerals than numbers written as Roman numerals.

- The sophistication of a network of representations or features can be measured in a couple of ways:

- We can look at the number of sequential (non-parallelizable) operations that need to be executed in order to evaluate the architecture.

- We can view the representations a learning model needs as nodes in a directed graph, where an edge indicates that one representation depends on another. The depth of the model is then the length of the longest path through this graph that must be evaluated to compute the model's output (for models with cycles, such as recurrent networks, this path may go around a cycle several times).

- There is no hard and fast rule as to when an architecture/model becomes deep, but we'll usually assume the model has depth at least 3.

- Two common approaches to learning new features/representations are artificial neural networks and deep probabilistic networks.

- This semester we will focus on artificial neural networks.
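The graph-based way of measuring depth described above can be sketched in Python as a longest-path computation over a dependency graph. The graph below is a hypothetical model with one shallow side branch, and `model_depth` is an illustrative helper; depth is counted in edges from the input.

```python
from functools import lru_cache

# Hypothetical dependency graph: each node lists the representations it is
# computed from. "x" is the input and "y" is the output.
GRAPH = {
    "x": [],
    "h1": ["x"],
    "h2": ["h1"],
    "h3": ["x"],        # a shallow side branch
    "y": ["h2", "h3"],  # the output uses both branches
}

def model_depth(graph, output):
    """Number of edges on the longest dependency path ending at `output`.

    Assumes the graph is acyclic. A recurrent model would first be unrolled
    over time, turning each cycle into a finite chain of steps.
    """
    @lru_cache(maxsize=None)
    def depth(node):
        parents = graph[node]
        if not parents:
            return 0  # an input node has depth 0
        return 1 + max(depth(p) for p in parents)

    return depth(output)

print(model_depth(GRAPH, "y"))  # 3, via x -> h1 -> h2 -> y
```

Counting sequential operations instead (the first measure) can give a different number, for example if each layer is split into a matrix multiply followed by a nonlinearity, which is one reason there is no single agreed depth for a given model.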

Artificial Neurons

- Humans seem capable of learning intermediate representations, so it is little surprise that one approach to getting machines to learn such representations is to mimic what humans do.

- It has been known since Santiago Ramon y Cajal in the 1880s that the basic unit of computation in the nervous system and the brain is the neuron.

- In the cybernetics era, McCulloch and Pitts (1943) proposed a computational model of a neuron and formulated it in terms of the mathematical logic of Whitehead and Russell's Principia Mathematica (1910), the book that takes a few hundred pages to prove `1+1 = 2`. They optimistically hoped that, since neurons could be described mathematically, theorems about psychiatry would follow.
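A simplified McCulloch-Pitts unit can be sketched in a few lines of Python. This sketch assumes binary inputs, a fixed integer threshold, and inhibitory inputs that veto firing outright (a common textbook reading of the 1943 model); the gate names are illustrative. It shows how such units compute logical functions, which is what let McCulloch and Pitts describe neurons in the language of mathematical logic.

```python
def mcculloch_pitts(excitatory, inhibitory, threshold):
    """A McCulloch-Pitts style unit with binary (0/1) inputs.

    Fires (returns 1) when no inhibitory input is active and the number of
    active excitatory inputs reaches the threshold; otherwise returns 0.
    """
    if any(inhibitory):
        return 0
    return 1 if sum(excitatory) >= threshold else 0

def and_gate(a, b):
    return mcculloch_pitts([a, b], [], threshold=2)

def or_gate(a, b):
    return mcculloch_pitts([a, b], [], threshold=1)

def not_gate(a):
    # No excitatory inputs and threshold 0: fires unless `a` inhibits it.
    return mcculloch_pitts([], [a], threshold=0)

for a in (0, 1):
    for b in (0, 1):
        print(a, b, and_gate(a, b), or_gate(a, b))
```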

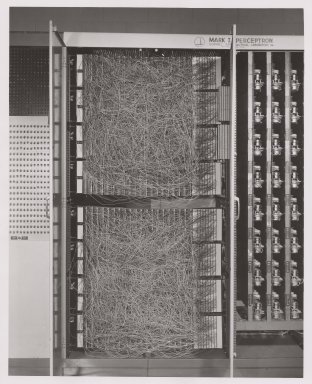

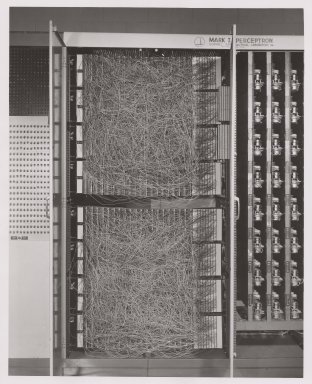

- Rosenblatt, in work published in 1958 and 1962, introduced the simpler perceptron model of a neuron along with the first learning algorithm for it. The image above shows the custom hardware he used to implement a perceptron that recognized simple letter images (such as an X).

- At about the same time, Widrow and Hoff 1960 proposed the ADALINE (adaptive linear element) neural learning algorithm.

- Minsky and Papert 1969 showed that a single layer of perceptrons cannot represent the parity function (for two inputs, this is XOR), so it cannot learn that function from training examples either.
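The perceptron learning rule and the parity limitation can both be seen in a small Python sketch. It assumes the common textbook form of the rule, w <- w + lr * (target - output) * x with a step activation, rather than Rosenblatt's exact hardware procedure; the function names are illustrative. Trained on AND, the perceptron reaches perfect accuracy; trained on XOR (two-input parity), it cannot, because no single line separates the XOR classes.

```python
def step(z):
    """Threshold activation: 1 if z >= 0, else 0."""
    return 1 if z >= 0 else 0

def predict(w, b, x):
    return step(b + sum(wi * xi for wi, xi in zip(w, x)))

def train_perceptron(samples, epochs=20, lr=1.0):
    """Perceptron rule: after each example, w <- w + lr * (target - output) * x."""
    w = [0.0] * len(samples[0][0])
    b = 0.0
    for _ in range(epochs):
        for x, target in samples:
            err = target - predict(w, b, x)  # 0 when correct, +1 or -1 when wrong
            w = [wi + lr * err * xi for wi, xi in zip(w, x)]
            b += lr * err
    return w, b

def accuracy(w, b, samples):
    return sum(predict(w, b, x) == t for x, t in samples) / len(samples)

AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

w, b = train_perceptron(AND_DATA)
print(accuracy(w, b, AND_DATA))  # 1.0: AND is linearly separable
w, b = train_perceptron(XOR_DATA)
print(accuracy(w, b, XOR_DATA))  # below 1.0: XOR is not linearly separable
```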

Artificial Neural Networks and Representation Learning

- This killed off a lot of research on neural networks until three advances:

- In the connectionism era, Rumelhart et al (1986) developed and popularized the backpropagation algorithm.

- Models of recurrent networks were developed by Hochreiter (1991), Bengio et al (1994), and Hochreiter and Schmidhuber (1997).

- LeCun et al (1998), inspired by the visual system in the brain, showed how sharing weights across image positions (convolutional networks) reduces the number of weights needed to recognize features, and developed the first multi-layer, deep neural network for handwriting recognition.

- Lack of fast enough hardware and lack of data sets of sufficient size prevented this deep neural network approach from advancing until the mid-2000s.
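Here is a minimal sketch of backpropagation in Python, assuming a network with one hidden layer of four sigmoid units, squared-error loss, and full-batch gradient descent. All of these choices, and the helper names, are illustrative and not taken from Rumelhart et al. Adding the hidden layer is what lets the network represent XOR, which a single perceptron cannot. Whether training actually reaches the XOR solution depends on the random initialization, since small networks can get stuck in local minima, so the printed outputs should be checked rather than assumed.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# The XOR (two-input parity) training set.
XOR = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]

def init_params(n_hidden=4, seed=0):
    """Small random weights for a 2-input, n_hidden-unit, 1-output network."""
    rng = random.Random(seed)
    return {
        "W1": [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(n_hidden)],
        "b1": [0.0] * n_hidden,
        "W2": [rng.uniform(-1, 1) for _ in range(n_hidden)],
        "b2": 0.0,
    }

def forward(p, x):
    """Return (hidden activations, output) for one input pair x."""
    h = [sigmoid(row[0] * x[0] + row[1] * x[1] + b) for row, b in zip(p["W1"], p["b1"])]
    y = sigmoid(sum(w * hj for w, hj in zip(p["W2"], h)) + p["b2"])
    return h, y

def loss(p, data):
    """Mean squared error, scaled by 1/2 to simplify the derivative."""
    return sum((forward(p, x)[1] - t) ** 2 for x, t in data) / (2 * len(data))

def gradients(p, data):
    """Backpropagation: push dLoss/dz from the output layer back to W1."""
    n_hidden = len(p["W2"])
    g = {"W1": [[0.0, 0.0] for _ in range(n_hidden)], "b1": [0.0] * n_hidden,
         "W2": [0.0] * n_hidden, "b2": 0.0}
    for x, t in data:
        h, y = forward(p, x)
        delta_out = (y - t) * y * (1 - y) / len(data)  # dLoss/d(output pre-activation)
        g["b2"] += delta_out
        for j in range(n_hidden):
            g["W2"][j] += delta_out * h[j]
            # Chain rule through W2[j] and the hidden unit's sigmoid.
            delta_h = delta_out * p["W2"][j] * h[j] * (1 - h[j])
            g["b1"][j] += delta_h
            g["W1"][j][0] += delta_h * x[0]
            g["W1"][j][1] += delta_h * x[1]
    return g

def train(p, data, lr=2.0, epochs=5000):
    """Plain full-batch gradient descent; updates p in place and returns it."""
    for _ in range(epochs):
        g = gradients(p, data)
        p["b2"] -= lr * g["b2"]
        for j in range(len(p["W2"])):
            p["W2"][j] -= lr * g["W2"][j]
            p["b1"][j] -= lr * g["b1"][j]
            p["W1"][j][0] -= lr * g["W1"][j][0]
            p["W1"][j][1] -= lr * g["W1"][j][1]
    return p

params = init_params()
print("loss before:", loss(params, XOR))
train(params, XOR)
print("loss after:", loss(params, XOR))
for x, t in XOR:
    print(x, t, round(forward(params, x)[1], 3))
```

A finite-difference check, comparing an entry of `gradients` with (loss(w + eps) - loss(w - eps)) / (2 * eps), is a simple way to confirm that a hand-written backward pass is correct.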

Deep Neural Network Era

- In the meantime, other approaches to machine learning such as support vector machines (SVMs) became popular. These use the kernel trick (basically, a hand-coded "good representation") to avoid the limitations of single perceptrons.

- By the mid-2000s, the deep learning era began as both hardware and data set size caught up, so that deep neural networks could be trained in a reasonable time.

- Whereas LeCun's network might have involved thousands of neurons, the largest networks trained as of 2014 (such as GoogLeNet) have around 10 million neurons, each with on the order of a few hundred connections to other neurons.

- The MNIST data set used by LeCun et al has 70,000 images (60,000 for training and 10,000 for testing), whereas the 2011 Street View House Numbers (SVHN) data set has over 600,000 digit images.

- Trained deep neural networks have been used to halve the error rate of speech recognition systems (Dahl 2010) and to improve image recognition (Krizhevsky, 2010) and pedestrian detection (Sermanet et al 2013).

- Deep neural networks have been trained to superhuman performance on a variety of tasks, such as traffic sign classification (Ciresan et al 2012) and playing a number of Atari video games (Mnih et al 2015).
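The kernel trick mentioned above for SVMs can be illustrated without an SVM. This sketch assumes a degree-2 polynomial kernel on 2-D inputs and checks that it equals an ordinary dot product in a hand-designed six-dimensional feature space; the helpers `poly_kernel` and `phi` are illustrative names. In that space XOR becomes linearly separable, which is the sense in which a kernel supplies a hand-coded "good representation".

```python
import math

def poly_kernel(x, z):
    """Degree-2 polynomial kernel k(x, z) = (x . z + 1)^2 for 2-D inputs."""
    return (x[0] * z[0] + x[1] * z[1] + 1) ** 2

def phi(x):
    """Explicit feature map whose dot product equals poly_kernel."""
    r2 = math.sqrt(2)
    x1, x2 = x
    return [1.0, r2 * x1, r2 * x2, x1 * x1, x2 * x2, r2 * x1 * x2]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

x, z = (0.5, -1.0), (2.0, 3.0)
print(poly_kernel(x, z), dot(phi(x), phi(z)))  # equal up to floating-point rounding

# In phi-space XOR is linearly separable: this weight vector computes
# x1 + x2 - 2*x1*x2, which is exactly XOR on {0, 1} inputs.
r2 = math.sqrt(2)
w = [0.0, 1 / r2, 1 / r2, 0.0, 0.0, -r2]
for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, int(dot(w, phi((a, b))) > 0.5))
```

An SVM never builds `phi` explicitly; it only evaluates `poly_kernel`, which is what makes very high-dimensional feature spaces affordable.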

Let's learn how to build deep neural networks!

- This semester we are going to learn how to design and train multi-layer neural networks to solve interesting problems.

- We will learn not only how to code neural networks but also why neural networks, and particular arrangements of them, are a useful model for learning.

- To start, I want to discuss the question: what is learning?

- I also want to discuss why neural networks are a good fit for many learning tasks (Wednesday).

- As I do this, I will try to review some of the math we will need this semester.