Outline

- More Physiology and Perception

- Geometric Models

- In-Class Exercise

Introduction

- On Monday, we finished our survey of VR Hardware and VR Software.

- We then started talking about how physiology and perception affect the creation of VR systems.

- We talked about how the study of optical illusions shows that the mind carries out complex calculations in order to figure out depth, color, etc.

- These computations require energy, so contravening the mind's usual visual expectations can make a VR user tired.

- We gave a classification of the senses, and talked about the kind of receptors for each sense.

- We looked at the size of the brains of various animals as well as the total number of synaptic connections that human brains have.

- Finally, we talked about hierarchical processing in the brain, from lower-level to higher-level feature detection.

- We start today with one additional kind of sense, then discuss sense fusion and psychophysics.

Proprioception

- In addition to the standard senses and memory, humans also have a sense of proprioception.

- Proprioception is the ability to sense the relative positions of parts of our bodies and the amount of muscular effort involved in moving them.

- In engineering, the corresponding robot sensors are encoders on joints or wheels, which indicate how far those joints or wheels have moved.

- The motor cortex in the human brain controls body motion.

- It sends signals called efference copies to other parts of the brain to indicate what motions have occurred.

- Proprioception helps explain phenomena such as why you cannot tickle yourself: part of tickling involves not having efference copies of where a touch is about to occur.

- Much of how the brain determines and interprets proprioception has been worked out through studies of phenomena such as phantom limbs and proprioceptive illusions like the Pinocchio illusion.

Fusion of Senses

- Signals from our senses including proprioception are constantly being processed and combined with our experiences by our neural structures throughout our lives.

- Interfering with the standard process of this fusion is likely to cause a mismatch between our senses and the data stored in the brain.

- The brain reacts to these mismatches in a variety of ways:

  - If the brain is not consciously aware of the mismatch, the mismatch may cause fatigue, headache, dizziness, or nausea.

  - If the brain is consciously aware of the mismatch, the user realizes that the experience is artificial. This is the case where the VR experience fails to convince the user that they are present in a virtual world.

- To avoid mismatches, and make an effective and comfortable VR experience, it is essential to do human trials.

- One source of mismatches in the VR setting is vection, which is the illusion of self-motion.

- Vection can occur when visual inputs suggest accelerating motion while your balance sense reports that you are motionless.

- Later in the semester, we will discuss strategies to avoid vection, such as reducing the field of view, raising the perspective, reducing the duration of the mismatch, and reducing scene complexity to speed up refresh rates.

Sensory Adaptation

- Sensory adaptation is when the perceived effect of a stimulus changes over time.

- For example, the perceived loudness of a motor may decrease the longer you are exposed to it.

- Over longer periods of time, perceptual training can lead to adaptation.

- A person who has been exposed to first-person VR environments for longer tends to experience less vection than someone with less exposure.

- This means a VR environment should not be tested on someone already experienced with it or with similar VR environments; that is, test it on people other than the VR developers.

- On the other hand, training allows a developer to see flaws in a VR environment that might be otherwise unobserved by an "untrained eye."

- Some examples of things better detected with experience are:

  - Tracking latency that interferes with the perception of stationarity.

  - Left-right eye swap.

  - Objects shown to one eye but not the other.

  - One eye receiving inputs with more latency than the other.

  - Straight lines that appear curved because of uncorrected warping in the optical system.

Psychophysics

- Psychophysics is the scientific study of perceptual phenomena that are produced by physical stimuli.

- For example, under what conditions would someone call an object red, sour, or ticklish?

- When would someone call one thing straighter, louder, or larger than another?

- As a sensory parameter such as the frequency of light is varied, there is usually a range of values for which subjects cannot reliably classify the phenomenon.

- For example, there is a range of values for which subjects cannot reliably say whether a color is red. This range can also vary between participants, for example because of tetrachromacy.

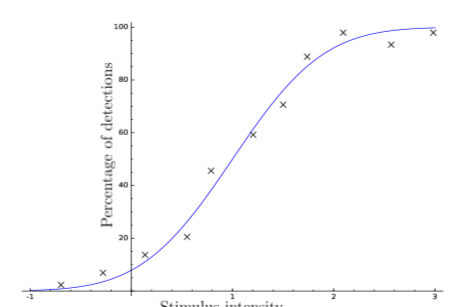

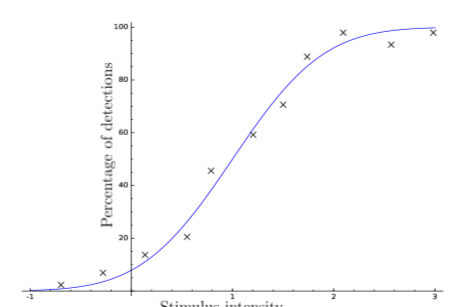

- Measuring how consistently a physical phenomenon is detected yields a psychometric function such as the curve above.

- We will later consider how to design such tests in the VR setting.
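A psychometric function of the kind described above can be sketched in a few lines. The logistic shape, the function names, and the `threshold` and `slope` parameters are assumptions made for illustration (a logistic curve is one common modeling choice, not necessarily the curve plotted above): the detection probability is 0.5 at `threshold`, and `slope` controls how wide the range of unreliable classification is.

```python
import math


def psychometric(stimulus: float, threshold: float, slope: float) -> float:
    """Probability that a subject reports detecting the stimulus.

    Assumes a logistic psychometric function (hypothetical model):
    the probability is exactly 0.5 at `threshold`, and a larger `slope`
    makes the transition from "rarely detected" to "almost always
    detected" sharper.
    """
    return 1.0 / (1.0 + math.exp(-slope * (stimulus - threshold)))


def fraction_detected(responses: list[bool]) -> float:
    """Empirical detection rate from yes/no responses at one stimulus level."""
    return sum(responses) / len(responses)


if __name__ == "__main__":
    # Hypothetical parameters, chosen only to show the shape of the curve.
    threshold, slope = 10.0, 1.5
    for s in (6.0, 8.0, 10.0, 12.0, 14.0):
        print(f"stimulus={s}: P(detected)={psychometric(s, threshold, slope):.3f}")
```

In a real test, `fraction_detected` would be computed at several stimulus levels, and a curve like `psychometric` would then be fit to those empirical rates.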

Stevens' Power Law

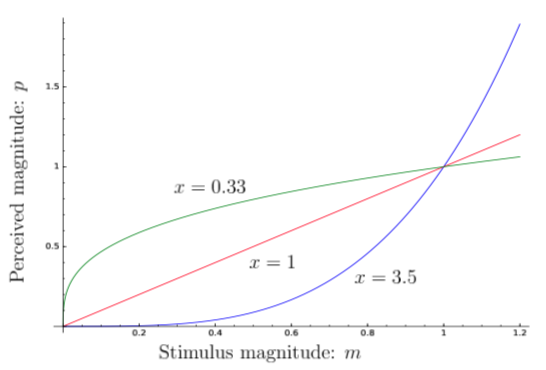

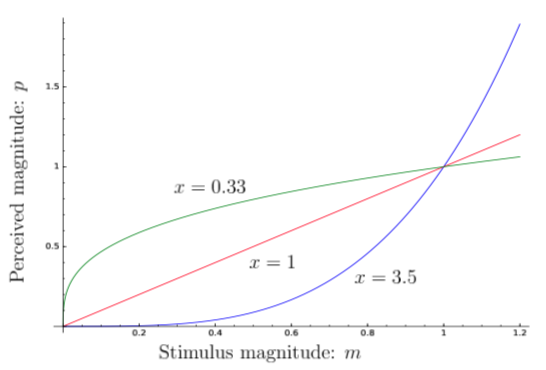

- Stevens' power law is a famous result from psychophysics that relates the magnitude of a physical stimulus to its perceived magnitude.

- According to the law, this relationship is:

`p=cm^x`

where `m` is the magnitude or intensity of the stimulus, `p` is its perceived magnitude, and `c` and `x` are constants (with `x` usually the more influential of the two).

- The values of `c` and `x` depend on the kind of stimulus. For example, `x` is larger for electric shock than for brightness.

- Only when `x=1` and `c=1` does the perceived magnitude equal the physical magnitude (`p=m`).
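A minimal sketch of the power law, using hypothetical function names and illustrative (not measured) exponents. It also shows one consequence of the formula: doubling the stimulus multiplies the perceived magnitude by `2^x`, regardless of `c` or `m`, which is one way to see why `x` tends to matter more than `c`.

```python
def perceived_magnitude(m: float, c: float, x: float) -> float:
    """Stevens' power law: perceived magnitude p = c * m**x."""
    return c * m ** x


def doubling_factor(x: float) -> float:
    """Factor by which p grows when the stimulus magnitude m doubles.

    p(2m) / p(m) = c * (2m)**x / (c * m**x) = 2**x, independent of c and m.
    """
    return 2.0 ** x


if __name__ == "__main__":
    # Illustrative exponents only: x < 1 compresses, x > 1 expands.
    for x in (0.5, 1.0, 3.0):
        print(f"x={x}: doubling the stimulus scales perception by {doubling_factor(x):.2f}")
```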

Just Noticeable Difference

- The Just Noticeable Difference (JND) is the amount by which a stimulus must be changed so that subjects perceive the change in at least 50% of trials.

- Some kinds of stimulus, such as brightness, have been studied extensively, and their JND has been quantified in an equation.

- For brightness, there is an experimentally determined law, called Weber's Law, which says:

`(\Delta m)/m =c`,

that is, the ratio of the brightness JND, `\Delta m`, to the stimulus magnitude `m` is a constant `c`. Equivalently, the JND grows in proportion to `m`.
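A small sketch of what Weber's law predicts, assuming hypothetical function names and an illustrative Weber fraction `c = 0.1` (not a measured value for brightness). Because the JND is proportional to `m`, the same absolute change can be noticeable for a weak stimulus and unnoticeable for a strong one. Treating any change of at least one JND as "noticed" is a simplification, since the JND is defined by a 50% detection rate.

```python
def jnd(m: float, c: float) -> float:
    """Weber's law: (Delta m) / m = c, so the JND at magnitude m is c * m."""
    return c * m


def is_noticeable(m: float, new_m: float, c: float) -> bool:
    """Predict (in the 50%-of-trials sense) whether changing m to new_m is noticed."""
    return abs(new_m - m) >= jnd(m, c)


if __name__ == "__main__":
    c = 0.1  # hypothetical Weber fraction, for illustration only
    for m in (10.0, 100.0, 1000.0):
        print(f"m={m}: JND={jnd(m, c):.1f}, +5 noticed? {is_noticeable(m, m + 5.0, c)}")
```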

The Geometry of Virtual Worlds

- Over the last week, we have been discussing Virtual World Generators (VWGs). Among their functions, VWGs maintain the geometry and physics of a virtual world.

- We now cover the geometry.

- This includes how to describe models that we may put in our scenes, such as buildings and furniture, and the mathematical transformations needed to manipulate them.

- We are also going to discuss scene rendering.

- Learning these things lets us develop VR environments at a lower level and in a more robust way.

Geometric Models

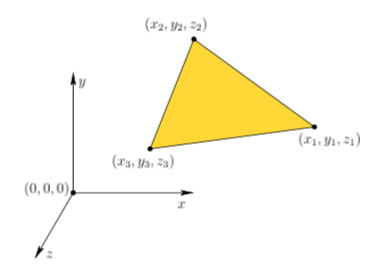

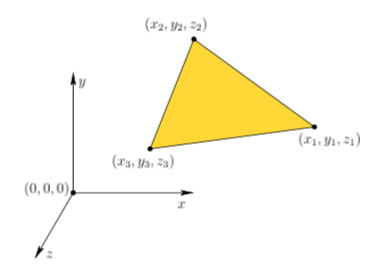

- Typically, a VR environment can be imagined as existing in some three-dimensional space, with points `(x,y,z)` taken from `RR^3`.

- In many settings (including the book and physics), such triples are plotted using a right-handed coordinate system, as in the diagram above, where the arrows indicate the direction of increasing `x`, `y`, or `z` values.

- In computer graphics, a left-handed coordinate system where `z` points in the opposite direction is often used.

- Geometric models are surfaces or solid regions in `RR^3`.

- Since a model may contain infinitely many points, it is usually defined in terms of primitives which can be specified in a finite way.

- The 3D triangle is perhaps the simplest such primitive.

- A planar surface patch consisting of all points "inside" and on the boundary of a triangle can be fully specified by the coordinates of the triangle's vertices:

`((x_1, y_1, z_1), (x_2, y_2, z_2), (x_3, y_3, z_3))`.
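To see why three vertices are enough, note that every point of the patch is a weighted average of the vertices with non-negative weights that sum to 1 (its barycentric coordinates). A minimal sketch, with hypothetical names:

```python
from typing import Tuple

Point3 = Tuple[float, float, float]
Triangle = Tuple[Point3, Point3, Point3]


def point_on_patch(tri: Triangle, a: float, b: float) -> Point3:
    """Return a*p1 + b*p2 + (1 - a - b)*p3 for the triangle's vertices p1, p2, p3.

    Every point inside or on the boundary of the triangle arises from some
    weights a >= 0, b >= 0 with a + b <= 1 (barycentric coordinates).
    """
    if a < 0 or b < 0 or a + b > 1:
        raise ValueError("weights must satisfy a >= 0, b >= 0 and a + b <= 1")
    p1, p2, p3 = tri
    c = 1.0 - a - b
    # Combine the vertices coordinate by coordinate (x, then y, then z).
    return tuple(a * u + b * v + c * w for u, v, w in zip(p1, p2, p3))


if __name__ == "__main__":
    tri = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
    print(point_on_patch(tri, 1 / 3, 1 / 3))  # approximately the centroid
```

Setting one weight to 1 recovers a vertex, and setting one weight to 0 gives a point on the opposite edge.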

In-Class Exercise

- Come up with an algorithm to determine if a point is on the boundary of a triangle.

- Come up with an algorithm to determine if a point is in the interior of a 2D triangle.

- Post your solutions to the Feb 13 In-Class Exercise Thread.
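For reference after attempting the exercise, here is one possible approach for the 2D case (not the only one, and not necessarily the intended answer). It uses the sign of a 2D cross product to decide which side of each edge the point lies on: the point is interior if it is strictly on the same side of all three edges, on the boundary if it lies on an edge line without being strictly outside any edge, and exterior otherwise. The names are hypothetical, the triangle is assumed non-degenerate, and the exact comparisons with zero assume exact (e.g., integer) coordinates; with floating-point inputs a small tolerance would be needed.

```python
from typing import Tuple

Point2 = Tuple[float, float]


def cross(o: Point2, a: Point2, b: Point2) -> float:
    """z-component of (a - o) x (b - o).

    Positive if b is to the left of the directed line o -> a,
    negative if to the right, and zero if the three points are collinear.
    """
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def classify(p: Point2, t1: Point2, t2: Point2, t3: Point2) -> str:
    """Return 'interior', 'boundary', or 'exterior' for point p and triangle t1 t2 t3."""
    d1 = cross(t1, t2, p)
    d2 = cross(t2, t3, p)
    d3 = cross(t3, t1, p)
    has_neg = d1 < 0 or d2 < 0 or d3 < 0
    has_pos = d1 > 0 or d2 > 0 or d3 > 0
    # Opposite signs: p is strictly outside at least one edge.
    if has_neg and has_pos:
        return "exterior"
    # Not outside any edge, but on at least one edge line: an edge or a vertex.
    if d1 == 0 or d2 == 0 or d3 == 0:
        return "boundary"
    return "interior"


if __name__ == "__main__":
    tri = ((0, 0), (4, 0), (0, 4))
    for p in ((1, 1), (2, 2), (0, 0), (3, 3), (5, 0)):
        print(p, classify(p, *tri))
```

Because only the pattern of signs matters, the test works whether the vertices are listed clockwise or counterclockwise.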

Meshes

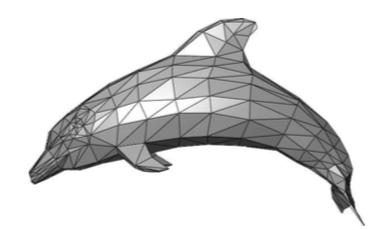

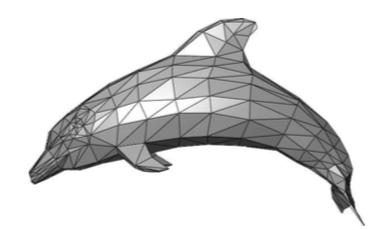

- To model a complicated object or body in the virtual world, numerous triangles can be arranged into a mesh, such as the dolphin in the figure above.

- Some important questions related to meshes are:

  - How do we specify how each triangle "looks" whenever viewed by a user in VR?

  - How do we make the object "move"?

  - If the object surface is sharply curved, then should we use curved primitives rather than triangle approximations?

  - Is the interior of the object part of the model, or does the object only consist of its surface?

  - Is there an efficient algorithm for determining which triangles are adjacent to a given triangle along the surface?

  - Should we avoid duplicating vertex coordinates that are common to many neighboring triangles?
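The last two questions are often addressed with an indexed mesh: each vertex is stored once in a shared list, each triangle stores three indices into that list, and a map from edges to triangles answers adjacency queries. A minimal sketch of that common approach, with hypothetical names (not necessarily the representation the book uses):

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, int, int]  # indices into the shared vertex list
Edge = Tuple[int, int]       # undirected edge stored as (smaller, larger) index


def edge_key(u: int, v: int) -> Edge:
    """Order the endpoints so (u, v) and (v, u) name the same edge."""
    return (min(u, v), max(u, v))


def build_edge_map(faces: List[Face]) -> Dict[Edge, List[int]]:
    """Map each undirected edge to the indices of the faces that use it."""
    edges: Dict[Edge, List[int]] = defaultdict(list)
    for f_idx, (a, b, c) in enumerate(faces):
        for u, v in ((a, b), (b, c), (c, a)):
            edges[edge_key(u, v)].append(f_idx)
    return edges


def neighbors(face_idx: int, faces: List[Face], edges: Dict[Edge, List[int]]) -> Set[int]:
    """Indices of the faces that share an edge with faces[face_idx]."""
    a, b, c = faces[face_idx]
    result: Set[int] = set()
    for u, v in ((a, b), (b, c), (c, a)):
        for other in edges[edge_key(u, v)]:
            if other != face_idx:
                result.add(other)
    return result


if __name__ == "__main__":
    # A unit square split into two triangles: 4 shared vertices instead of 6 copies.
    vertices: List[Vertex] = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)]
    faces: List[Face] = [(0, 1, 2), (0, 2, 3)]
    edges = build_edge_map(faces)
    print(len(vertices), neighbors(0, faces, edges))
```

Building the edge map takes one pass over the triangles; after that, each adjacency query only looks up the three edges of the given triangle.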