Outline

- Integrated Services

- In-Class Exercise

- Differentiated Services

Introduction

- On Monday, we began talking about Quality of Service (QoS).

- We said applications that are sensitive to the timeliness of data (such as audio or video apps) are called real-time applications.

- To handle real-time applications, we need a new service model in which applications that need stronger assurances about how the network will perform can ask the network for them. The network can then respond with the level of assurance it can promise to provide.

- In such a model, the network will treat some packets differently than others. A network that supports different levels of service is said to support quality of service (QoS).

- We looked at different types of applications with respect to service needed:

- elastic (stretch gracefully in the face of increased delay) versus real-time,

- intolerant (to occasional data delay) versus tolerant,

- nonadaptive (to change in network service) versus adaptive, and finally,

- rate adaptive (can handle changes in bps by doing things like reduce image quality) versus delay-adaptive (handles change in network circumstance by using a larger buffer).

- We distinguished fine-grained QoS approaches, which provide QoS to individual applications (for example, Integrated Services), from coarse-grained approaches, which provide QoS to large classes of data or aggregated data (for example, Differentiated Services).

- We said that Integrated Services (aka IntServ) refers to a body of work produced by the IETF around 1995-1997.

- It consists of specifications for a number of service classes for different common application types, as well as RSVP (the Resource Reservation Protocol), which is used to make reservations for these service classes.

- We begin today by looking at this in more detail.

Service Classes

- The basic split in service classes in Integrated Services is between tolerant and intolerant services:

- The guaranteed service class guarantees the maximum delay that a packet will experience is at most a fixed, specified value. Early arrival for the guaranteed service is handled by buffering.

- The controlled load service is used for tolerant adaptive applications. It tries to emulate a lightly loaded network for applications requesting the service, even though the network as a whole might be heavily loaded. To do this, it uses a queuing mechanism such as weighted fair queuing (WFQ) to isolate the controlled traffic from the other traffic. It also uses some form of admission control to limit the total amount of controlled traffic on a link.

- Let's look at the mechanisms used to implement these classes...

Mechanisms to Implement Service Classes

- To say we want a given service class, we need a mechanism for an application to give the network qualitative information such as "use a controlled load service" or quantitative information such as "I need a maximum delay of 100ms."

- The information we provide to the network about the service we want and the traffic we will send is called the flowspec.

- Given a flowspec, the network needs to determine if it can provide that service class. For example, if each of 10 users asks for 2Mbps of consistent link capacity, but the network only has 10Mbps overall, then the network is not able to satisfy everyone's request.

- The process of deciding when to say no is called admission control.

- The communication between the users of a network and the components of the network about flowspec requests and admission control decisions is called signaling or resource reservation.

- The management of the way packets are queued and scheduled for transmission in routers and switches to meet flow requirements is called packet scheduling.

- We now look at more of the details of flowspecs, admission control, resource reservation, and packet scheduling...

Flowspecs

- A flowspec has two parts:

- the flow's traffic characteristics (TSpec).

- the service requested from the network (RSpec).

- The setting of RSpec is service specific. To request a controlled load service, the application just requests controlled load service. For a guaranteed service, you could specify a delay target or bound.

- For TSpec, the application needs to give enough information about the bandwidth used by the flow to allow intelligent admission control decisions to be made.

- One way to describe the bandwidth characteristics is to specify a token bucket filter.

- A token bucket filter has two parameters: the token rate `r` and the bucket depth `B`.

- If such a filter is in use, to send a byte, one needs a token; to send `n` bytes, one needs `n` tokens. The system starts with 0 tokens and accumulates `r` tokens/second. The bucket is allowed a maximum of `B` tokens (the depth).

- If a flow's traffic were governed by such a filter, it could send bursts of `B` bytes into the network as fast as it wants, but its average rate of sending over sufficiently long intervals is `r`.

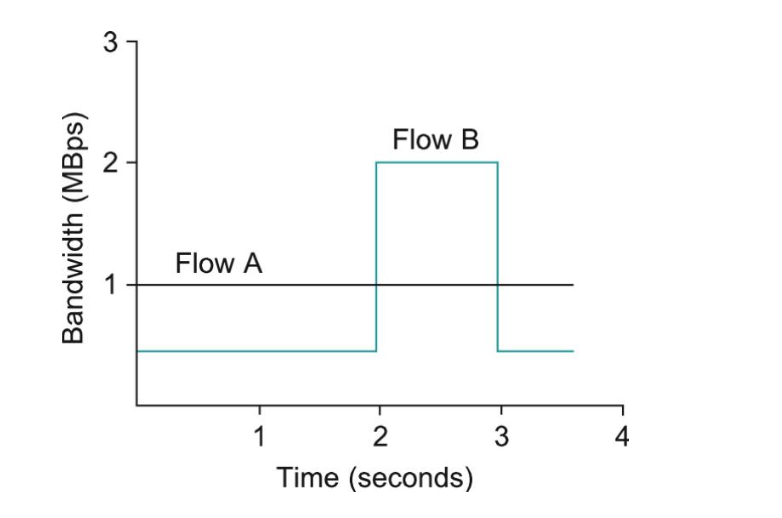

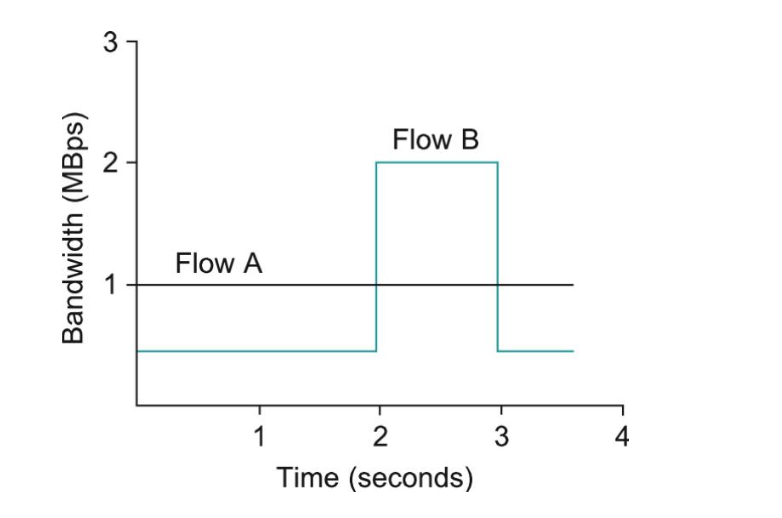

- As an example, in the above image, Flow A (black line) has a rate of 1MBps and a bucket depth of 1 token.

- Flow B (cyan) also sends on average at 1MBps, by sending at 0.5MBps for 2 seconds and then at 2MBps for 1 second.

- In order to do this, it needs a bucket depth of at least 1 million tokens so that it can accumulate 1M tokens during the first 2 seconds where it is transmitting at 0.5MBps.
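
The Flow A and Flow B figures above can be checked with a small simulation. This is only a sketch under simplifying assumptions: the function name `conforms` is made up for illustration, time is divided into one-second intervals, and the flow sends at a constant rate within each interval (a fluid model rather than individual packets).

```python
def conforms(send_rates, token_rate, depth):
    """Return True if a flow never needs more tokens than the bucket holds.

    send_rates: bytes sent during each one-second interval (illustrative input)
    token_rate: tokens (bytes) added to the bucket per second, r
    depth:      maximum number of tokens the bucket can hold, B
    The bucket starts with 0 tokens, as in the notes.
    """
    tokens = 0
    for rate in send_rates:
        # With constant rates inside an interval the token count changes
        # linearly, so it is lowest at one of the interval's endpoints.
        tokens += token_rate - rate
        if tokens < 0:
            return False          # the flow tried to spend tokens it lacks
        tokens = min(tokens, depth)  # tokens beyond the depth are discarded
    return True


MB = 1_000_000
flow_a = [1 * MB, 1 * MB, 1 * MB]        # steady 1 MBps
flow_b = [MB // 2, MB // 2, 2 * MB]      # 0.5 MBps twice, then 2 MBps

print(conforms(flow_a, token_rate=MB, depth=1))        # Flow A, depth 1
print(conforms(flow_b, token_rate=MB, depth=MB))       # Flow B, depth 1M
print(conforms(flow_b, token_rate=MB, depth=MB // 2))  # Flow B, depth too small
```

With a depth of 1 million tokens, Flow B saves 0.5M tokens in each of its first two seconds and spends the full 1M during its burst. With a smaller depth, some of those saved tokens are discarded and the burst no longer conforms.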

In-Class Exercise

- Suppose we have a sawtooth pattern flow: at time `t=0` the data rate is 1MBps, and this increases linearly to 3MBps by time `t=1`. At `t=1` the rate drops back to 1MBps and the pattern repeats.

- What is the average rate of this flow?

- What would be a sufficient bucket depth in tokens needed to handle it?

- Please post your solutions to the Apr 26 In-Class Exercise Thread.

Admission Control

- Admission control looks at the RSpec and TSpec and tries to decide if the desired service can be provided, given the currently available resources, without causing any previously admitted flow to receive worse service than it requested.

- For guaranteed service, it is possible to use fixed, well-understood algorithms (especially if weighted fair queuing is used) to make a yes/no decision.

- For controlled load services, the decision is often based on heuristics such as "the last time I allowed a flow with this TSpec, the delays for the class exceeded the acceptable bound, so I'd better say no" or "my current delays are so far inside the bounds that I should be able to admit another flow without difficulty."

- Admission control is not policing. The former makes per-flow decisions about whether to admit a flow; the latter makes per-packet decisions about whether the flow conforms to the TSpec of its reservation.

- If a flow does not conform to its TSpec (say, it is sending twice as many bytes as it said it would), then a policing policy might drop packets, or it might check whether the higher rate is interfering with other traffic and, if not, allow the packets to be forwarded.

- In general, admission control is closely related to policy:

- A network admin might wish to allow traffic reservations from a CEO, while disallowing the same kinds of reservations from lowly employee plebs (a policy decision).

- On the other hand, the CEO's request still might fail if the requested resources are not available (an admission control decision).
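
The difference between a policy decision and an admission control decision can be sketched in a few lines. This is a deliberately simplistic model, not how a real router decides: it assumes each flow asks for a single constant rate and that the link admits flows until the sum of those rates reaches its capacity. The class name `Link`, the user names, and the returned strings are all hypothetical.

```python
class Link:
    """Tracks reserved bandwidth on one link (all rates in bits per second)."""

    def __init__(self, capacity_bps, allowed_users):
        self.capacity_bps = capacity_bps
        self.allowed_users = set(allowed_users)  # policy: who may reserve at all
        self.reserved_bps = 0

    def request(self, user, rate_bps):
        # Policy decision: is this user permitted to make reservations?
        if user not in self.allowed_users:
            return "denied by policy"
        # Admission control decision: would admitting this flow overcommit
        # the link and degrade flows that were already admitted?
        if self.reserved_bps + rate_bps > self.capacity_bps:
            return "denied by admission control"
        self.reserved_bps += rate_bps
        return "admitted"


link = Link(capacity_bps=10_000_000, allowed_users={"ceo"})
for _ in range(6):
    print(link.request("ceo", 2_000_000))
print(link.request("employee", 2_000_000))
```

Using the earlier numbers (2Mbps requests on a 10Mbps network), the first five requests fit and the sixth is refused for lack of resources, even though the requester passes the policy check.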

Resource Reservation

- By and large, the Internet is a connectionless network; that is, we don't usually set up virtual circuits between end hosts.

- To ensure the quality of service needed for real-time applications, we need to provide a lot more information to our network.

- RSVP tries to do this while still maintaining the underlying connectionless Internet's robustness to crashes and reboots of routers, switches, etc.

- To do this, RSVP uses the idea of a soft state in a router with regard to a flow. Unlike a hard state, such a state does not need to be explicitly deleted (as might need to be done for a virtual circuit). Instead, it times out after some fairly short period if it is not periodically refreshed.

- RSVP supports multicast flows in which each receiver, rather than the sender, specifies its own requirements. It does the same for unicast flows (but in that case there is only one receiver). This is called a receiver-oriented approach to QoS.

- Each receiver periodically sends refresh messages to keep the soft state in place, and can also ask for a new level of resources.

- In the event of a host crash, resources allocated by that host will naturally time out and be released.
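
The soft-state idea can be sketched as a table of expiry times. This is a minimal illustration, not RSVP's actual data structure: the class `SoftStateTable` is hypothetical, the 90-second lifetime is an arbitrary illustrative value, and time is passed in explicitly so the behavior is easy to follow.

```python
class SoftStateTable:
    """Per-flow reservation state that disappears unless it is refreshed."""

    def __init__(self, lifetime):
        self.lifetime = lifetime   # seconds a reservation survives without refresh
        self.expires_at = {}       # flow id -> time at which the state times out

    def refresh(self, flow_id, now):
        # Installing a reservation and refreshing it are the same operation.
        self.expires_at[flow_id] = now + self.lifetime

    def expire(self, now):
        # Nobody sends an explicit delete: stale entries simply time out.
        stale = [f for f, t in self.expires_at.items() if t <= now]
        for f in stale:
            del self.expires_at[f]
        return stale


table = SoftStateTable(lifetime=90)
table.refresh("flow-A", now=0)
table.refresh("flow-B", now=0)
table.refresh("flow-A", now=30)   # A's receiver is alive and keeps refreshing
print(table.expire(now=90))       # B's receiver crashed, so only B is released
```

Because installation and refresh are the same message, a router that reboots and loses its table recovers the reservations from the next round of refreshes.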

Receiver Making a Reservation

- Two things need to happen for a receiver to make a reservation:

- The receiver needs to know what traffic the sender is likely to send so that it can make an appropriate reservation (sender's TSpec).

- The receiver needs to know the path the packets will follow from sender to receiver, so that it can establish a resource reservation at each router on the path.

- To accomplish both of these, the sender can just send a message (called a PATH message) to the receiver with the TSpec.

- So the receiver will get the TSpec. Also, along the path the message takes, each router determines the reverse path needed to send a reservation from the receiver back to the sender.

- The receiver then sends a RESV (reservation) message back up the multicast tree. This message contains the sender's TSpec and an RSpec, describing the requirements of this receiver.

- Each router along the reverse path looks at the reservation request and tries to allocate the resources needed to satisfy it. If all goes well, the correct allocation is installed at every router between the sender and the receiver. If not, an error message is returned to the receiver making the request.

- Then for as long as the receiver wants to retain the reservation, it sends the same RESV message about once every 30 seconds.

Router or Link Failure under RSVP

- In the event of a failure, routing protocols will adapt to the failure and create a new path from the sender to receiver.

- PATH messages are sent about every 30 seconds and may be sent sooner if a router detects a change in its forwarding table.

- So the first PATH message after the new route stabilizes will reach the receiver with this new path.

- The receiver's next RESV message will follow the new path, and if all goes well, establish a new reservation along this path.

- The routers that are no longer on the path will stop getting RESV messages, and so the associated reservations will time out and be released.

More on Reservations and Multicast

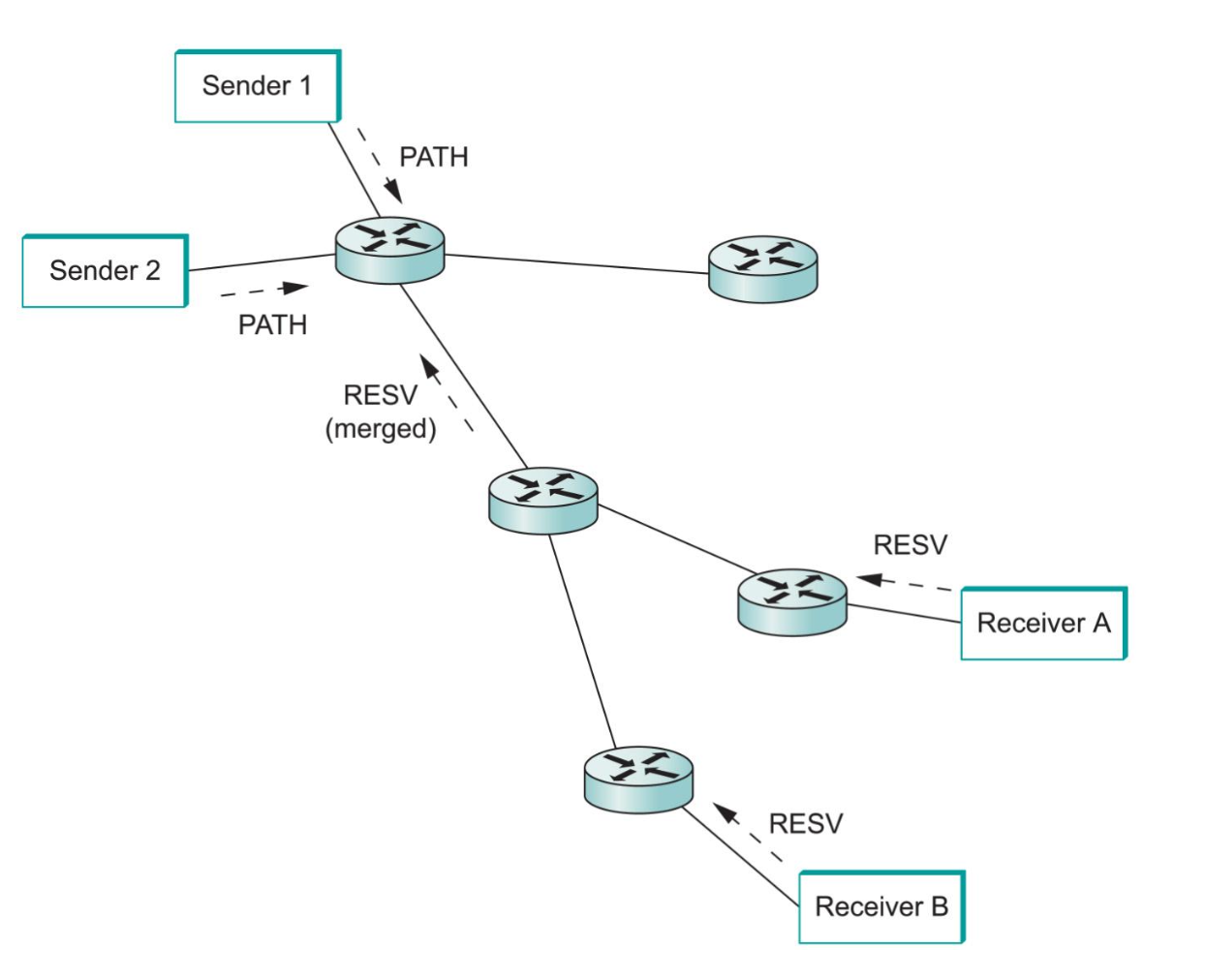

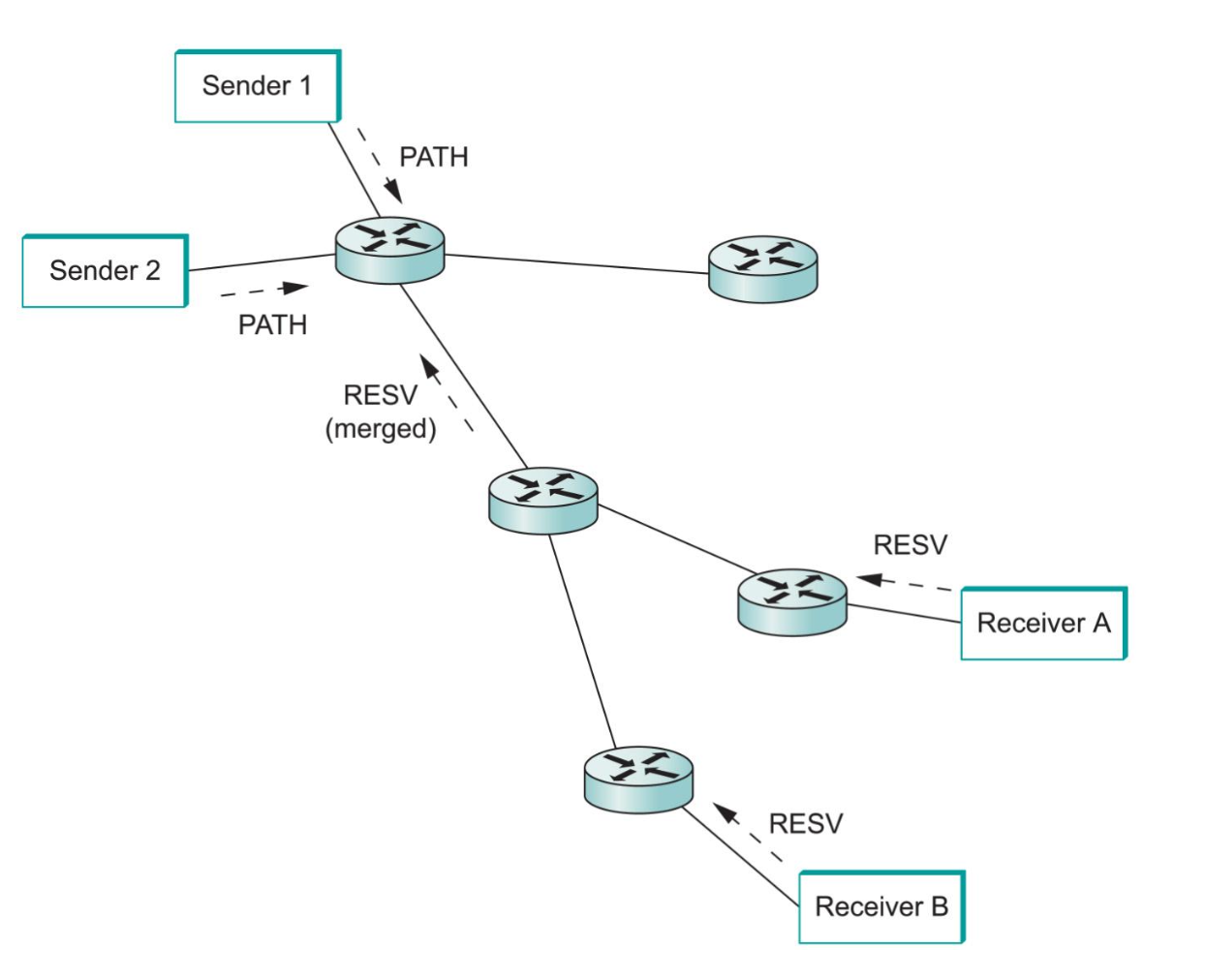

- The above diagram shows what can happen with RSVP when we have multiple receivers and senders.

- When we have multiple receivers, as a RESV message travels up the multicast tree, it is likely to hit nodes where some other receiver's reservation has already been established.

- It may be the case that the resources reserved upstream are already adequate to serve both receivers.

- For example, if A made a reservation asking for a guaranteed delay of less than 100ms and B asked for a delay of less than 200ms, then no new reservation is required.

- On the other hand, if B asked for a delay of 50ms, then the router first needs to check if it can accept the request, and if so, it would send the request upstream.

- The next time A made a reservation for 100ms, the router would not need to pass its reservation on, as an at-most 50ms delay had already been reserved.

- If there are multiple senders in the tree, receivers need to collect the TSpecs from all senders and make a reservation large enough to accommodate the traffic from all senders.

- This might not mean the TSpecs have to be added up. For example, in an audio conference call with 10 speakers, you don't need to allocate enough resources to carry 10 audio streams, since the result of 10 people speaking at once would be incomprehensible anyway. Instead, you might allocate just enough to accommodate at most two speakers at once.

- This example illustrates that how TSpecs should be combined is application specific.
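
The way a router merges delay requests from receivers A and B in the example above can be sketched as follows. This is an illustrative model only: the class `RouterReservation` is hypothetical, it considers a single delay bound per receiver, and it leaves out the local admission check and the case where the strictest receiver later leaves.

```python
class RouterReservation:
    """Merges delay-bound requests from downstream receivers at one router."""

    def __init__(self):
        self.delay_by_receiver = {}    # receiver -> requested delay bound (ms)
        self.upstream_bound_ms = None  # bound currently reserved upstream

    def request(self, receiver, delay_ms):
        """Record a request; return True if it must be forwarded upstream."""
        self.delay_by_receiver[receiver] = delay_ms
        strictest = min(self.delay_by_receiver.values())
        if self.upstream_bound_ms is None or strictest < self.upstream_bound_ms:
            # A tighter bound than anything reserved so far: pass it upstream.
            self.upstream_bound_ms = strictest
            return True
        # The existing upstream reservation already satisfies this receiver.
        return False


router = RouterReservation()
print(router.request("A", 100))  # first reservation, forwarded upstream
print(router.request("B", 200))  # covered by A's 100 ms reservation
print(router.request("B", 50))   # stricter than 100 ms, forwarded upstream
print(router.request("A", 100))  # covered by the 50 ms reservation
```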

Packet Classifying and Scheduling

- For routers to actually deliver the requested service to the data packets arriving along a reservation path, two things must be done:

- Associate each packet with the appropriate reservation so that it can be handled correctly. This is called packet classification.

- Manage the packets in the queues so that they receive the service that had been requested. This is called scheduling.

- To do the first part, a router examines five fields in the packet: source address, destination address, protocol number, source port, and destination port. (In IPv6, the FlowLabel field might instead be used to do the lookup on a single, shorter key.)

- Using this, the packet is placed in the appropriate class.

- After classification, the details of packet scheduling are not specified by the service model. This is so different implementors can do creative things to try to realize the service model more efficiently.

- For guaranteed services, it is common to put them into some kind of weighted fair queuing scheme.

- Each flow would get its own queue with a certain share of the link so as to provide an end-to-end delay bound that can be calculated.

- For controlled loads, simpler schemes are often used.

- For example, treat all controlled load traffic as a single aggregated flow with the weight for that flow in the weighted fair queue being set based on the total amount of traffic admitted as controlled flows.
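
Packet classification on the five header fields can be sketched as a dictionary lookup. This is a toy model under stated assumptions: the names `FiveTuple`, `classify`, and the class labels are hypothetical, the addresses come from documentation ranges, and a real router would use a specialized lookup structure rather than a Python dictionary.

```python
from collections import namedtuple

# The five header fields a router examines to classify a packet.
FiveTuple = namedtuple(
    "FiveTuple", "src_addr dst_addr protocol src_port dst_port"
)

BEST_EFFORT = "best-effort"


def classify(header, reservations):
    """Map a packet's five-tuple to its reservation class.

    Packets that match no reservation fall back to best-effort service.
    """
    return reservations.get(header, BEST_EFFORT)


UDP = 17  # IP protocol number for UDP
reservations = {
    FiveTuple("192.0.2.10", "198.51.100.7", UDP, 5004, 5004): "guaranteed",
    FiveTuple("192.0.2.11", "198.51.100.7", UDP, 6000, 6000): "controlled-load",
}

packet = FiveTuple("192.0.2.10", "198.51.100.7", UDP, 5004, 5004)
stranger = FiveTuple("192.0.2.99", "198.51.100.7", UDP, 1234, 80)
print(classify(packet, reservations))
print(classify(stranger, reservations))
```

After this lookup, the scheduler would place the packet in the queue that belongs to its class, for example a per-flow WFQ queue for guaranteed service or the shared aggregate queue for controlled load.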

Scalability Issues

- Many service providers are hesitant to deploy Integrated Services because of scalability issues.

- In the best-effort service model, routers store little or no state about particular flows.

- To deal with a larger internet, a router needs to deal with potentially more traffic and maybe a larger routing table of where to send stuff.

- If we use RSVP, then we also might need to have entries for every flow passing through a router.

- For example, suppose every flow on an OC-48 (2.5Gbps) link represents a 64-kbps audio stream.

- The number of such flows is:

`(2.5 × 10^9)/(64 × 10^3) ≈ 39,000`.

- Each such reservation needs some amount of state stored in memory and periodically refreshed.

- The router needs to classify, police, and queue each of those flows, make admission control decisions, etc.

- So looking at flows individually might cause scalability issues. Instead, we could try to group flows together. We next look at one such approach.
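
The flow count above, and the refresh load it implies, can be reproduced with a short calculation. The refresh figure is a back-of-the-envelope estimate that assumes every flow has one reservation refreshed once every 30 seconds, as described earlier for RESV messages; it ignores PATH messages and any per-flow memory cost.

```python
LINK_BPS = 2.5e9         # OC-48 link capacity used in the notes
FLOW_BPS = 64e3          # one 64-kbps audio stream
REFRESH_PERIOD_S = 30    # assumed RESV refresh interval per flow

flows = LINK_BPS / FLOW_BPS
refreshes_per_second = flows / REFRESH_PERIOD_S

print(f"flows on the link: {flows:,.0f}")
print(f"RESV refreshes per second: {refreshes_per_second:,.0f}")
```

So on top of classifying and queuing roughly 39,000 flows, the router would also process on the order of a thousand refresh messages every second just to keep its state alive.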

Differentiated Services (EF, AF)

- The Differentiated Service model allocates resources to a small number of classes of traffic rather than at the flow level.

- In the simplest set-up, one could imagine having "regular traffic" and "premium traffic."

- Rather than using a protocol like RSVP to tell all the routers that some flow is premium, one could let packets identify themselves as premium using a bit in the packet header (a 1 indicating premium).

- Two questions with this scheme need to be addressed:

- Who sets the premium bit and under what circumstances?

- What does a router do differently when it sees a packet with the bit set?

- A common approach to the first problem is to set the bit at an administrative boundary.

- For example, the router at the edge of an ISP's network might set the bit for packets arriving on an interface that connects to a particular company's network. It might do this because that company paid for a higher level of service.

- Even for that company, not all traffic need be marked as premium. The edge router might set a maximum rate (or total number) of the company's packets that are marked premium, and any additional packets are then grouped in with the regular traffic.

- Assuming packets have been marked in some way, the IETF has standardized a set of router behaviors to be applied to marked packets, called per-hop behaviors (PHBs).

- Because there is more than one new behavior in this spec, more than one bit is needed to tell a given router which one to apply.

- The IETF repurposed the old TOS byte in the IP header for these bits. Six bits of this byte are used for DiffServ code points (DSCPs), and each 6-bit code point identifies a particular PHB to be applied to the packet. The remaining two bits of the TOS byte are used for the ECN mechanism we described earlier.
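
The split of the old TOS byte into a 6-bit DSCP and a 2-bit ECN field can be sketched with a few bit operations. The function names `split_tos` and `make_tos` are made up for illustration; the value 46 (binary 101110) is the standard code point for expedited forwarding.

```python
EF_DSCP = 0b101110  # 46, the standard code point for expedited forwarding


def split_tos(tos_byte):
    """Split the 8-bit TOS byte into (DSCP, ECN)."""
    if not 0 <= tos_byte <= 0xFF:
        raise ValueError("TOS byte must fit in 8 bits")
    dscp = tos_byte >> 2    # upper six bits select the per-hop behavior
    ecn = tos_byte & 0b11   # lower two bits carry ECN
    return dscp, ecn


def make_tos(dscp, ecn=0):
    """Build the TOS byte from a 6-bit DSCP and a 2-bit ECN value."""
    if not 0 <= dscp < 64 or not 0 <= ecn < 4:
        raise ValueError("DSCP is 6 bits and ECN is 2 bits")
    return (dscp << 2) | ecn


tos = make_tos(EF_DSCP)
print(hex(tos))           # 0xb8
print(split_tos(tos))     # (46, 0)
```

An edge router that marks a packet only rewrites the upper six bits, which is why the ECN bits are masked separately here.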

The Expedited Forwarding PHB

- A packet marked for the Expedited Forwarding (EF) PHB should be forwarded by the router with minimal delay and loss.

- For the router to guarantee this, the arrival rate of EF packets to a router must be less than the rate at which the router can forward EF packets.

- For example, a 100Mbps interface needs to be sure that the arrival rate of EF packets destined for that interface never exceeds 100Mbps.

- In practice, the EF arrival rate should stay a little below this so that the interface occasionally has time to send other packets, such as routing updates.

- Rate limiting of EF packets is achieved by configuring the routers at the edge of an administrative domain to allow only a certain maximum rate of EF packet arrivals into the domain. A simple, conservative choice is to keep the total EF rate entering the domain below the bandwidth of the slowest link in the domain.

- This ensures that even in the worst case, where all EF packets converge on the slowest link, that link is not overloaded and can still forward the EF packets correctly.

- There are several possible implementations of EF behavior. One is to give EF packets strict priority over all other packets. Another is to use WFQ and set the weight of the EF class sufficiently high that all EF packets can be delivered quickly.
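
The strict-priority option can be sketched with two queues. This is a minimal illustration rather than a real router scheduler: the class `StrictPriorityScheduler` is hypothetical, packets are plain strings, and it relies on the edge routers to rate-limit EF traffic, since nothing here stops EF packets from starving the other queue.

```python
from collections import deque


class StrictPriorityScheduler:
    """Always transmits queued EF packets before any other packet."""

    def __init__(self):
        self.ef_queue = deque()
        self.other_queue = deque()

    def enqueue(self, packet, is_ef):
        (self.ef_queue if is_ef else self.other_queue).append(packet)

    def dequeue(self):
        # Other traffic is served only when no EF packet is waiting.
        if self.ef_queue:
            return self.ef_queue.popleft()
        if self.other_queue:
            return self.other_queue.popleft()
        return None  # nothing to send


sched = StrictPriorityScheduler()
sched.enqueue("data-1", is_ef=False)
sched.enqueue("voice-1", is_ef=True)
sched.enqueue("data-2", is_ef=False)
sched.enqueue("voice-2", is_ef=True)
print([sched.dequeue() for _ in range(4)])
```

The WFQ alternative trades some of this immediacy for protection: even with a high EF weight, the other classes keep a guaranteed share of the link.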