Project Blog

Week 15 - May 10th 2022

Discussed the tentative slides I had made for my oral defense. Chris showed me the room and

described the procedures that I would have to go through during and after my oral defense. He

emphasized that I also increase the size of the font of my tables and add diagrams of all my

ML models. I still have not filled out some slides and they will have to be done by the 18th.

Things to do for this week:

- Finish slides for presentation

- Finish up any last minute experiments

Week 14 - May 3rd 2022

We went over the rough draft of my report and talked about upcoming tasks that need to be done

in order to pass CS298. I also asked him about graduation details since I missed the deadline to

send in forms to apply for graduation this quarter.

Things to do for this week:

- Email Mark and Robert to find times where they can attend my defense.

- Submit the report to turnitin.com link

- Fill out defense form request

- Make slides for presentation

- Make changes to the paper that Chris marked up

Week 13 - April 26th 2022

Discussed the relevance of results of the experiments that I completed and how to interpret them.

Random noise was applied in larger and larger amounts; however, the more noise that was added,

the more galaxy classifications were guessed. This means the galaxy sensor is more noisy. Also

confirms the noise multiplier trend extends all the way down to a 0.3 multiplier.
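
As a rough illustration of the random-noise experiment above, the sketch below adds zero-mean Gaussian noise to a crop at increasing multipliers. The function name `add_random_noise`, the Gaussian noise model, the unit standard deviation, and every multiplier other than 0.3 are assumptions made for illustration, not the settings used in the experiments.

```python
import numpy as np

def add_random_noise(crop, multiplier, rng, sigma=1.0):
    """Add zero-mean Gaussian noise scaled by `multiplier` to a uint8 crop."""
    noise = rng.normal(0.0, sigma, size=crop.shape)  # assumed noise model
    noisy = crop.astype(np.float64) + multiplier * noise
    # Round and clip back into the valid 8-bit pixel range.
    return np.clip(np.rint(noisy), 0, 255).astype(np.uint8)

rng = np.random.default_rng(0)
crop = np.full((128, 128, 3), 128, dtype=np.uint8)  # flat grey stand-in for a real crop
multipliers = [0.3, 1.0, 3.0, 10.0]  # 0.3 comes from the notes; the rest are illustrative
noisy_crops = {m: add_random_noise(crop, m, rng) for m in multipliers}

for m, noisy in noisy_crops.items():
    # Mean absolute change from the original grey level grows with the multiplier.
    print(m, np.abs(noisy.astype(int) - 128).mean())
```

Each noisy crop would then be passed to the classifier to see how the predicted split moves as the multiplier grows.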

Try to add a recipe section where I give advice on what people should do to prevent classifiers

from guessing correctly. Give advice on best way to screw up a classifier without harming image.

Things to do for this week:

- Finish report

- Try to implement cross validation

- Try to add noise to training set to increase robustness of the model

- Make simple CNN classifier

Week 12 - April 19th 2022

I first showed Chris the results of using the classifying model to classify the images using

different denoising methods. Using the denoise_tv_chambolle method from skimage gave me results

closest to a 50-50 split without being too biased towards one camera model. This contrasts with

the results that Lukas got because he claimed that wavelet based denoising methods work the best.

I do make the assumption that the best denoising method would result in a classifier producing

a 50-50 split. I also showed him the results of weighting the noise before addition onto cropped

images for spoofing. I found that there is a trend with weights that are smaller (< 1.0) producing

better results for being able to spoof with iphone noise. The opposite is true with galaxy noise

imprinting classifying more correctly with values > 1.0. I still need to test more to find

where the optimal values are for each class. I should have also mentioned in the meeting that I handle

the loss of precision when converting floats to integers when saving images by rounding and not

truncation. I also save the resulting images as pngs to avoid compressing the image with the jpg format any more than necessary.
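
To make the rounding point concrete, here is a minimal sketch of adding a weighted noise print to a crop and converting back to 8-bit pixels. The function name `imprint_noise` and the residual values are made up for illustration; only the idea of weighting the noise and rounding instead of truncating comes from the notes.

```python
import numpy as np

def imprint_noise(crop, noise_print, weight):
    """Add a weighted float noise print to a uint8 crop, rounding on conversion."""
    spoofed = crop.astype(np.float64) + weight * noise_print
    return np.clip(np.rint(spoofed), 0, 255).astype(np.uint8)

crop = np.array([[100, 100], [100, 100]], dtype=np.uint8)
noise_print = np.array([[0.6, -0.6], [1.4, -1.4]])  # made-up residual values

rounded = imprint_noise(crop, noise_print, weight=1.0)
truncated = (crop + 1.0 * noise_print).astype(np.uint8)  # drops the fractional part

print(rounded)    # [[101  99] [101  99]]
print(truncated)  # [[100  99] [101  98]]
```

Truncation shifts two of the four pixels by a full grey level relative to rounding, which matters when the noise being imprinted is itself only a few levels in size. Writing the result to a lossless format such as PNG keeps these values exactly.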

The only paper that I have seen that actually explains how they denoise their images is the original

paper on noise residuals by Lukas. He uses a wavelab package and modifies it. Hopefully I can access

that package. Many other papers start with a synthetic image and don't need to denoise, as

synthetic images don't go through a digital imaging pipeline that imprints noise. There are also

papers that just classify images and don't even bother denoising. Professor Pollett also mentioned that

since most state of the art classifiers are CNNs, I should try to have a CNN for a classifier.

Things to do for this week:

- Try cross validation on data.

- Add random noise onto iphone and galaxy test images until I achieve a 50-50 split. If I can't achieve a 50-50 split, I will need to explain why. I bet $1 that the random noise will eventually converge to a point where the images will be classified as galaxy8c more.

- Add random noise to the training set of the classifier to see if I can improve the results and make the model more robust.

- GET WRITING THE REPORT!

- Bring Iphone alpha injection lower until accuracy goes down to find maximum alpha value for optimum accuracy.

- Test Galaxy8L images to see if the model can differentiate between phone IDs

- Remind Chris to look up ACGANs before next meeting

- Make simple CNN model for classification

- Test on images made up of random pixels

- Check out the wavelab url that the Lukas paper mentioned.

Week 11 - April 12th 2022

After generating enough crops to conduct all the experiments, I discovered that

even the base test was having low accuracy, usually leaning heavily towards

predicting one model over the other. I tried precropping the training set,

saving the crops as pngs, and using other classifying models. However,

I finally discovered that I had accidentally left in an old variable that cropped the

test images to a larger size than they should be. The result was the model

predicting on test images with large black borders shown below.

After fixing that bug, the results for experiment 1 were much better. Since I

have high accuracy for training and validation, maybe my classifier is

overfitting the data now. Or maybe the GAN is overfitting now.

Experiment 2 shows that my denoising method does not successfully remove the

camera model noise since the classifier can still detect the correct model at

the same accuracy as Exp 1. Professor Pollett suggested that I try adding

random noise to the test croppings at different weights in an effort to obfuscate the camera model noise;

then, once the model is fooled sufficiently, I can add on the noise print for

spoofing.

I should also look at other research papers to find out how others

did their denoising. He also mentioned I can use luminance to scale individual pixels,

but I do want my denoising method to be scene invariant so I won't do it. I will also try to

fine tune the GAN after Exp 2 gets 50-50 results. I could also use the other galaxy8

phone on the classifier to see if the model currently can distinguish between phone IDs.

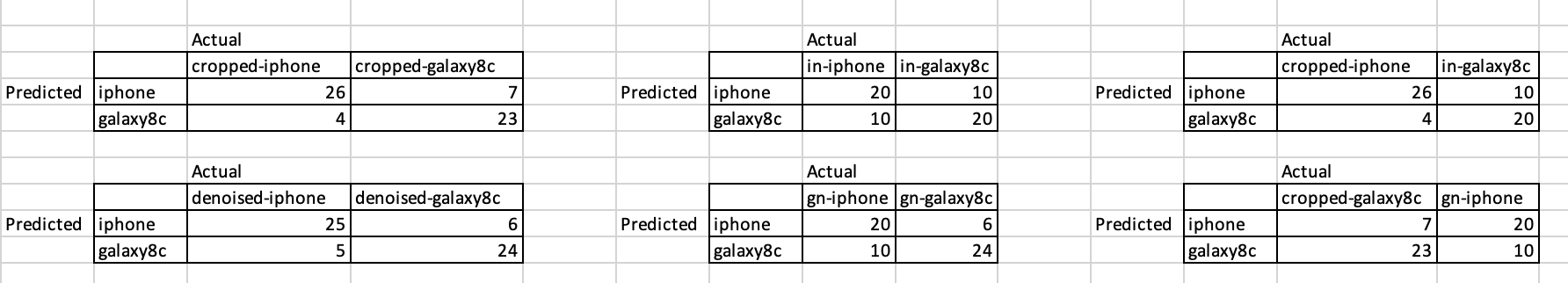

Experiment results:

Things to do for this week:

- Find a better method for denoising images

- Conduct the random noise experiment

- Start writing the report

Week 10 - April 5th 2022

I was having issues with the test set being heavily favored towards classifying images as iphone

regardless of noise. I explained that the model trains on 5 croppings of a high resolution image

(3024x4032), while the test set just takes 5 croppings (which end up being the whole image) of a

128x128 image. This is due to the GAN only producing 128x128 noise prints. The GAN already takes

20 minutes to train on 240 128x128 image croppings. So, training a single GAN on the whole image

would take 732 times longer (10.2 days) and I need to train one GAN model per camera model. This

is infeasible and Chris told me that I can just make the training set the same as the test set

by doing 5 128x128 croppings of the training set before feeding into the model and just using

the center == true method to avoid the preprocessing taking lh, rb... crops. I also proposed

using the same technique on the images to train the GAN. I will take 5 crops for each image (

maybe in rb, lh... positions or randomly) and use that set to train the GAN. The GAN will end up

having 5x240 = 1200 images to train on. The result will have 128x128 images for testing and

training and manually doing the cropping before data preprocessing.
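
The cropping plan and the training-time estimate above can be sketched as follows. The helper name `five_crops` and the corner-plus-center layout are assumptions about what the lh, rb... positions refer to, and the estimate assumes GAN training cost scales linearly with pixel count. With the exact 3024x4032 resolution the ratio comes out near 744; the 732 figure in the notes is what a rounded 3000x4000 (12 MP) image gives, which is presumably where it came from.

```python
import numpy as np

CROP = 128

def five_crops(image, size=CROP):
    """Return four corner crops plus the center crop of an H x W x C image."""
    h, w = image.shape[:2]
    top, left = (h - size) // 2, (w - size) // 2
    return [
        image[:size, :size],                      # top-left
        image[:size, w - size:],                  # top-right
        image[h - size:, :size],                  # bottom-left
        image[h - size:, w - size:],              # bottom-right
        image[top:top + size, left:left + size],  # center
    ]

image = np.zeros((3024, 4032, 3), dtype=np.uint8)  # placeholder for a full-resolution photo
crops = five_crops(image)
print(len(crops), crops[0].shape)  # 5 (128, 128, 3)

# Training-time estimate, assuming cost grows linearly with the number of pixels.
minutes_per_gan = 20
scale = (3024 * 4032) / (CROP * CROP)
days = scale * minutes_per_gan / 60 / 24
print(round(scale), round(days, 1))  # 744 10.3
```

With 240 images and five crops each, this yields the 5x240 = 1200 training crops mentioned above.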

Chris also mentioned conducting several experiments illustrated in the image below

Experiment order:

1 3 5

2 4 6

Symbols in table are ideal results:

⋀ means a high number

⋁ means a low number

- means they are all equal

in-i: means iphone denoised image with iphone spoofed noise added

in-g: means galaxy denoised image with iphone spoofed noise added

gn-i: means iphone denoised image with galaxy spoofed noise added

gn-g: means galaxy denoised image with galaxy spoofed noise added

For experiments 5 and 6, i and g are original cropped non denoised iphone and galaxy images

Experiment 1: non-spoofed images

Experiment 2: denoised images with no noise spoofing

Experiment 3: iphone noise spoofing on all denoised images from both classes

Experiment 4: galaxy noise spoofing on all denoised images from both classes

Experiment 5: iphone noise spoofed images only for denoised galaxy images

Experiment 6: galaxy noise spoofed images only for denoised iphone images

Can also do same experiments with a different galaxy phone of the same model

Report template:

40 pages of report

1. intro

2. background of the field of study

3. preliminary work to catch the reader up to speed on the technical details of the subject

4. model design

5. implementation of model

6. experiments - setup - expectations - results (can also mention failed experiments)

7. conclusion

Things to do for this week:

- Work on cropping the datasets for GAN and Classifier

- Get results of the experiments discussed

- Fine tune hyperparameters of models

Week 9 - Mar 29th 2022

Spring break, no meeting

Week 8 - Mar 22nd 2022

Let professor know that I have a current working implementation of a GAN and Classifier. Also mentioned the changes I made to the GAN since last week. I changed the noise prints to be saved as npz files instead of images in order to accurately save the negative noise values, since images cannot have negative pixel values. I also removed noise scaling on noise prints. Scaling is useful for discrimination, but with it the generator will not be able to produce an accurate noise reconstruction for spoofing.
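
A small sketch of why the npz format helps here: a float noise print with negative entries survives a round trip through `np.savez_compressed`, while converting it to an 8-bit image array loses the negative values. The file name, the key `noise`, and the array values are placeholders chosen for illustration.

```python
import os
import tempfile
import numpy as np

noise_print = np.array([[-2.5, 0.0], [1.25, -0.75]], dtype=np.float32)  # made-up values

# An 8-bit image array cannot represent the negative entries.
as_image = np.clip(noise_print, 0, 255).astype(np.uint8)

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "noise_print.npz")   # hypothetical file name
    np.savez_compressed(path, noise=noise_print)  # "noise" is an arbitrary key
    with np.load(path) as data:
        restored = data["noise"]

print(np.array_equal(restored, noise_print))  # True
print(as_image.min())                         # 0
```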

Also confirmed that I need to have three separate sets of data. One set for training the GAN, another for training the Classifier, and the last set (will include spoofed images from the Generator) will be for testing the classifier with 90/10 training test split.

Could also use images from the same phone model and test to see if I can spoof specific phones even of the same model type. I will probably use two galaxy s8's and iphone X. Some pictures from the dataset will be from the same tripod position but they will probably be included in the testing set.

Original dataset that the classifier used had 275 images per class. So I should also have 275 images per camera model to train the classifier. Maybe GAN can have around 240 images per class.

I can make a graph of Accuracy of spoofed images vs training set size of the classifier to see if there is a sharp decrease in accuracy when the sample size drops beneath a threshold. I may be able to infer something at that stage.

Things to do for this week:

- Progress Update with committee members in mid April

- Create code to tie both models together and obtain results

- Fine tune model to get good results

Week 7 - Mar 15th 2022

Discussed concerns about model run time since the input dimensions are very high if I were to have noise that is RGB and is the full dimensions of the picture. Since noise is thought to be relatively local repeating patterns, Chris suggested that I just use random crops of images and treat them as full size images, since most detection models only classify images based on crops anyway. Chris also mentioned that I still need to update the blog and revise my proposal's timeline so that the report is finished by May 1st.

Things to do for this week:

- Work on the classifier to score the noise that I've obtained from the generator

- Update Blog and CS298 proposal timeline

- Convert noise to RGB

Week 6 - Mar 8th 2022

Canceled meeting due to lack of significant progress on the project.

Week 5 - Mar 1st 2022

Found an article to base my GAN network around:

D. Cozzolino, J. Thies, A. Rössler, M. Nießner and L. Verdoliva, "SpoC: Spoofing Camera Fingerprints," 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), 2021, pp. 990-1000, doi: 10.1109/CVPRW53098.2021.00110.

Discussed that training size should be approximately the size of someone's phone camera album, since

it would more closely mirror a real life attack. Would try to get two different camera rolls and

spoof one camera's images onto the other.

Things to do for this week:

- Continue to work on the GAN

Week 4 - Feb 22nd 2022

My results with PCA and LBP were not great. I would like to do more extensive testing with the sample size and other hyper parameters, but will have to put that on the backburner, so I can start on trying out GANs.

Things to do for this week:

- Start on making a GAN model for noise spoofing

Week 3 - Feb 15th 2022

Experimented with Local binary pattern image augmentation. Inspired by

G. Xu and Y. Q. Shi, "Camera Model Identification Using Local Binary Patterns," 2012 IEEE International Conference on Multimedia and Expo, 2012, pp. 392-397, doi: 10.1109/ICME.2012.87.

Was able to preprocess data from 5 different crops. LBPs use pixel contrast to detect edges and shapes in windows.

The windows are cleverly assigned numerical values to group certain pixel regions for classification.
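
For reference, a minimal sketch of the basic 3x3 local binary pattern operator: each neighbor is compared against the center pixel and the eight comparison bits are packed into one code per pixel. This is the textbook operator only, not the feature set from the Xu and Shi paper or the preprocessing I actually ran; the bit ordering and the example window are arbitrary choices.

```python
import numpy as np

def lbp_3x3(gray):
    """Basic 8-neighbour LBP code for every interior pixel of a 2-D array."""
    g = gray.astype(np.int32)
    center = g[1:-1, 1:-1]
    # Neighbour offsets in clockwise order starting at the top-left pixel.
    offsets = [(0, 0), (0, 1), (0, 2), (1, 2), (2, 2), (2, 1), (2, 0), (1, 0)]
    codes = np.zeros_like(center)
    h, w = center.shape
    for bit, (dy, dx) in enumerate(offsets):
        neighbour = g[dy:dy + h, dx:dx + w]
        # Set this bit wherever the neighbour is at least as bright as the center.
        codes |= (neighbour >= center).astype(np.int32) << bit
    return codes.astype(np.uint8)

window = np.array([[5, 9, 1],
                   [4, 6, 7],
                   [2, 3, 8]])
print(lbp_3x3(window))  # [[26]]
```

In the example, the neighbors 9, 7, and 8 are at least as large as the center value 6, which sets bits 1, 3, and 4 (2 + 8 + 16 = 26). A histogram of these codes over a crop is what would typically be fed to a classifier.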

Things to do for this week:

- Combine LBP and PCA denoising to see if results improve

Week 2 - Feb 8th 2022

Was able to find two committee members. Had some conflicts with getting permission to take CS298. Had to contact the CS dept to sort things out. Had to make several Docusign links and had to fill out a "Petition for Advancement to Graduate Candidacy" form. Waiting on Response from CS dept

Things to do for this week:

- Make sure any other follow up forms get filled

- Work on PCA based denoising and see if these help improve the accuracy

Week 1 - Feb 1st 2022

Learned about the requirements towards getting permission to sign up for CS298. I have to find two full time professors who are willing to review my report and be on my committee.

Things to do for this week:

- Finish CS298 report

- Email professors that I would like to have as committee members

Week 16 - December 7th 2021

Discussed the next steps to look forward to. I have to start thinking about who I want on my committee.

Things to do for this week:

- Fix the corrections Dr. Pollett commented on in the report

- Fix HTML of pages I created.

Week 15 - November 30th 2021

Pre-Meeting Notes

Was able to complete report up to Dev 3. The other text below that is filler that will be deleted.

Things to do for this week:

- Finish first draft of CS297 report

Week 14 - November 23rd 2021

Pre-Meeting Notes

Followed these notebooks

- CelebA: https://github.com/Yangyangii/GAN-Tutorial

- cifar10: https://github.com/diegoalejogm/gans/blob/master/2.%20DC-GAN%20TensorFlow.ipynb

- mnist: https://www.tensorflow.org/tutorials/generative/dcgan

Discussed how a GAN could be adapted to the source camera identification problem and how to spoof images. Discussed the project report specs.

Things to do for this week:

- Work on Report

- Work more on GAN for Dev 5

- Read and report Ref 5

Week 13 - November 16th 2021

Discussed the research paper that was REF 3. Even though the topic wasn't ideal timing, it was important to see how robust the tests and current algorithms were for noise print detection. The main takeaway from this article was that noiseprints can be completely removed and fool detection models. I need to take this a step further and imprint a fake noise to fool a model to think it's a different class.

Things to do for this week:

- Finish dev4: implement a GAN

Week 12 - November 10th 2021

Discussed the results of the benchmark and the types of tests they did. Discussed the difficulties of getting the benchmark to run. Decided next steps were to start working on getting a GAN working and to get deliverable 3 on the website.

Things to do for this week:

- Get Deliverable 3 on the website

- Start implementing a GAN

- Present Ref 3

Week 11 - November 2nd 2021

Pre-Meeting Notes

Potential benchmark

- https://github.com/andrewlewis/camera-id I tried to use this for a while since it implemented the original PRNU method by Lukas, but there was no environment file and too many dependency clashes, which caused me to give up

- most likely: https://github.com/RenatoBMLR/Camera-Model-Identification

- if the one above doesn't work out, I'll try this one: https://github.com/lonePatient/kaggle-camera-model-identification

Things to do for this week:

- Do a PSNR comparison with images from my phone and another phone

- Test out a benchmark

Week 10 - October 26th 2021

Pre-Meeting Notes

Potential benchmark

- https://github.com/polimi-ispl/camera-model-identification-with-cnn

- Discuss results from DEL2

Discussed the concerns about how accurate my method will be. Professor explained an experiment that I could do to test my findings. We also discussed ways in which I can find a benchmark. Also need to go back to dev2 sometime and test out if adding plt.gray() makes the noise prints better since they did that in their article. Lukas' article fails with cropping images. I can try resizing and see how that works. Ref: A_Survey_on_Digital_Camera_Image_Forensic_Methods

Things to do for this week:

- Find a benchmark and test it

Week 9 - October 19th 2021

Discussed the findings I had with the code I used to generate different denoised images. I found the bior3.5 wavelets had the most consistent results with producing denoised images. I didn't get the chance to test out noise generation methods as I had doubts about the scoring method. After discussing this with Chris, I determined that I would use AVG(PSNR)/VAR for scoring the denoised images and AVG(PSNR) for scoring the noiseprints.
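
One possible reading of the AVG(PSNR)/VAR score, sketched below, takes VAR to be the population variance of the same PSNR values being averaged. That reading, the function name `denoise_score`, and the sample PSNR values are all assumptions for illustration.

```python
from statistics import mean, pvariance

def denoise_score(psnr_values):
    """AVG(PSNR) / VAR over a list of PSNR values in dB."""
    return mean(psnr_values) / pvariance(psnr_values)

# Made-up PSNR values for two hypothetical denoising methods with the same average.
consistent = [30.0, 31.0, 29.0]
erratic = [25.0, 35.0, 30.0]
print(denoise_score(consistent) > denoise_score(erratic))  # True
```

Under this reading, two methods with the same average PSNR are separated by how tightly their PSNR values cluster, so the more consistent method scores higher.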

Things to do for this week:

- Finish up DEL2 code and update website

- Find and start working on pre-existing code to categorize images

Week 8 - October 12th 2021

Pre-Meeting Notes

- Share wavelet study notes and code. Also discuss approaches and techniques on how to model noise with professor

- Code is in the folder.php called: denoise_wavelet_personal.ipynb

Gave a brief overview on how wavelets can denoise images. Discussed the scikit-image library. The professor gave me advice on how to use PSNR as a metric to decide how good the noise print is without having the true raw image, since the nature of my project assumes that all images have inherent noise.
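
A toy version of wavelet denoising, to show the idea in a few lines: transform, shrink the detail coefficients, and invert. This uses a hand-written single-level Haar transform on a 1-D signal rather than the scikit-image routines or the wavelets I tested, and the test signal, noise level, and threshold are arbitrary choices.

```python
import numpy as np

def haar_forward(signal):
    """One level of the orthonormal Haar transform on an even-length 1-D signal."""
    pairs = signal.reshape(-1, 2)
    approx = (pairs[:, 0] + pairs[:, 1]) / np.sqrt(2)
    detail = (pairs[:, 0] - pairs[:, 1]) / np.sqrt(2)
    return approx, detail

def haar_inverse(approx, detail):
    """Invert haar_forward."""
    out = np.empty(approx.size * 2)
    out[0::2] = (approx + detail) / np.sqrt(2)
    out[1::2] = (approx - detail) / np.sqrt(2)
    return out

def soft_threshold(coeffs, t):
    """Shrink coefficients toward zero by t."""
    return np.sign(coeffs) * np.maximum(np.abs(coeffs) - t, 0.0)

rng = np.random.default_rng(0)
clean = np.repeat([10.0, 50.0, 20.0, 80.0], 16)  # piecewise-constant test signal
noisy = clean + rng.normal(0.0, 2.0, clean.size)

approx, detail = haar_forward(noisy)
denoised = haar_inverse(approx, soft_threshold(detail, t=6.0))  # t chosen by hand
noise_estimate = noisy - denoised  # plays the role of the noise print

print(np.mean((noisy - clean) ** 2), np.mean((denoised - clean) ** 2))
```

The residual `noisy - denoised` is the quantity of interest for this project: it is the part of the signal the denoiser attributes to noise.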

Things to do for this week:

- Calculate PSNR on multiple images of the same object using the same denoising method. Each image takes a turn acting as the target against the other images. Find the max difference between the PSNRs. Then do it all over again but use a different denoising method. The max PSNR difference will indicate how consistent the denoising method is. The bigger the value, the worse the method.

- In order to compare methods for finding the noise print (for example, uint vs scaled), we take multiple pics of different objects and find the variance between the noise prints of the pics using the same method. Then we use a different method and find the noise prints for the same set of images. The method that produces the smaller variances is the better method.
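
The PSNR consistency check in the first item above could look roughly like this. The helper names, the interpretation of "max difference" as the largest minus the smallest pairwise PSNR, and the synthetic stand-in images are assumptions; real inputs would be denoised shots of the same object.

```python
import numpy as np

def psnr(target, test, peak=255.0):
    """Peak signal-to-noise ratio in dB between two same-shaped images."""
    mse = np.mean((target.astype(np.float64) - test.astype(np.float64)) ** 2)
    return float("inf") if mse == 0 else 10.0 * np.log10(peak ** 2 / mse)

def max_psnr_spread(denoised_images):
    """Let each image act as the target in turn; return max PSNR minus min PSNR."""
    values = [
        psnr(target, other)
        for i, target in enumerate(denoised_images)
        for j, other in enumerate(denoised_images)
        if i != j
    ]
    return max(values) - min(values)

rng = np.random.default_rng(0)
scene = rng.integers(0, 256, size=(64, 64)).astype(np.float64)
# Stand-ins for denoised shots of one object: the scene plus small leftover noise.
shots = [np.clip(scene + rng.normal(0, s, scene.shape), 0, 255) for s in (1.0, 1.5, 2.0)]
print(round(max_psnr_spread(shots), 2))
```

Running `max_psnr_spread` once per denoising method on the same set of shots gives one number per method, and the method with the smaller spread is the more consistent one.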

Week 7 - October 5th 2021

Pre-Meeting Notes

- https://docs.google.com/presentation/d/1ly_VYzL0JL17yGcOhxmHFWWejnYXwQ_bpruRZpoO28c/edit?usp=sharing

Spent most of the meeting presenting [2]. Asked Prof. Pollett to clarify once more how wavelet transforms are better for signal reconstruction than Fourier transforms.

Things to do for this week:

- Start coding and getting familiar with the wavelet transform libraries

Week 6 - September 28th 2021

Pre-Meeting Notes

- Will give a brief overview on all my research into the concept of taylor series, Fourier transforms, and wavelets

- Show start of tutorials regarding wavelet transforms

- https://ataspinar.com/2018/12/21/a-guide-for-using-the-wavelet-transform-in-machine-learning/

Sources

Taylor Series

- https://www.youtube.com/watch?v=3d6DsjIBzJ4

Fourier Transforms

- real world coordinates: https://www.khanacademy.org/science/electrical-engineering/ee-signals/ee-fourier-series/v/ee-fourier-series-intro

- complex notation: https://www.youtube.com/watch?v=mkGsMWi_j4Q

- more explanation on scaling and inverse FT:

part 1: https://www.youtube.com/watch?v=1JnayXHhjlg

part 2: https://www.youtube.com/watch?v=kKu6JDqNma8

Wavelets

- https://www.youtube.com/watch?v=uk6nUkd6yQo

- https://www.youtube.com/watch?v=ZnmvUCtUAEE

- https://www.youtube.com/watch?v=MBnnXbOM5S4

Discussed the theory behind Taylor series, Fourier transforms, and wavelets. Prof. Pollett told me that I could use wavelets and any type of signal analysis/decomposition methods for any type of data. I was concerned that I would not be able to use wavelets since common applications apply to signals over time, amplitude, and frequency. I can use any type of data as dimensions to decompose over. We also discussed Heisenberg's uncertainty principle in more detail. It seems that a lot of these electrical engineering concepts can be applied to computer science as well. I was also reminded to try to separate the color channels into three different matrices to reduce dimensions.

Things to do for this week:

- Continue to work on dev 2

- Present Ref 2

Week 5 - September 21st 2021

Discussed Paper 1 again to further analyze the specific method by which they extracted sensor noise patterns from their dataset. The best method the authors found was a wavelet based denoising filter. The paper used a package from another paper but we would like to implement a wavelet based filter ourselves. I also plan to experiment with Fourier transformations as they used that to analyze the properties of noise in each pixel row.

Things to do for this week:

- Put deliverables in the sidebar

- Read up and implement a demo of wavelet decomposition

- Read up and implement a demo of Fourier transformation

Week 4 - September 14th 2021

Pre-Meeting Notes

- Didn't get around to finding the z-score for each pixel

- Took the avg of all the pictures and made a filter

- The subtraction of the filter from each image doesn't output very similar results

- Did not get to compute Z-score yet

Discussed the results of my experiment 1. Assumed that there were more white pixels than expected because, when converting signed ints to unsigned ints, the two's-complement representation causes the conversion to turn small negatives into large numbers. This turns some spots white. On average some spots will be small numbers and other spots will be large numbers (represented by black and white pixels). Also noticed larger blocks of pixels near the edges of the picture and finer grains of noise near the center. This indicates that the camera is more sensitive towards the middle of the lens. It is also possible that whiter images have less noise (larger blocks in the noise map). Also noticed that I save the noise maps as pngs; there is a little discrepancy since the input images are jpgs, but adding another layer of compression shouldn't improve the results too much. Also looked at the dataset and discovered that the images are not of the same objects across different camera models.
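
The wraparound described above is easy to reproduce in NumPy. The residual values are made up, and the clipping and mid-grey offset shown next to it are only two possible ways to display signed values, not what was used for the deliverable.

```python
import numpy as np

residual = np.array([-1, -3, 2, 0], dtype=np.int16)  # small signed differences

wrapped = residual.astype(np.uint8)          # conversion wraps modulo 256
clipped = np.clip(residual, 0, 255).astype(np.uint8)
shifted = (residual + 128).astype(np.uint8)  # re-centre so zero maps to mid-grey

print(wrapped)  # [255 253   2   0]
print(clipped)  # [0 0 2 0]
print(shifted)  # [127 125 130 128]
```

The values -1 and -3 become 255 and 253 after the cast, which is why small negative differences show up as white spots in the noise map.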

Things to do for this week:

- Re-read the first article and explain flat fielding and explain how their camera detection algorithms works

- Implement std-dev filter for deliverable 1

- Fix deliverable 1 in proposal

Week 3 - September 7th 2021

Discussed the various ways to take sensor noise patterns and any future complications that the project can hold. Discussed the above dataset and my updated proposal. I presented reference 1.

Things to do for this week:

- Make slides when presenting articles.

- Upload documents you want Pollett to see to the website before the meeting.

- Get started on deliverable 1.

- Set up a tripod with consistent lighting. Take ten pics of a single colored cardboard and use python image library to subtract the average pixel value of the images from each image. See if you can see a noise pattern that is consistent across all ten images. Also try subtracting the standard deviation from all images.

- Fix proposal by removing extra 7 in week 7 and put the calendar in a table.

- Eventually we may be able to distinguish the difference between phones of the same model.

- Download the dataset and inspect it.
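
The tripod experiment in the list above can be sketched with NumPy on synthetic data. The ten "shots" here are generated from a made-up fixed sensor pattern plus per-shot random noise, so the sizes, brightness, and noise levels are all assumptions; real photos would be loaded into the `shots` array instead.

```python
import numpy as np

rng = np.random.default_rng(0)
# Synthetic stand-in for ten shots of a flat card: fixed pattern plus per-shot noise.
fixed_pattern = rng.normal(0.0, 2.0, size=(32, 32))
shots = np.stack([120.0 + fixed_pattern + rng.normal(0.0, 1.0, (32, 32)) for _ in range(10)])

mean_image = shots.mean(axis=0)  # per-pixel average over the ten shots
std_image = shots.std(axis=0)    # per-pixel standard deviation
residuals = shots - mean_image   # each shot with the average subtracted

# The averaged image, minus its overall brightness, should track the fixed pattern.
estimate = mean_image - mean_image.mean()
print(np.corrcoef(estimate.ravel(), fixed_pattern.ravel())[0, 1])
```

In this toy model the pattern shared by all shots ends up in the average itself, while the per-shot residuals contain only the part that changes from shot to shot.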

Week 2 - August 31st 2021

Discussed the topic further. Pollett made suggestions on what types of deliverables he would like to see. He also explained what his role would be as my advisor and what he expects of me for CS297.

Things to do for this week:

- Make summaries of articles.

- Fill out CS297 proposal.

- Get started on deliverable 1.

Week 1 - August 24th 2021

Met with the rest of his students for the CS297/298 classes and decided when to meet. Made introductions with the other students and got to know each other. Professor suggested this project idea: finding a way to change the sensor data of an image to seem like it was taken from a different camera.

Things to do for this week:

- Find articles about this topic to further increase my knowledge of this subject.

- Begin thinking of deliverables to meet and make a schedule.