N-gram Word Predictor and String Matching Rock Paper Scissors Predictor

Description: The purpose during this phase was to examine a couple of prediction algorithms, one using n-grams and the other using string matching.

A word predictor was developed to examine n-grams. The program was written in C++ using MFC. It consists of an input dialog window and a display window. Its operation is summarized as follows. The user enters some text, clicks on the learn button, and the program will build an n-gram model. The value of n can be specified. Once the model is constructed, it is displayed in the display window. Afterwards, as the user enters more text, the program will use the n-gram model to predict the next word the user is most likely to type. The program can also save and load n-gram models so they don't need to be reconstructed everytime.

Download the n-gram word predictor: NgramWordPredictor.zip

To examine string matching prediction, a simple Rock, Paper, Scissors (RPS) game was made. Two versions were made, one using MFC, and the other being a Java applet. Both versions are identical in their functionality. Basically, the user plays RPS against the computer. The computer makes its move based only on the player's previous moves. The string matching prediction algorithm as described in [Mommersteeg04] is used by the computer. A small survey was taken of three human players who played a combined total of 112 rounds. Of the 112 rounds, the computer won 55 times, 49.1%. This is a 15.8% improvement over the expected outcome if the computer just randomly picks a move, which would be 33.3% wins.

Download the RPS game with string matching prediction Java applet: RPSApplet.jar

Download the RPS game with string matching prediction MFC version: RPSMFC.zip

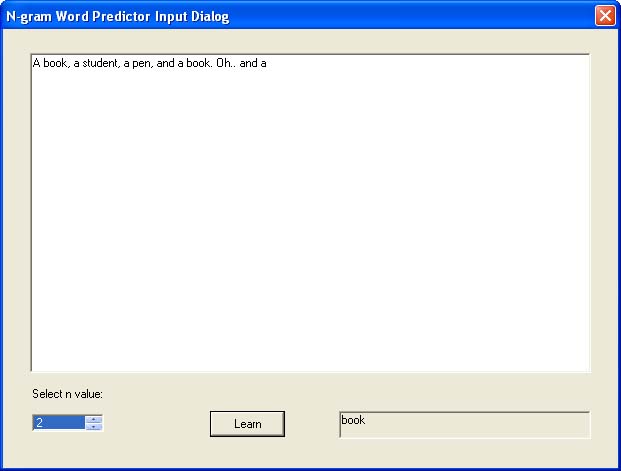

Example: Here is N-gram Word Predictor in action.

The screenshot shows the input dialog. The user has already constructed a model using the following text:

A book, a student, a pen, and a book.

As seen, the user has typed some text afterwards, and at the bottom, the program displays its prediction. Since this is a bi-gram (n = 2), the predicted word is based on only the previous word. In this case, given the training data, the program determined that the most likely word to follow the word, a is the word book.

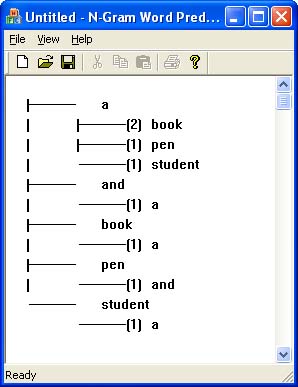

Example: Here is N-gram Word Predictor's n-gram model display.

This screenshot shows the display window. The model is the same one as in the last example; that is, it was constructed using the text:

A book, a student, a pen, and a book.

with a bi-gram model. As seen, the model shows that given the word a, the most frequent next word (seen four times) is the word book.

/******************************************************

* Copyright (c): 2004, All Rights Reserved.

* Project: CS 297 Deliverable 1 N-gram Word Predictor

* File: NgramWordPredictorView.cpp

* Purpose: View class for the word predictor program

* Start date: 8/30/2004

* Programmer: Leo Lee

*

*******************************************************/

#include "stdafx.h"

#include "NgramWordPredictor.h"

#include "NgramWordPredictorDoc.h"

#include "NgramWordPredictorView.h"

#include "DialogNgram.h"

#include "NgramNode.h"

#include ".\ngramwordpredictorview.h"

#ifdef _DEBUG

#define new DEBUG_NEW

#endif

// CNgramWordPredictorView

IMPLEMENT_DYNCREATE(CNgramWordPredictorView, CView)

BEGIN_MESSAGE_MAP(CNgramWordPredictorView, CView)

ON_WM_CREATE()

ON_COMMAND(ID_VIEW_INPUTDIALOG, OnViewInputdialog)

END_MESSAGE_MAP()

// CNgramWordPredictorView construction/destruction

/*****************************************************************************/

CNgramWordPredictorView::CNgramWordPredictorView()

/*

PURPOSE: Constructor for view class. Set's up the input dialog.

*/

{

((CNgramWordPredictorApp*)AfxGetApp())->setMainWindow(this);

m_pinputDialog = new CDialogNgram(this);

}

/*****************************************************************************/

CNgramWordPredictorView::~CNgramWordPredictorView()

/*

PURPOSE: Destructor.

*/

{

delete m_pinputDialog;

}

/*****************************************************************************/

BOOL CNgramWordPredictorView::PreCreateWindow(CREATESTRUCT& cs)

{

// TODO: Modify the Window class or styles here by modifying

// the CREATESTRUCT cs

return CView::PreCreateWindow(cs);

}

/*****************************************************************************/

// CNgramWordPredictorView drawing

static int yy = 0;

static int indent = 50;

static int offset = 20;

void CNgramWordPredictorView::OnDraw(CDC* pDC)

/*

PURPOSE: Draws the display window i.e. the n-gram model, by delegating

task to displayModel().

RECEIVES: pDC - pointer to device context for window.

*/

{

CNgramWordPredictorDoc* pDoc = GetDocument();

ASSERT_VALID(pDoc);

if (!pDoc)

return;

yy = 0;

CArray<bool> drawVertBar;

displayModel(pDC, pDoc->getModel(), 0, &drawVertBar);

}

/*****************************************************************************/

void CNgramWordPredictorView::displayModel(CDC *pDc,

CNgramNode *pnode, int level, CArray<bool> *drawVertBar)

/*

PURPOSE: Displays the n-gram model.

RECEIVES: pDc - device context for window.

pnode - current node in n-gram tree to display.

level - how deep the current node is in the n-gram tree.

drawVertBar - indicates whether a vertical bar should be drawn at

certain parts of the display.

REMARKS: The way the model is drawn is to indent each node's name by a certain offset

dependent on the level of the node.

*/

{

CList<CNgramNode*>* pchildren = pnode->getChildren();

POSITION curChildPos = pchildren->GetHeadPosition();

// draw vertical bar

for(int i = 0; i < level; i++)

{

if(drawVertBar->GetAt(i))

{

pDc->TextOut(i * indent + offset, yy, "|");

}

}

// draw horizontal bar

if(level > 0)

{

for(int i = 3; i < indent - 3; i++)

{

pDc->TextOut((level - 1) * indent + i + offset, yy, "-");

}

}

char str[10] = "";

if(pnode->getCount() != 0)

{

sprintf(str, "(%d)", pnode->getCount());

}

pDc->TextOut(level * indent + offset, yy, str);

pDc->TextOut(level * indent + offset + 25, yy, *pnode->getWord());

yy += 20;

for(int i = 0; i < pchildren->GetSize(); i++)

{

if(i < pchildren->GetSize() - 1)

{

drawVertBar->Add(true);

} else

{

drawVertBar->Add(false);

}

displayModel(pDc, pchildren->GetNext(curChildPos), level + 1, drawVertBar);

drawVertBar->RemoveAt(drawVertBar->GetSize() - 1);

}

}

/*****************************************************************************/

// CNgramWordPredictorView diagnostics

#ifdef _DEBUG

void CNgramWordPredictorView::AssertValid() const

{

CView::AssertValid();

}

/*****************************************************************************/

void CNgramWordPredictorView::Dump(CDumpContext& dc) const

{

CView::Dump(dc);

}

/*****************************************************************************/

CNgramWordPredictorDoc* CNgramWordPredictorView::GetDocument() const

// non-debug version is inline

{

ASSERT(m_pDocument->IsKindOf(RUNTIME_CLASS(CNgramWordPredictorDoc)));

return (CNgramWordPredictorDoc*)m_pDocument;

}

#endif //_DEBUG

/*****************************************************************************/

// CNgramWordPredictorView message handlers

int CNgramWordPredictorView::OnCreate(LPCREATESTRUCT lpCreateStruct)

/*

PURPOSE: Create the input dialog when the window is first created.

RECEIVES: lpCreateStruct - only used in parent method.

REMARKS: The input dialog cannot be created in the constructor because

the window isn't create yet there.

*/

{

if (CView::OnCreate(lpCreateStruct) == -1)

return -1;

m_pinputDialog->Create(IDD_DIALOGNGRAM, this);

m_pinputDialog->ShowWindow(SW_SHOW);

EnableScrollBarCtrl(SB_VERT);

EnableScrollBar(SB_VERT);

SetScrollRange(SB_VERT, 0, 100);

return 0;

}

/*****************************************************************************/

void CNgramWordPredictorView::OnViewInputdialog()

/*

PURPOSE: Handler for menu selection, which displays the input dialog.

*/

{

m_pinputDialog->ShowWindow(SW_SHOW);

}

/******************************************************

* Copyright (c): 2004, All Rights Reserved.

* Project: CS 297 Deliverable 1 N-gram Word Predictor

* File: NgramWordPredictorDoc.cpp

* Purpose: Doc class for the word predictor program

* Start date: 8/30/2004

* Programmer: Leo Lee

*

*******************************************************/

#include "stdafx.h"

#include "NgramWordPredictor.h"

#include "NgramWordPredictorDoc.h"

#include "NgramLearner.h"

#include "NgramPredictor.h"

#include "NgramNode.h"

#ifdef _DEBUG

#define new DEBUG_NEW

#endif

// CNgramWordPredictorDoc

IMPLEMENT_DYNCREATE(CNgramWordPredictorDoc, CDocument)

BEGIN_MESSAGE_MAP(CNgramWordPredictorDoc, CDocument)

END_MESSAGE_MAP()

const char* CNgramWordPredictorDoc::DELIMITERS = " ,.?'\";:[]{}\\|+=-_)(*&^%$#@!~`<>";

/*****************************************************************************/

// CNgramWordPredictorDoc construction/destruction

CNgramWordPredictorDoc::CNgramWordPredictorDoc()

/*

PURPOSE: Constructor, creates the learner, predictor and model to be used.

*/

{

m_plearner = new CNgramLearner();

m_ppredictor = new CNgramPredictor();

m_pmodel = new CNgramNode("");

}

/*****************************************************************************/

CNgramWordPredictorDoc::~CNgramWordPredictorDoc()

/*

PURPOSE: Destructor.

*/

{

delete m_plearner;

delete m_ppredictor;

delete m_pmodel;

}

/*****************************************************************************/

BOOL CNgramWordPredictorDoc::OnNewDocument()

{

if (!CDocument::OnNewDocument())

return FALSE;

clearModel();

// (SDI documents will reuse this document)

return TRUE;

}

/*****************************************************************************/

// CNgramWordPredictorDoc serialization

void CNgramWordPredictorDoc::Serialize(CArchive& ar)

/*

PURPOSE: Saves the n-gram model or loads model from a file.

RECEIVES: The stream to read from or write to.

*/

{

if (ar.IsStoring())

{

m_pmodel->Serialize(ar);

}

else

{

clearModel();

m_pmodel = new CNgramNode("");

m_pmodel->Serialize(ar);

}

}

/*****************************************************************************/

// CNgramWordPredictorDoc diagnostics

#ifdef _DEBUG

void CNgramWordPredictorDoc::AssertValid() const

{

CDocument::AssertValid();

}

/*****************************************************************************/

void CNgramWordPredictorDoc::Dump(CDumpContext& dc) const

{

CDocument::Dump(dc);

}

#endif //_DEBUG

// CNgramWordPredictorDoc commands

void CNgramWordPredictorDoc::learn(CDialogNgram *pinputDialog)

/*

PURPOSE: This function gets the n-value and text from the given input dialog,

then invokes the learner to modify the n-gram model based on this

new information.

RECEIVES: pinputDialog - The input dialog to get the text from to learn.

*/

{

pinputDialog->UpdateData(TRUE);

CString text;

pinputDialog->getTextArea()->GetWindowText(text);

int n = pinputDialog->getNSelect()->GetCurSel() + 1;

m_plearner->learn(m_pmodel, n, text);

UpdateAllViews(0);

}

/*****************************************************************************/

CString CNgramWordPredictorDoc::predict(CDialogNgram *pinputDialog)

/*

PURPOSE: Predicts the next word based on the previous n - 1 words

in the text area in pinputDialog.

RECEIVES: pinputDialog - The input dialog to get the text from to do the prediction.

RETURNS: The predicted next word.

*/

{

pinputDialog->UpdateData(TRUE);

CString text;

pinputDialog->getTextArea()->GetWindowText(text);

int n = pinputDialog->getNSelect()->GetCurSel() + 1;

return m_ppredictor->nextWord(m_pmodel, n, text);

}

/*****************************************************************************/

void CNgramWordPredictorDoc::clearModel()

/*

PURPOSE: Removes all children nodes from the root n-gram model node;

in effect, wiping out the current n-gram model.

*/

{

delete m_pmodel;

m_pmodel = new CNgramNode("");

UpdateAllViews(0);

}

/******************************************************

* Copyright (c): 2004, All Rights Reserved.

* Project: CS 297 Deliverable 1 N-gram Word Predictor

* File: NgramPredictor.cpp

* Purpose: Responsible for doing the prediction.

* Start date: 8/30/2004

* Programmer: Leo Lee

*

*******************************************************/

#include "stdafx.h"

#include "NgramWordPredictor.h"

#include "NgramPredictor.h"

#include "NgramNode.h"

#include "NgramWordPredictorDoc.h"

/*****************************************************************************/

// CNgramPredictor

CNgramPredictor::CNgramPredictor()

{

}

/*****************************************************************************/

CNgramPredictor::~CNgramPredictor()

{

}

/*****************************************************************************/

CString CNgramPredictor::nextWord(CNgramNode *pmodel, int n, CString prevWords)

/*

PURPOSE: Predicts the most likely next word based on the n-gram model

and the previous n - 1 words in prevWords.

RECEIVES: pmodel - The root node of the n-gram model.

n - The n value of the n-gram e.g. n == 3 for a tri-gram

prevWords - The previous entered text to base the prediction on.

RETURNS: The predicted next work, or "" (empty string) if the next

next word cannot be predicted i.e. prevWords does not contain

at least n - 1 words.

*/

{

// Get the last n - 1 words

// First reverse all the text

prevWords += " ";

prevWords.MakeReverse();

CString segment;

CString curWord;

int tokenPos = 0;

// Then get the last n - 1 words that are reversed

for (int i = 0; i < n - 1; i++)

{

curWord = prevWords.Tokenize(CNgramWordPredictorDoc::DELIMITERS, tokenPos);

if (curWord == "") return "";

// Not enough words to predict: need at least n - 1 words

segment += curWord + " ";

}

// Finally reverse the reversed words I got

segment.MakeReverse();

// Now we can traverse the n-gram tree and find the most probably next word

// First let's get to the level right before the leaf nodes

CNgramNode *curNode = pmodel;

tokenPos = 0;

for (int i = 0; i < n - 1; i++)

{

curNode = curNode->getChild(segment.Tokenize(CNgramWordPredictorDoc::DELIMITERS,

tokenPos).MakeLower());

if (curNode == 0) return "";

}

// Now we find the most likely next node from curNode's children i.e. highest count

UINT highCount = 0;

CNgramNode *predictNode = 0;

CList<CNgramNode*> *pchildren = curNode->getChildren();

POSITION curChildPos = pchildren->GetHeadPosition();

for (int i = 0; i < pchildren->GetSize(); i++)

{

curNode = pchildren->GetNext(curChildPos);

if (curNode->getCount() > highCount)

{ // Found a more likely node

highCount = curNode->getCount();

predictNode = curNode;

}

}

return *predictNode->getWord();

}

/******************************************************

* Copyright (c): 2004, All Rights Reserved.

* Project: CS 297 Deliverable 1 N-gram Word Predictor

* File: NgramLearner.cpp

* Purpose: Responsible for doing the learning.

* Start date: 8/30/2004

* Programmer: Leo Lee

*

*******************************************************/

#include "stdafx.h"

#include "NgramWordPredictor.h"

#include "NgramLearner.h"

#include "NgramNode.h"

#include "NgramWordPredictorDoc.h"

/*****************************************************************************/

// CNgramLearner

CNgramLearner::CNgramLearner()

{

}

/*****************************************************************************/

CNgramLearner::~CNgramLearner()

{

}

/*****************************************************************************/

void CNgramLearner::learn(CNgramNode *pmodel, int n, CString text)

/*

PURPOSE: Learns by building an n-gram tree model with the given text for

the given n value.

RECEIVES: pmodel - The root node of the current n-gram model.

n - The value of n of the n-gram i.e. n == 3 for a tri-gram.

text - The text to build the n-gram model with.

*/

{

int curTextPos = 0; // the position of the word next segment should start with

CList<CString> curSegment;

CString curWord = text.Tokenize(CNgramWordPredictorDoc::DELIMITERS, curTextPos);

if (curWord == "") return; // No words in text

// Grab the first segment of words

curTextPos = 0;

do

{

curWord = text.Tokenize(CNgramWordPredictorDoc::DELIMITERS, curTextPos);

curSegment.AddTail(curWord.MakeLower());

} while (curSegment.GetSize() < n && curWord != "");

if (curWord == "")

{ // Not enough words yet to build n-gram model

return;

}

CNgramNode *pcurNode;

CNgramNode *ptempNode;

POSITION curPosInSeg;

CString curSegWord;

do

{

// Go down the n-gram model tree and increment the counter at the leaf

curPosInSeg = curSegment.GetHeadPosition();

pcurNode = pmodel;

for (int i = 0; i < n; i++)

{

curSegWord = curSegment.GetNext(curPosInSeg);

ptempNode = pcurNode->getChild(curSegWord);

if (!ptempNode)

{ // I don't have this node yet, so make it

bool aa = curSegWord == "c";

ptempNode = pcurNode->addChild(curSegWord);

}

pcurNode = ptempNode;

}

// Increment the counter at the leaf

pcurNode->incCount();

// Get the next segment and repeat

curWord = text.Tokenize(CNgramWordPredictorDoc::DELIMITERS, curTextPos);

curSegment.RemoveHead();

curSegment.AddTail(curWord.MakeLower());

} while (curWord != "");

}

/******************************************************

* Copyright (c): 2004, All Rights Reserved.

* Project: CS 297 Deliverable 1 N-gram Word Predictor

* File: NgramNode.cpp

* Purpose: A node in the n-gram tree.

* Start date: 8/30/2004

* Programmer: Leo Lee

*

*******************************************************/

#include "stdafx.h"

#include "NgramWordPredictor.h"

#include "NgramNode.h"

#include ".\ngramnode.h"

/*****************************************************************************/

// CNgramNode

CNgramNode::CNgramNode(const CString &word, UINT count)

{

m_word = word;

m_count = count;

}

/*****************************************************************************/

CNgramNode::~CNgramNode()

{

POSITION pos = m_children.GetHeadPosition();

for (int i = 0; i < m_children.GetSize(); i++)

{

delete m_children.GetNext(pos);

}

}

/*****************************************************************************/

CNgramNode *CNgramNode::getChild(const CString &word)

/*

PURPOSE: Returns the n-gram node that is a child of this node with the given word.

RECEIVES: word - The word that the desired child contains.

RETURNS: The child node with the given word if it exists, else null.

*/

{

POSITION pos = m_children.GetHeadPosition();

CNgramNode *curNode;

for (int i = 0; i < m_children.GetSize(); i++)

{

curNode = m_children.GetNext(pos);

if (word == *curNode->getWord())

{ // Found the word

return curNode;

}

}

return 0; // no child node with this word

}

/*****************************************************************************/

CNgramNode *CNgramNode::addChild(const CString &word)

/*

PURPOSE: Adds a child node to this node with the given word.

RECEIVES: word - The word that the new child node will contain.

RETURNS: Pointer to the new child node.

*/

{

POSITION prevPos;

POSITION pos = m_children.GetHeadPosition();

for (int i = 0; i < m_children.GetSize(); i++)

{

prevPos = pos;

if (word < *m_children.GetNext(pos)->getWord())

{ // Found where word belongs

if (prevPos == m_children.GetHeadPosition())

{ // word belongs at head

m_children.AddHead(new CNgramNode(word));

return m_children.GetHead();

} else

{ // word belongs somewhere in the middle

m_children.InsertBefore(prevPos, new CNgramNode(word));

m_children.GetPrev(prevPos);

return m_children.GetAt(prevPos);

}

}

}

// Reached the end of the list, so word must belong at the tail

m_children.AddTail(new CNgramNode(word));

return m_children.GetTail();

}

/*****************************************************************************/

void CNgramNode::Serialize(CArchive& ar)

/*

PURPOSE: Save or load this node, and recursively, all of its children.

RECEIVES: ar - stream to read from or write to.

*/

{

int numChildren;

POSITION pos;

int size;

if (ar.IsStoring())

{ // storing code

size = m_children.GetSize();

ar << m_word << m_count << size;

pos = m_children.GetHeadPosition();

for (int i = 0; i < m_children.GetSize(); i++)

{

(m_children.GetNext(pos))->Serialize(ar);

}

}

else

{ // loading code

m_children.RemoveAll();

ar >> m_word >> m_count;

ar >> numChildren;

for (int i = 0; i < numChildren; i++)

{

CNgramNode *pnode = new CNgramNode("");

pnode->Serialize(ar);

m_children.AddTail(pnode);

}

}

}

;

Example:This is the Rock Paper Scissors applet. Have fun!

Select either rock, paper, or scissors and click the appropriate button on the left. The computer will then make its move and the scores will be updated depending on the outcome. A white score means that side won the round, black means that side lost, and blue means there was a tie. To see what is going on under the hood with the string matching predictor, check the "Show Sequence" checkbox. Two strings will be displayed. Prediction is simply what the computer predicts to be the next move you will make. The rather long sequence string are your most recent previous moves, each with a number to the right of it. R is rock, P is paper, and S is scissors. The number is the string match size for that element, see [MommerSteeg04] for more detail. Basically if you find the largest string match size number, the move after is the prediction.

/******************************************************

* Copyright (c): 2004, All Rights Reserved.

* Project: CS 297 Deliverable 1, Rock, Paper, Scissors string match predictor

* File: StringPredictor.java

* Purpose: Handles the string matching prediction.

* Start date: 9/15/2004

* Programmer: Leo Lee

*

*******************************************************/

import java.util.ArrayList;

public class StringPredictor

{

public StringPredictor()

/*

PURPOSE: Constructor.

*/

{

m_maxMatchIndex = 0;

m_maxMatchSize = 0;

m_sequence = new ArrayList();

m_histogram = new ArrayList[3];

for(int i = 0; i < 3; i++)

{

m_histogram[i] = new ArrayList();

}

}

public void addEntry(int move)

/*

PURPOSE: Adds a new entry to the sequence of observed moves.

This will update both the sequence and histogram.

RECEIVES: move - The move of the new entry to add.

*/

{

m_maxMatchSize = 0;

for (int i = m_histogram[move].size() - 1; i >= 0; i--)

{ // update the counts for all entries of type move in sequence

int curEntryIndex = ((Integer)m_histogram[move].get(i)).intValue();

int curEntryCount = 1; // If this is first entry its count is 1

if (curEntryIndex != 0)

{ // Only go in here if this isn't the first entry

curEntryCount = ((RPSEntry)m_sequence.get(curEntryIndex - 1)).getCount() + 1;

}

((RPSEntry)m_sequence.get(curEntryIndex)).setCount(curEntryCount);

if (curEntryCount > m_maxMatchSize)

{

m_maxMatchSize = curEntryCount;

m_maxMatchIndex = curEntryIndex;

}

}

m_histogram[move].add(new Integer(m_sequence.size()));

m_sequence.add(new RPSEntry(move));

}

int predictNextMove()

/*

PURPOSE: Returns the predicted next move in the sequence.

RETURNS: The predicted next move.

*/

{

if(m_maxMatchSize > 0)

{

return ((RPSEntry)m_sequence.get(m_maxMatchIndex + 1)).getMove();

}

return RPSEntry.UNKNOWN;

}

int getLastMove()

/*

PURPOSE: Returns the last move made by the player.

RETURNS: Last move made by the player.

*/

{

return ((RPSEntry)m_sequence.get(m_sequence.size() - 1)).getMove();

}

ArrayList getSequence()

/*

PURPOSE: Returns the sequence.

RETURNS: The sequence.

*/

{

return m_sequence;

}

// Fields

private ArrayList m_sequence; // of RPSEntry

private ArrayList m_histogram[];

private int m_maxMatchIndex;

// index in sequence where end of largest match is

private int m_maxMatchSize; // the actual length of the largest match

}

/******************************************************

* Copyright (c): 2004, All Rights Reserved.

* Project: CS 297 Deliverable 1, Rock, Paper, Scissors string match predictor

* File: RPSEntry.java

* Purpose: A node in the sequence, consists of a move and count.

* Start date: 9/15/2004

* Programmer: Leo Lee

*

*******************************************************/

public class RPSEntry

{

public RPSEntry(int move)

/*

PURPOSE: Constructor.

*/

{

m_move = move;

m_count = 1;

}

public void setCount(int count)

/*

PURPOSE: Mutator for count variable.

RECEIVES: The new value of count.

*/

{

m_count = count;

}

public int getCount()

/*

PURPOSE: Accessor for count.

RETURNS: The count for this entry.

*/

{

return m_count;

}

int getMove()

/*

PURPOSE: Get the move for this entry.

RETURNS: The move for this entry.

*/

{

return m_move;

}

public String toString()

/*

PURPOSE: Returns the string representation of this entry's move.

RETURNS: The string representation of this entry's move.

*/

{

if (m_move == ROCK)

{

return "Rock";

} else if (m_move == PAPER)

{

return "Paper";

} else if (m_move == SCISSORS)

{

return "Scissors";

} else

{

return "Unknown";

}

}

public String toSmallString()

/*

PURPOSE: Returns the string representation of this entry, move and count.

RETURNS: The string as described.

*/

{

if (m_move == ROCK)

{

return "R" + m_count;

} else if (m_move == PAPER)

{

return "P" + m_count;

} else if (m_move == SCISSORS)

{

return "S" + m_count;

} else

{

return "U" + m_count;

}

}

// Some constants

public static final int ROCK = 0;

public static final int PAPER = 1;

public static final int SCISSORS = 2;

public static final int UNKNOWN = 3;

// Fields

private int m_move;

private int m_count;

}

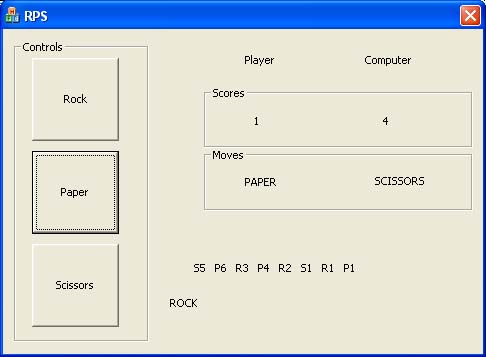

Example:This is a screenshot of the MFC Rock Paper Scissors.

This is basically the same thing as the Java applet seen above with two slight differences. First, this version uses text instead of graphics for the buttons. Second, this version has the sequence shown the whole time. Basically this version was developed first, so the applet is an improved version.