Language Modeling, Test Collections, Open-Source IR Systems, Inverted Indexes

CS267

Chris Pollett

Aug. 29, 2011

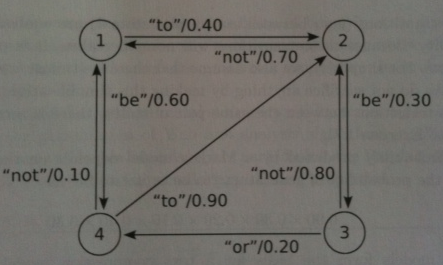

Let's recall the notion of a higher-order language model that we introduced on Monday ...

Exercise 1.4 Starting in an unknown state, the Markov model above generates "to be". What state or states could be the current state of the model after generating this text?

Answer. In the above diagram, only states 1 and 4 have a non-zero probability transition on the word "to". For each of these states, there is exactly one non-zero probability transition on this word, and it goes to state 2. From state 2, there is exactly one non-zero probability transition on the word "be", and it goes to state 3. Therefore, whatever state the Markov model started in, after generating "to be" it must be in state 3.
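
The reasoning in the answer can be sketched as a small set-tracking computation: start with every state as a candidate, then follow only the non-zero transitions on each generated word. This is a minimal sketch, not the model from the diagram. The table `TRANSITIONS` is an assumption that lists only the transitions named in the answer (probabilities omitted, since only non-zero versus zero matters here), and `STATES` assumes the model has just the four states mentioned.

```python
# Hypothetical partial transition table: only the non-zero transitions
# that the answer above mentions. The real diagram may contain more.
TRANSITIONS = {
    (1, "to"): {2},
    (4, "to"): {2},
    (2, "be"): {3},
}

# Assumption: the model has exactly the states named in the answer.
STATES = {1, 2, 3, 4}


def possible_states(transitions, states, words):
    """Return the set of states the model could be in after generating words,
    given that the starting state is unknown."""
    current = set(states)
    for word in words:
        # Keep only the targets of non-zero transitions on this word.
        current = {
            target
            for state in current
            for target in transitions.get((state, word), set())
        }
    return current


print(possible_states(TRANSITIONS, STATES, ["to", "be"]))
```

Under these assumptions the only surviving candidate after "to be" is state 3, which matches the answer.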

<DOC> <DOCNO>LA051990-0141</DOCNO> <HEADLINE>COUNCIL VOTES TO EDUCATE DOG OWNERS</HEADLINE> <P> The City Council stepped carefully around enforcement of the dog-curbing ordinance this week, vetoing the use of police to enforce the law. </P> ... </DOC>

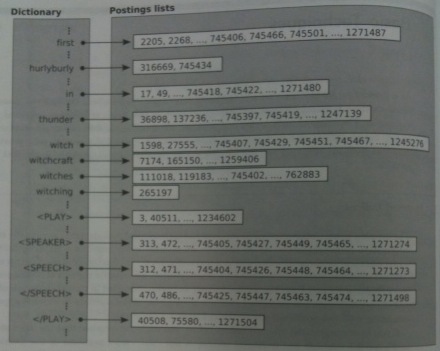

    nextPhrase(t[1], t[2], ..., t[n], position)
    {
        v := position
        for i := 1 to n do
            v := next(t[i], v)
        if v == infty then // infty represents after the end of the posting list
            return [infty, infty]
        u := v
        for i := n-1 downto 1 do
            u := prev(t[i], u)
        if (v - u == n - 1) then
            return [u, v]
        else
            return nextPhrase(t[1], t[2], ..., t[n], u)
    }
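
The pseudocode above can be tried out with a runnable Python sketch. It assumes a toy in-memory index in which each term maps to a sorted list of 1-based positions, and it implements `next` and `prev` with binary search from the standard `bisect` module. The names `build_index`, `next_pos`, `prev_pos`, and `INF` are hypothetical helpers introduced for this illustration, not part of the lecture's code.

```python
from bisect import bisect_left, bisect_right

INF = float("inf")  # stands for "after the end of the posting list"


def build_index(tokens):
    """Map each term to the sorted list of 1-based positions where it occurs."""
    index = {}
    for pos, term in enumerate(tokens, start=1):
        index.setdefault(term, []).append(pos)
    return index


def next_pos(index, term, current):
    """Smallest position of term strictly after current, or INF if none."""
    postings = index.get(term, [])
    i = bisect_right(postings, current)
    return postings[i] if i < len(postings) else INF


def prev_pos(index, term, current):
    """Largest position of term strictly before current, or -INF if none."""
    postings = index.get(term, [])
    i = bisect_left(postings, current)
    return postings[i - 1] if i > 0 else -INF


def next_phrase(index, terms, position):
    """Return (u, v), the first occurrence of the phrase starting after position."""
    # Forward pass: find each term in order, each after the previous one.
    v = position
    for term in terms:
        v = next_pos(index, term, v)
    if v == INF:
        return (INF, INF)
    # Backward pass: tighten the candidate interval from the right end.
    u = v
    for term in reversed(terms[:-1]):
        u = prev_pos(index, term, u)
    if v - u == len(terms) - 1:
        return (u, v)  # terms are adjacent, so this is a phrase match
    return next_phrase(index, terms, u)


index = build_index("to be or not to be that is the question".split())
print(next_phrase(index, ["to", "be"], -INF))
print(next_phrase(index, ["to", "be"], 1))
```

Calling `next_phrase` repeatedly, each time passing the start `u` of the previous match as the new `position`, enumerates all occurrences of the phrase until `(INF, INF)` is returned.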