Learning to Crawl, Inverted Indices

CS267

Chris Pollett

Sep. 7, 2011

sudo apt-get install curl

sudo apt-get install apache2

sudo apt-get install php5

sudo apt-get install php5-cli

sudo apt-get install php5-sqlite

sudo apt-get install php5-curl

sudo apt-get install php5-gd

php fetcher.php terminal

In the other terminal, type:

php queue_server.php terminal

Be aware that, for this to work, the php command has to be in your PATH environment variable; otherwise, you need to give the full path to php.
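If you are unsure whether php is on your PATH, you can ask for the executable's location. The short Python sketch below does this with the standard library's `shutil.which`. The function name `find_php` is our own, and this is only one way to check; running `which php` in a Linux or macOS shell works too.

```python
import shutil
from typing import Optional


def find_php() -> Optional[str]:
    """Return the full path of the php executable if it is on PATH, else None."""
    return shutil.which("php")


if __name__ == "__main__":
    path = find_php()
    if path is None:
        print("php is not on your PATH; call it by its full path instead.")
    else:
        print(f"php found at {path}")
```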

export JAVA_HOME=/System/Library/Frameworks/JavaVM.framework/Versions/1.6/Home

<property>
  <name>http.agent.name</name>
  <value>My Spider</value>
</property>

+^http://([a-z0-9]*\.)*nutch.apache.org/

This line would allow the crawler to crawl nutch.apache.org and its subdomains.
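To see which URLs this filter line accepts, here is a small Python sketch that mimics such a rule list. It assumes, as we understand Nutch's regex URL filter, that a leading `+` marks an include rule, a leading `-` marks an exclude rule, the first matching rule decides, and a URL matching no rule is rejected. Python's `re` stands in for Java's regex engine; for this particular pattern the two behave the same. The names `parse_rules` and `accepts` are hypothetical helpers, not Nutch APIs.

```python
import re
from typing import List, Tuple

# The include rule from the slide: "+" marks an accept rule, the rest is the regex.
RULE_LINES = [r"+^http://([a-z0-9]*\.)*nutch.apache.org/"]


def parse_rules(lines: List[str]) -> List[Tuple[bool, re.Pattern]]:
    """Turn "+regex" / "-regex" lines into (include?, compiled pattern) pairs."""
    rules = []
    for line in lines:
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        sign, pattern = line[0], line[1:]
        rules.append((sign == "+", re.compile(pattern)))
    return rules


def accepts(rules: List[Tuple[bool, re.Pattern]], url: str) -> bool:
    """Assumed semantics: the first rule whose regex matches decides; no match rejects."""
    for include, pattern in rules:
        if pattern.search(url):
            return include
    return False
```

Note that the dots in `nutch.apache.org` are unescaped, so each matches any character; writing `nutch\.apache\.org` would be slightly stricter.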

mkdir -p urls

Then, under this urls folder, make a text file listing the start URLs you would like to crawl from, one URL per line.
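The same setup can be scripted. This Python sketch creates the `urls` directory (like `mkdir -p`) and writes one start URL per line. The helper name `write_seed_file` and the default file name `seed.txt` are our own choices; the slide does not fix a file name.

```python
import os
from typing import Iterable


def write_seed_file(directory: str, urls: Iterable[str], filename: str = "seed.txt") -> str:
    """Create `directory` if needed (like mkdir -p) and write one start URL per line."""
    os.makedirs(directory, exist_ok=True)
    path = os.path.join(directory, filename)
    with open(path, "w", encoding="utf-8") as f:
        for url in urls:
            f.write(url.strip() + "\n")  # strip stray whitespace around each URL
    return path
```

For example, `write_seed_file("urls", ["http://nutch.apache.org/"])` produces `urls/seed.txt` containing that one line.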

bin/nutch crawl urls -dir crawl -depth 3 -topN 5
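A rough mental model of the two numeric flags: `-depth 3` asks for three generate/fetch rounds starting from the seed URLs, and `-topN 5` caps how many URLs are fetched in each round. The Python toy below simulates that model over an in-memory link graph. It is not Nutch's code: Nutch ranks candidate URLs by score when choosing the top N, whereas this sketch simply takes them in sorted order. The function name and the graph are hypothetical.

```python
from typing import Dict, List, Set


def toy_crawl(seeds: List[str], links: Dict[str, List[str]],
              depth: int, top_n: int) -> List[List[str]]:
    """Return the URLs fetched in each of at most `depth` rounds.

    `links` is a hypothetical stand-in for the web: it maps a page to its outlinks.
    """
    fetched: Set[str] = set()
    frontier: Set[str] = set(seeds)  # known but not yet fetched (like a crawl db)
    rounds: List[List[str]] = []
    for _ in range(depth):
        # Stand-in for score-based selection: take the first top_n in sorted order.
        batch = sorted(frontier)[:top_n]
        if not batch:
            break  # nothing left to fetch
        rounds.append(batch)
        for url in batch:
            fetched.add(url)
            frontier.discard(url)
        # "Parse" the fetched pages and add newly discovered links to the frontier.
        for url in batch:
            for out in links.get(url, []):
                if out not in fetched:
                    frontier.add(out)
    return rounds
```

With `links = {"a": ["b", "c", "d"], "b": ["e"], "c": ["e", "f"]}`, `toy_crawl(["a"], links, depth=3, top_n=2)` fetches `a` in round one, `b` and `c` in round two, and `d` and `e` in round three; `f` is discovered but never fetched because the rounds run out.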

java -jar start.jar

http://localhost:8983/solr/admin

http://localhost:8983/solr/admin/stats.jsp

As you can see, this jar contains a simple example search server that can be created with Solr.

cp ${NUTCH_RUNTIME_HOME}/conf/schema.xml ${APACHE_SOLR_HOME}/example/solr/conf/

bin/nutch solrindex http://127.0.0.1:8983/solr/ crawl/crawldb crawl/linkdb crawl/segments/*

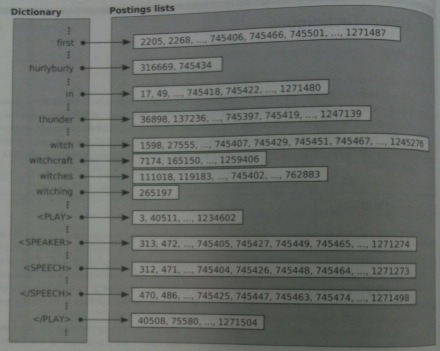

nextPhrase(t[1], t[2], ..., t[n], position)
{
    v := position
    for i := 1 to n do
        v := next(t[i], v)
    if v == infty then    // infty represents a position after the end of the posting list
        return [infty, infty]
    u := v
    for i := n-1 downto 1 do
        u := prev(t[i], u)
    if v - u == n - 1 then
        return [u, v]
    else
        return nextPhrase(t[1], t[2], ..., t[n], u)
}
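The pseudocode above relies on `next(t, current)` and `prev(t, current)`, which return the first occurrence of term t after, respectively the last occurrence before, a given position. Below is a minimal Python sketch of both plus `nextPhrase`, assuming a single document indexed by token position (starting at 1) with each posting list stored as a sorted Python list. Binary search via `bisect` is our choice for `next` and `prev`; other strategies, such as galloping search, would also work. The recursive call is written as a loop, which computes the same result without growing the call stack. Class and function names are our own.

```python
import math
from bisect import bisect_left, bisect_right
from typing import Dict, List, Sequence, Tuple

INF = math.inf  # plays the role of "infty"; -INF means "before the start"
Interval = Tuple[float, float]


class PositionalIndex:
    """Maps each term to the sorted positions (1-based) where it occurs."""

    def __init__(self, tokens: Sequence[str]) -> None:
        self.postings: Dict[str, List[int]] = {}
        for pos, tok in enumerate(tokens, start=1):
            self.postings.setdefault(tok, []).append(pos)  # appended in increasing order

    def next(self, term: str, current: float) -> float:
        """Smallest position of `term` strictly greater than `current`, else INF."""
        plist = self.postings.get(term, [])
        i = bisect_right(plist, current)
        return plist[i] if i < len(plist) else INF

    def prev(self, term: str, current: float) -> float:
        """Largest position of `term` strictly less than `current`, else -INF."""
        plist = self.postings.get(term, [])
        i = bisect_left(plist, current)
        return plist[i - 1] if i > 0 else -INF


def next_phrase(index: PositionalIndex, terms: Sequence[str], position: float) -> Interval:
    """First occurrence [u, v] of the phrase `terms` with u > position."""
    n = len(terms)
    while True:
        v = position
        for t in terms:  # forward pass: earliest possible end of the phrase
            v = index.next(t, v)
        if v == INF:
            return (INF, INF)
        u = v
        for t in reversed(terms[:-1]):  # backward pass: latest start consistent with v
            u = index.prev(t, u)
        if v - u == n - 1:
            return (u, v)
        position = u  # same as the recursive call nextPhrase(..., u)


def all_phrase_occurrences(index: PositionalIndex, terms: Sequence[str]) -> List[Interval]:
    """Enumerate every occurrence by restarting next_phrase after each match."""
    results: List[Interval] = []
    u, v = next_phrase(index, terms, -INF)
    while u != INF:
        results.append((u, v))
        u, v = next_phrase(index, terms, u)
    return results
```

Calling `next_phrase` repeatedly, each time starting from the `u` it just returned, enumerates every occurrence of the phrase; `all_phrase_occurrences` does exactly that.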