Outline

- Byzantine Agreement Protocol

- Quiz

- Map Reduce and PRAMs

Randomized Algorithm for Byzantine Agreement

- On Wednesday, we began going over the Byzantine Agreement Problem, we are now going to discuss one solution.

- In Byzantine Agreement Problem, we have `n` asynchronous processors (generals) which may communicate in rounds their votes on whether to carry

out some action (attack/not-attack).

- At the end each round, each processor sends its vote to each other processor.

- Some number `t` of processors may be faulty.

- We want a protocol such that after some number of rounds all the good processors agree on an action. Further, if at the start of the procedure all the good

processors were thinking the same thing, then at the end of our protocol they wouldn't have changed their decision.

- We will assume that at the start of each round a trusted third party flips a fair coin.

- Any of the processors have access to this coin.

- We will show having access to such a coin will allow us to solve the problem in an expected constant number of rounds.

- We will assume that the number of faulty processors is a number `t < n/8`.

- Each round a good processor sends the same vote to all the other processors.

- A faulty processor may send arbitrary or even inconsistent votes to each other processor.

- Let `L=((5n)/8)+1` , `H=((3n)/4)+1` , and `G=(7n)/8`.

What the ith Processor does (if it is good).

Input: A value for b[i], our current decision choice.

Output: A decision d[i].

1. vote = b[i].

2 For each round, do

3. Broadcast vote;

4. Receive votes from all the other processors.

5. Set maj = majority (0 or 1) value among the votes cast

6. Set tally = the number of votes that maj received.

7. If coin = heads then set threshold = L; else set threshold = H

8. If tally >= threshold then set vote = maj; else vote = 0

9. If tally >= G then set d[i] = maj permanently.

Analysis

- First, if all processors begin the round with the same vote, then 9 will apply and so this value will be the value eventually settled upon.

- Suppose the processors begin the round with different values for the vote.

- If two processors compute different values for maj in Step 5, since at most 1/8 of the processors are faulty, the tally does not exceed threshold regardless of whether L or H was chosen as threshold. So all good processors would set their votes to 0 and an agreement would be reached.

- We say a faulty processor foils a threshold `x in {L,H}` in a round if, by sending different messages to the good processors, they cause tally to exceed `x` for at least one good processor, and to be no more than `x` for at least one good processor.

- Since the difference between the two possible thresholds is at least `t`, the faulty processor can foil at most one threshold in a round.

- Since the threshold is chosen with equal probability from `{L,H}`, it is foiled with probability at most `1/2`.

- Thus, the expected number of rounds before we have an unfoiled threshold is at most `2`. If the threshold is not foiled then all good processors compute the same vote in Step 8.

- Since 7/8th of the processors are good, this ensure that by at most the next round 9 applies.

Quiz

Which of the following statements is true?

- We showed in our analysis of the parallel MIS problem that if a vertex was good the odds that one of its

neighbors was marked was at least `1- exp(-1/6)`.

- Our synchronous CCP protocol made explicit use of timestamps.

- We showed it was impossible for case 2 (c) of the Asynchronous CCP protocol to ever occur.

Parallel and Distributed Algorithms so Far

- So far we have looked two models for parallel processing: Dynamic Multithreading concurrency platforms and PRAMs.

- We have just considered two distributed algorithms one for Async-CCP and one for Byzantine Agreement.

- These can all be viewed as "software-oriented" in some sense: PRAM algorithms being machine code to be run on machines with many processors.

- There are also "hardware" oriented models such as circuits or sorting networks. These might consist of networks which are built-out of gates.

- In the circuit setting the gates might compute boolean functions of their inputs. I.e., they might compute AND, OR, or NOT gates. In the sorting setting, the gates

might compare two inputs and output the input values in sorted order.

- The network or circuit is then a directed acyclic graph. Inputs are variables attaches to nodes of in-degree 0, outputs are nodes of out-degree 0.

- The "time/level" at which a gate can operate is when all of its inputs have acquired a value. This would be some constant times the length of the

longest path to a gate.

- Gates of the same level can operate in parallel.

- Although both circuits and sorting networks are important models of parallel computation we won't talk about them more this semester.

- Instead, we are going to introduce a more recent famous parallel distributed model of computation.

Map Reduce

- Map Reduce was first described in a published paper by Dean and Ghemawat in 2004, but

had been used at Google for some time before this.

- At a high-level, the basic set up of map reduce is a multi-round computation.

- In a given round, jobs are mapped to various machines.

- These machines then perform some action on the mapped data.

- The machines then perform a "shuffle" phase in which data might be grouped by a key value and then sent to a reducer machine.

- The reducer does some operation on the grouped values to produce a result for the next round, or maybe a final result.

- Karloff, Suri, and Vassilvitskii in 2010 have formalized this model more precisely and

shown that a class of PRAM algorithms which can be simulated by Map Reduce and have also shown to what extent MapReduce jobs can be simulated by PRAMs.

- Next day, I would like to go over their proof. For the rest of today, I would like to go over some examples, and some background definitions.

Example of How Map Reduce Might be Useful

- Suppose we run a online video streaming service, where we allow users to upload videos of various lengths.

- Videos might not get uploaded in a format which is convenient for streaming, so your site recodes the videos.

- Recoding is expensive and reasonably slow, so you don't want to do it on a single machine.

- Even if you map each uploaded video to a collection of machines for recoding, if someone uploads a long video, you don't want to hog the

resources of a single machine to only recode that video while other machines go idle.

- To solve these problems, when videos are uploaded, you first segment them into equal size chunks. So you have a mapper that maps

input files to machines for segmentation. Each segment produced has a key consisting of what video and where in that video it belonged to.

- Then these segments are then mapped to machines in a load balanced for recoding.

- In a shuffle phase (key, (recoded video segments)) are sent and grouped together on a reducer machine.

- The reducer then joins these segments to produce a total recoded video.

The Basic Framework

- MapReduce was inspired by the map and reduce functions found in functional programming languages such as Lisp.

- The map function takes as its argument a function `f` and a list of elements `l = langle l_1, ..., l_n rangle`. It returns a new list

`map(f,l) = langle f(l_1), ..., f(l_n) rangle`.

- The reduce function takes a function `g` and a list of elements `l = langle l_1, ..., l_n rangle`. It returns a new element `l'` such that

`l' = \r\e\d\u\c\e(g,l) = g(l_1,g(l_2, g(l_3, ... )))`.

- From a high-level view, a MapReduce programs creates a sequence of key/value pairs, performs some computations on them, and outputs another sequence of key/value pairs.

- Keys and values are often strings, but may be of any data type.

Distinct Phases of a MapReduce Job

- MapPhase, key/value pairs are read from the input and the map function is applied to each of them individually. The function is of the general form:

`map: langle k, v rangle |-> langle langle k_1, v_1 rangle, langle k_2, v_2 rangle, ...,rangle`

- Shuffle Phase, the pairs produced during the map phase are sorted by their key, and all values for the same key are grouped together.

- Reduce Phase, the reduce function is applied to each key and its values.

`\r\e\d\u\c\e: langle k, langle v_1, v_2, ... rangle rangle |-> langle k, langle v_1', v_2', ... rangle rangle`

That is, for each key the reduce function processes the list of associated values and outputs another list of values. The output values and their number may not be the same as the input values.

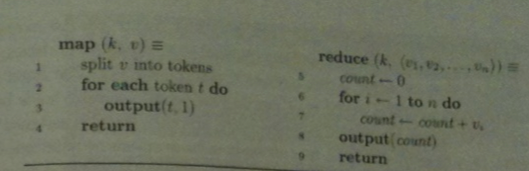

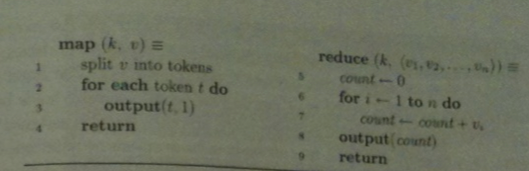

Example MapReduce Job for Counting

- In the job below, we want to produce the number of each type of token in some collection of documents. I.e., for each word in these documents the number of occurrences of that word.

- Our map sends the different documents to different machines.

- `k` is a key for the document, `v` is its text. On a machine, we then split the document into tokens (words) and output pairs `(t,1)`.

- During a shuffle step, all pairs with the same value of `t` would get sent to the same machine and grouped together in a list.

- Then the reduce procedure below would then count the `1`'s in this list.

Parallelizing Map Reduce

- Both Map and Reduce jobs can be parallelized, that is, executed on many machines.

- Map jobs are typically broken into smaller pieces called map shards each of 16 or 64 MB of data.

- These are treated independently.

- The output of these then is broken into reduce shards and sent to reduce workers.

- Assignment of a key-value to a given reduce shard can be done by applying a hash value to its key.

Combiners

- In many MapReduce jobs, a single map shard may produce a large number of key/value pairs for the same key. For example, the word "the" might account for 6-7% of the output in our word counting example.

- Forwarding all these tuples to the reduce worker responsible for "the" wastes network and storage resources and also causes load imbalances.

- To overcome this problem each map worker can also do the shuffle/reduce phase on its portion then send the result to the corresponding reduce worker.

- This kind of reduce that is applied to a map shard is called a combiner.

Fault Tolerance

- If a machine computing a map shard fails the key-values can be sent to a new machine and that shard can be recomputed without having to recompute any of the other map shard jobs.

- At the reduce level, a given reduce shard might depend on keys from several map jobs.

- To prevent having to recompute each of these dependencies on failure, typically, the inputs to a reduce shard are stored in distributed file system, so in the event of a failure they can be re-read from there.

Mappers and Reducers - (Formal Definition)

Recall a multiset is an unordered collection of objects where repeats are allowed.

Definition. A mapper is a (possibly randomized) function that takes as input one ordered `langle key; value rangle` pair of binary strings.

As output the mapper produces a finite multiset of new `langle key; value rangle` pairs.

Definition. A reducer is a (possibly randomized) function that takes as input a binary string `k` which is the key, and a sequence of values `v_1, v_2, ...` which are also binary strings. As output, the reducer produces a multiset of pairs of binary strings `langle k; v_(k,1)rangle , langle k; v_(k,2)rangle, langle k; v_(k,3)rangle, ...` The key in the output tuples is identical to the key in the input tuple.

So we allow mappers to manipulate keys arbitrarily, but reducers cannot change the keys at all.

A Map Reduce Program

- A map reduce program, `P`, consists of a sequence `langle m[1], r[1], m[2], r[2], ..., m[R], r[R] rangle` of mappers and reducers.

- The program input is a multiset of `langle key; value rangle` pairs denoted by `U[0]`.

- On input `U[0]`, `P`, executes as follows:

For i = 1, 2, ..., R do:

1. EXECUTE MAP: Feed each pair (k;v) in U[i-1] to mapper m[i], and run it.

This generates a sequence (k[1]; v[1]), (k[2]; v[2]),... Let U'[i]

be the multiset of (key; values) pairs output by m[i], that is

U'[i] = union over (k;v) in U[i-1] of m[i]((k;v)).

2. SHUFFLE: For each k, let V[k][i] be the multiset of values v[j]

such that (k;v[j]) is in U'[i]. I.e., in this step we compute the

array V[k][i]

3. EXECUTE REDUCE: For each k, feed k and some arbitrary permutation

of V[k][i] to a separate instance of reducer r[i] and run it.

The reducer will generate a sequence of tuples (k; v'[1]), (k; v'[2]),...

Let U[i] be the multiset of (key; value) pairs output by r[i]. That is,

U[i] = union over k of r[i]((k; V[k][i]))

- The computation halts after the last reducer halts.

- The point of this set up is that it makes parallelism easy:

- Since each mapper `m[i]` only operates on one tuple at a time, the system can have many instances

of of `m[i]` operating on different tuples in `U[i-1]` in parallel.

- After mapping, the system partitions the tuple output based on their key.

- Since the reducer `r[i]` only operates on one part of this partition, the system can have many instances of `r[i]`

running on different parts in parallel.

Assumptions of the Model

- Map Reduce jobs are used when we have severe restrictions on memory, processing, and time. Let's consider these briefly

before we define the class MRC.

- Memory

- We assume the input of the whole map reduce program is too big to fit into memory on any single machine.

That is, we require that the input to any mapper or reducer be sublinear in the size of the data. This prevents the model from being trivial

where we just have a single mapper and reducer on the same machine.

- Machines

- An algorithm that require `n^3` machines in the size `n` of the web would not be practical. So we assume the

number of machines is sublinear in the data size.

- Time

- We do not restrict the power of an individual reducer, but we require that both the map and the reduce functions run in

time polynomial in the original input length. We are also more interested in programs that require a small number of map reduce rounds,

because shuffling is a time consuming operation.

The Map Reduce Class.

Given a program input, a sequence of pairs `(k[j], v[j])`, for `j=1,2,3,...` where `k[j]` and `v[j]` are binary strings,

we define the length of this input to be `n = sum_j(|k[j]| + |v[j]|)`, where `|a|` denotes the length of the binary string `a`.

Definition. Fix an `epsilon > 0`. An algorithm in `MRC^k` consists of a sequence

`langle m[1], r[1], m[2], r[2], ..., m[R], r[R] rangle` of operations which outputs the correct answer with probability at least `3/4` where:

- Each `m[i]` is a randomized mapper implemented by a RAM with `O(log n)` length words, that uses `O(n^(1-epsilon))` space

and time polynomial in `n`.

- Each `r[i]` is a randomized reducer implemented by a RAM with `O(log n)`-length words, that uses `O(n^(1-epsilon))` space

and time polynomial in `n`.

- The total space `sum_((k;v) in U'[i])(|k| +|v|)` used by `(key; value)` pairs output by `m[i]` is `O(n^(2-2epsilon))`

- The number of rounds `R= O(log^k n)`.

We define `MRC = cup_k MRC^k` and we define `DMRC` in an analogous fashion where we require our machines and the above operations to be deterministic.

One key thing to note is we allow the mappers and reducers to run in time polynomial in `n` not polynomial in the length of the input they receive.

DMRC is in P

Theorem. Languages in DMRC can be decided by RAMs running in polynomial time and using at most `O(n^2 log n)` space.

Proof. The idea is that we just want to compute all of the map reduce steps on a single machine. Note each mapper or reducer

from the definition runs in at most polynomial time and uses at most sublinear in `n` space. We require the space used by these

machines in round `i` to be sub-linear in the original input, not in the output of round `i-1`. In a given round

the total output is sub-quadratically many key value pairs. Let `p(n)` be a polynomial bounding the run time of any mapper or reducer.

So we could run each mapper on a single machine in a serial fashion, get their total outputs and use those to run each reducer serially on these outputs to generate the input for the next round. To simulate a single round would take time `O(n^2 cdot p(n))`, simulating all rounds would take time `O(log^k n cdot n^2 cdot p(n))`. We only need to keep the previous rounds output in memory at an given time, so we get the space bound.

Connections between NC and DMRC

The paper shows the following result using a padding argument on a version of the CIRCUIT VALUE PROBLEM. We skip the proof but state the result:

Theorem. If `P ne NC` then `DMRC` is not contained in `NC`.