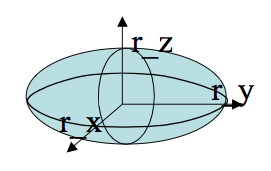

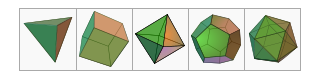

Finish Textures, Polyhedra, Quadrics, Fractals

CS116b/CS216

Chris Pollett

Feb 12, 2014

    glBegin(GL_TRIANGLE_FAN);
    // a sequence of glVertex* calls (here * is the type suffix, like f for float)
    glEnd();

Other possibilities are: GL_TRIANGLES, GL_TRIANGLE_STRIP, GL_QUADS, etc.
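To make the fan mode concrete, here is a minimal sketch in plain C of how a list of n fan vertices is grouped into triangles: every triangle shares vertex 0, and triangle k uses vertices 0, k+1, and k+2, so n vertices give n-2 triangles. The helper names `fan_triangle_count` and `fan_triangle` are hypothetical, introduced only for illustration; they are not part of OpenGL, and the sketch deliberately makes no GL calls so it can be compiled without a rendering context.

```c
#include <stddef.h>

/* Hypothetical helper (not an OpenGL function): number of triangles
   produced by a triangle fan of n vertices. Fewer than 3 vertices
   produce no triangle. */
size_t fan_triangle_count(size_t n)
{
    return n < 3 ? 0 : n - 2;
}

/* Hypothetical helper (not an OpenGL function): store the vertex
   indices of the k-th triangle (0-based) of an n-vertex fan in out.
   Returns 1 on success, 0 if k is out of range. */
int fan_triangle(size_t n, size_t k, size_t out[3])
{
    if (k >= fan_triangle_count(n)) {
        return 0;
    }
    out[0] = 0;     /* every triangle shares the first vertex */
    out[1] = k + 1; /* previous rim vertex */
    out[2] = k + 2; /* current rim vertex */
    return 1;
}
```

For example, a fan of 5 vertices yields 3 triangles with indices (0,1,2), (0,2,3), and (0,3,4). With GL_TRIANGLES, by contrast, each consecutive group of three vertices forms an independent triangle, so no vertices are shared.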